These are my notes from the 2025 AWS EKS Hands-on Study group.

Reference page for this lab: https://aws-ia.github.io/terraform-aws-eks-blueprints/patterns/blue-green-upgrade/

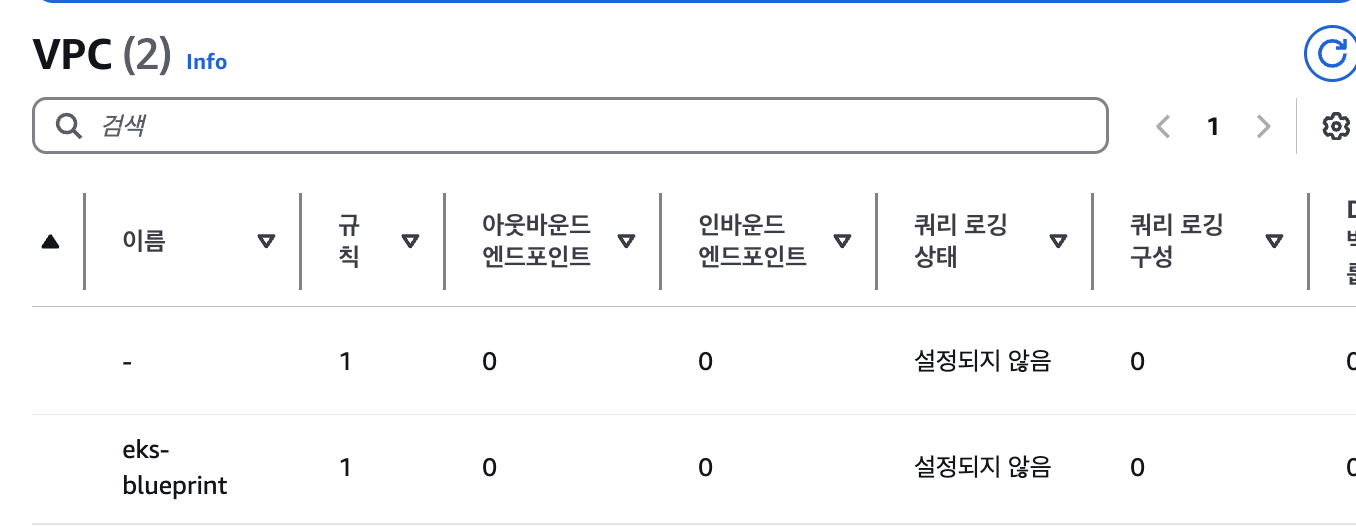

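Blue-green migration lab

What is a blue-green migration?

It is a deployment strategy for achieving zero-downtime releases: two nearly identical production environments (Blue and Green) are run side by side so that a new version can be rolled out safely while keeping the option to roll back.

With EKS Blueprints, the two environments can be two cluster versions or two deployment versions, and both are managed from the same Terraform code.

Prerequisites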

aws secretsmanager create-secret \
  --name github-blueprint-ssh-key \
  --secret-string "$(cat ~/.ssh/id_rsa.pub)"

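The guide expects the GitHub private SSH key to be stored as plain text in an AWS Secrets Manager secret. By default that secret is named github-blueprint-ssh-key, but the name can be changed through the Terraform variable aws_secret_manager_git_private_ssh_key_name in terraform.tfvars.example. Note that the command above uploads id_rsa.pub, which is the public key, whereas the variable name calls for the private key.

To use the value given in the guide as-is, I created the secret in Secrets Manager.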
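Since the variable name refers to a private key, a quick sanity check before uploading can catch a mix-up between the key file and its .pub counterpart. The following is a minimal sketch; the helper name looks_like_private_key is hypothetical and not part of the guide, and it only inspects the first line of the file for a PEM/OpenSSH private key header.

```shell
# Hypothetical helper (not from the guide): succeeds only when the first line
# of the file looks like a PEM/OpenSSH private key header.
looks_like_private_key() {
  key_file="$1"
  [ -r "$key_file" ] || return 1
  head -n 1 "$key_file" | grep -q -- '-----BEGIN .*PRIVATE KEY-----'
}

# Possible usage (left commented out because it needs AWS credentials):
# if looks_like_private_key ~/.ssh/id_rsa; then
#   aws secretsmanager create-secret \
#     --name github-blueprint-ssh-key \
#     --secret-string "$(cat ~/.ssh/id_rsa)"
# fi
```

A public key file starts with a key type such as ssh-rsa, so it fails this check.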

cat ../terraform.tfvars.example

# You should update the below variables

aws_region = "ap-northeast-2"

environment_name = "eks-blueprint"

hosted_zone_name = "<the domain you created>"

eks_admin_role_name = "Admin" # Additional role admin in the cluster (usually the role I use in the AWS console)

# EKS Blueprint Workloads ArgoCD App of App repository

gitops_workloads_org = "git@github.com:aws-samples"

gitops_workloads_repo = "eks-blueprints-workloads"

gitops_workloads_revision = "main"

gitops_workloads_path = "envs/dev"

#Secret manager secret for github ssk jey

aws_secret_manager_git_private_ssh_key_name = "github-blueprint-ssh-key"

terraform.tfvars

cp terraform.tfvars.example terraform.tfvars

ln -s ../terraform.tfvars environment/terraform.tfvars

ln -s ../terraform.tfvars eks-blue/terraform.tfvars

ln -s ../terraform.tfvars eks-green/terraform.tfvars

Environment Stack

cd environment

terraform init

terraform apply

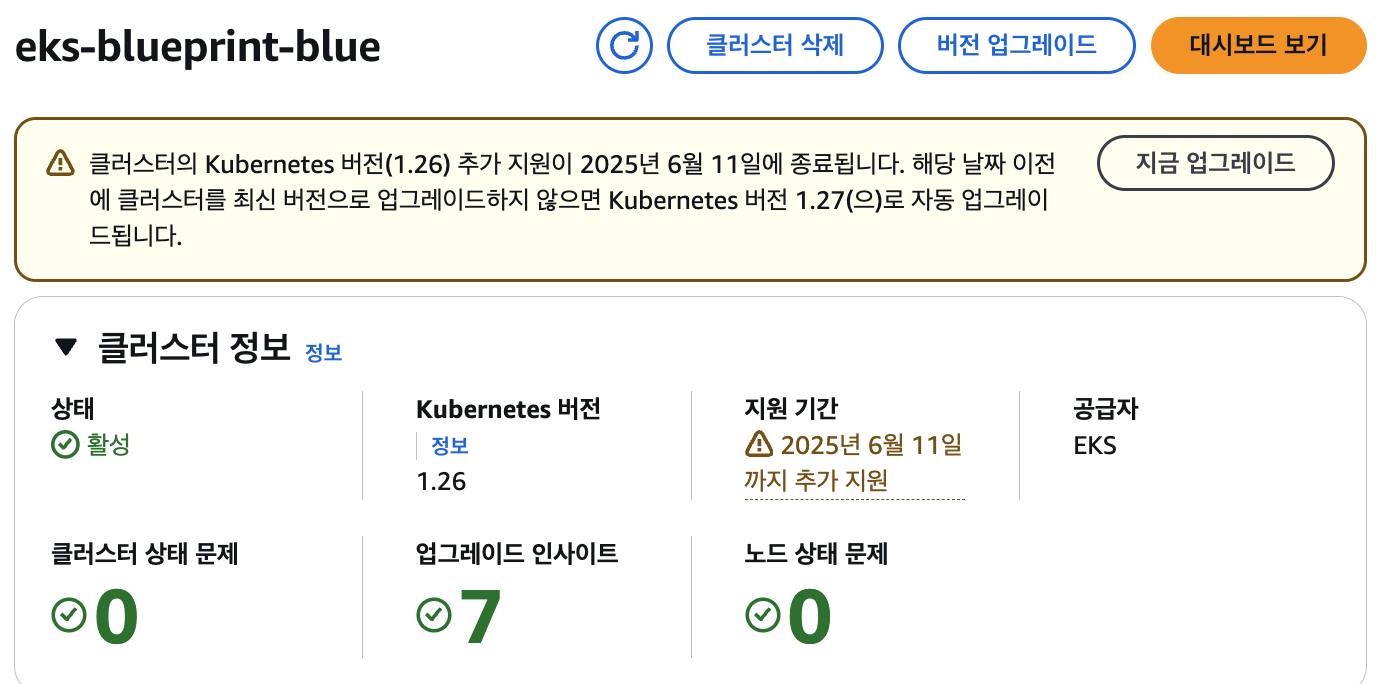

Creating the blue cluster

cd eks-blue

terraform init

terraform apply

access_argocd = <<EOT

export KUBECONFIG="/tmp/eks-********"

aws eks --region ap-northeast-2 update-kubeconfig --name eks-********

echo "ArgoCD URL: https://$(kubectl get svc -n argocd argo-cd-argocd-server -o jsonpath='{.status.loadBalancer.ingress[0].hostname}')"

echo "ArgoCD Username: admin"

echo "ArgoCD Password: $(aws secretsmanager get-secret-value --secret-id ****-admin-secret.******** --query SecretString --output text --region ap-northeast-2)"

EOT

configure_kubectl = "aws eks --region ap-northeast-2 update-kubeconfig --name eks-********"

eks_blueprints_dev_teams_configure_kubectl = [

"aws eks --region ap-northeast-2 update-kubeconfig --name eks-******** --role-arn arn:aws:iam::************:role/team-burnham-********",

"aws eks --region ap-northeast-2 update-kubeconfig --name eks-******** --role-arn arn:aws:iam::************:role/team-riker-********",

]

eks_blueprints_ecsdemo_teams_configure_kubectl = [

"aws eks --region ap-northeast-2 update-kubeconfig --name eks-******** --role-arn arn:aws:iam::************:role/team-ecsdemo-crystal-********",

"aws eks --region ap-northeast-2 update-kubeconfig --name eks-******** --role-arn arn:aws:iam::************:role/team-ecsdemo-frontend-********",

"aws eks --region ap-northeast-2 update-kubeconfig --name eks-******** --role-arn arn:aws:iam::************:role/team-ecsdemo-nodejs-********",

]

eks_blueprints_platform_teams_configure_kubectl = "aws eks --region ap-northeast-2 update-kubeconfig --name eks-******** --role-arn arn:aws:iam::************:role/team-platform-********"

eks_cluster_id = "eks-********"

gitops_metadata = <sensitive>

The deployment took about 25 minutes to complete!

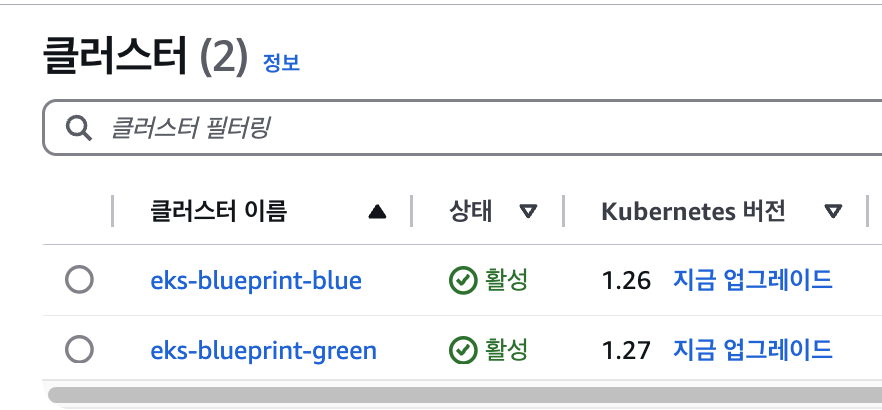

Creating the green cluster

cd eks-green

terraform init

terraform apply

access_argocd = <<EOT

export KUBECONFIG="/tmp/eks-********"

aws eks --region ap-northeast-2 update-kubeconfig --name eks-********

echo "ArgoCD URL: https://$(kubectl get svc -n argocd argo-cd-argocd-server -o jsonpath='{.status.loadBalancer.ingress[0].hostname}')"

echo "ArgoCD Username: admin"

echo "ArgoCD Password: $(aws secretsmanager get-secret-value --secret-id ****-admin-secret.******** --query SecretString --output text --region ap-northeast-2)"

EOT

configure_kubectl = "aws eks --region ap-northeast-2 update-kubeconfig --name eks-********"

eks_blueprints_dev_teams_configure_kubectl = [

"aws eks --region ap-northeast-2 update-kubeconfig --name eks-******** --role-arn arn:aws:iam::************:role/team-burnham-********",

"aws eks --region ap-northeast-2 update-kubeconfig --name eks-******** --role-arn arn:aws:iam::************:role/team-riker-********",

]

eks_blueprints_ecsdemo_teams_configure_kubectl = [

"aws eks --region ap-northeast-2 update-kubeconfig --name eks-******** --role-arn arn:aws:iam::************:role/team-ecsdemo-crystal-********",

"aws eks --region ap-northeast-2 update-kubeconfig --name eks-******** --role-arn arn:aws:iam::************:role/team-ecsdemo-frontend-********",

"aws eks --region ap-northeast-2 update-kubeconfig --name eks-******** --role-arn arn:aws:iam::************:role/team-ecsdemo-nodejs-********",

]

eks_blueprints_platform_teams_configure_kubectl = "aws eks --region ap-northeast-2 update-kubeconfig --name eks-******** --role-arn arn:aws:iam::************:role/team-platform-********"

eks_cluster_id = "eks-********"

gitops_metadata = <sensitive>

The green cluster is now active as well.

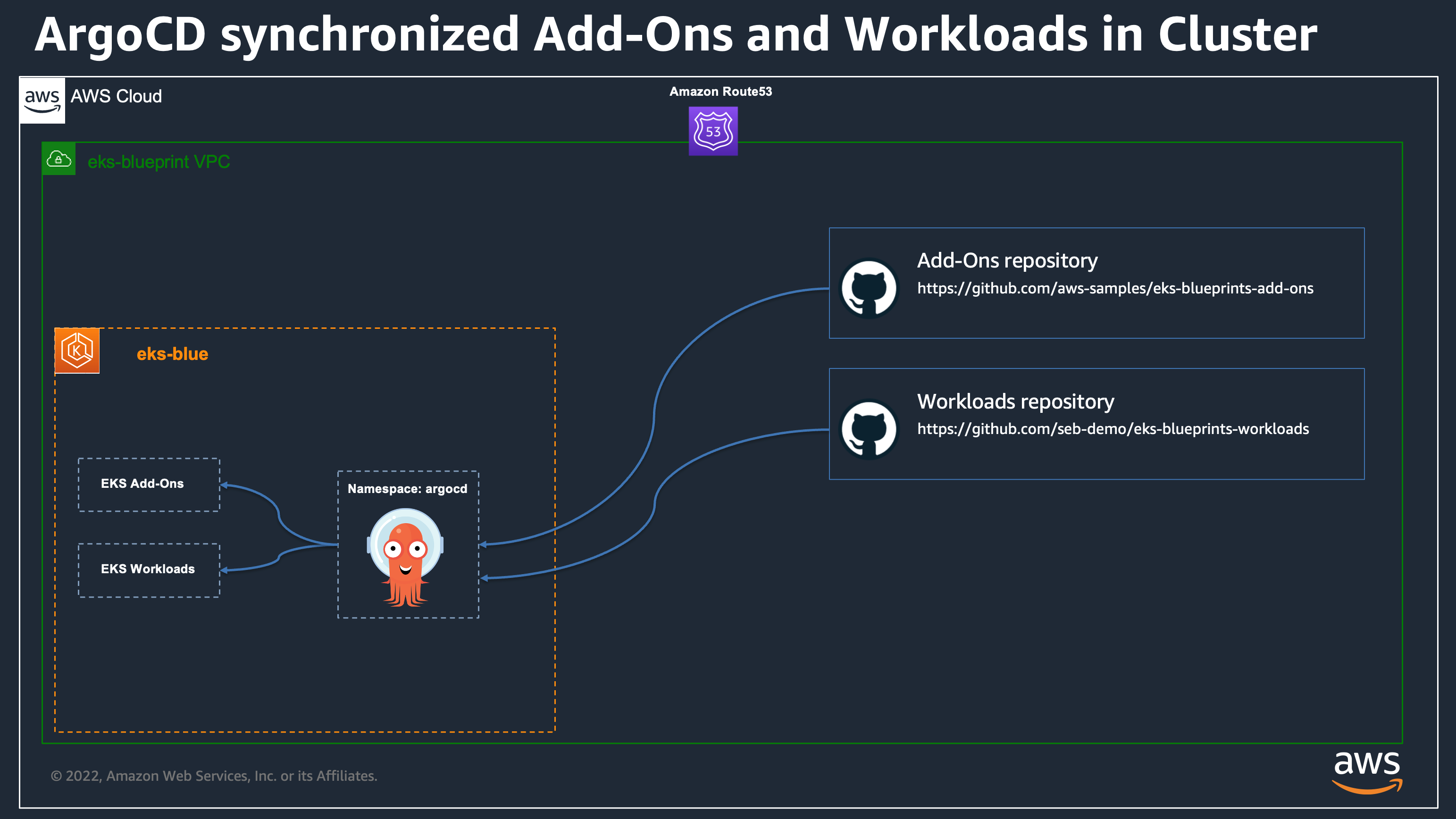

Changing the repo URL in the deployed ArgoCD

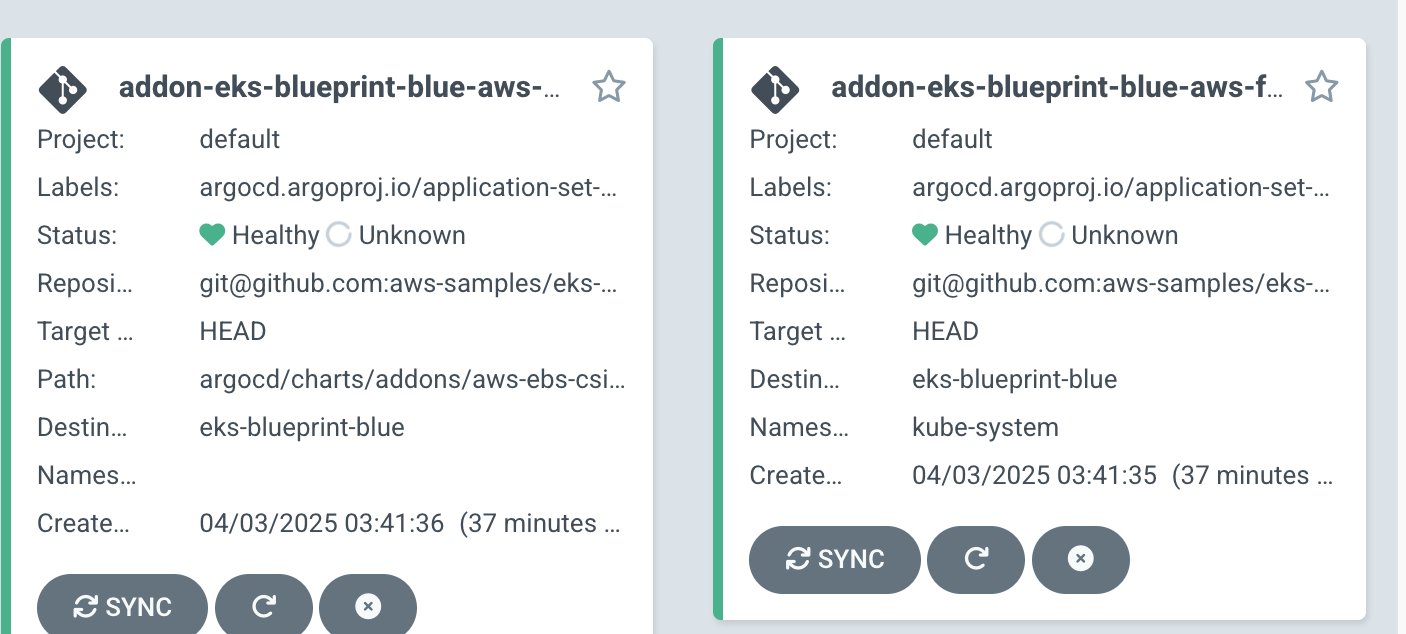

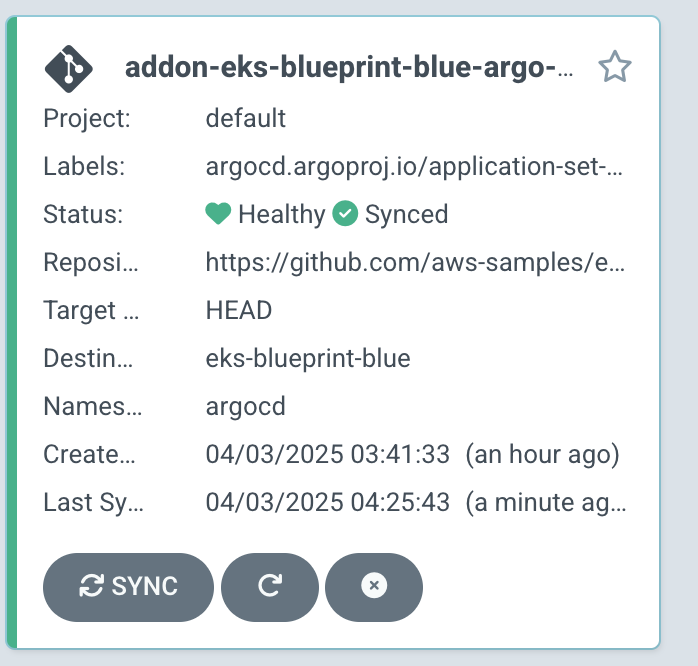

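I followed the lab step by step, yet none of the deployed pods showed up. Wondering why, I opened ArgoCD and found the cause:

because the stack was created with Terraform, the repo URL defaulted to the git (SSH) form instead of https. Argh!

So...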

kubectl patch secret eks-blueprint-blue -n argocd --type merge \
  -p '{"metadata": {"annotations": {"addons_repo_url": "https://github.com/aws-samples/eks-blueprints-add-ons.git"}}}'

Editing the metadata in each app individually is not the GitOps way, so I patched the secret itself instead.

Once the repo URL was fixed, the apps synced correctly.
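The difference between the two URL forms can be sketched in plain shell. The helper below is hypothetical (ssh_to_https is my own name, not something from the blueprint); it rewrites a git@host:org/repo address into the https form used in the patch and leaves any other input unchanged.

```shell
# Hypothetical helper: convert git@host:org/repo(.git) to https://host/org/repo.git.
# Anything that does not match the git@host:path shape is printed unchanged.
ssh_to_https() {
  url="$1"
  case "$url" in
    git@*:*)
      host="${url#git@}"   # strip the leading "git@"
      host="${host%%:*}"   # keep everything before the first ":"
      path="${url#*:}"     # keep everything after the first ":"
      path="${path%.git}"  # drop a trailing ".git" if present
      printf 'https://%s/%s.git\n' "$host" "$path"
      ;;
    *)
      printf '%s\n' "$url"
      ;;
  esac
}

ssh_to_https "git@github.com:aws-samples/eks-blueprints-add-ons"
```

The result could then be placed in the addons_repo_url annotation of the kubectl patch command.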

kubectl get po -A

NAMESPACE NAME READY STATUS RESTARTS AGE

amazon-cloudwatch aws-cloudwatch-metrics-clvpt 1/1 Running 0 11m

amazon-cloudwatch aws-cloudwatch-metrics-f92k6 1/1 Running 0 11m

amazon-cloudwatch aws-cloudwatch-metrics-jww4k 1/1 Running 0 11m

argocd argo-cd-argocd-application-controller-0 1/1 Running 0 12m

argocd argo-cd-argocd-applicationset-controller-55c4c79d49-hrrxs 1/1 Running 0 3h20m

argocd argo-cd-argocd-dex-server-6c5d66ddf7-lw8ls 1/1 Running 0 3h20m

argocd argo-cd-argocd-notifications-controller-69fd44d464-kzrxs 1/1 Running 0 3h20m

argocd argo-cd-argocd-redis-5f474f9d77-cchxz 1/1 Running 0 3h20m

argocd argo-cd-argocd-repo-server-544b5b8d66-5qspv 1/1 Running 0 93s

argocd argo-cd-argocd-repo-server-544b5b8d66-86jjn 1/1 Running 0 10m

argocd argo-cd-argocd-repo-server-544b5b8d66-p2lpx 1/1 Running 0 11m

argocd argo-cd-argocd-repo-server-544b5b8d66-rrmms 1/1 Running 0 12m

argocd argo-cd-argocd-repo-server-544b5b8d66-zr9lh 1/1 Running 0 92s

argocd argo-cd-argocd-server-dbbd888c8-9zlqw 1/1 Running 0 12m

cert-manager cert-manager-5c84984c7b-tftk7 1/1 Running 0 11m

cert-manager cert-manager-cainjector-67b46dfbc4-4vbbc 1/1 Running 0 11m

cert-manager cert-manager-webhook-557cf8b498-qrcs7 1/1 Running 0 11m

external-dns external-dns-6bc79b4c7f-l2w8n 1/1 Running 0 11m

external-secrets external-secrets-5f7fc744fb-ntn8p 1/1 Running 0 11m

external-secrets external-secrets-cert-controller-6ddf99cb9d-r7wrf 1/1 Running 0 11m

external-secrets external-secrets-webhook-64449c7c97-d6ccz 1/1 Running 0 11m

geolocationapi geolocationapi-7f6bfb647d-v52mm 2/2 Running 0 82s

geolocationapi geolocationapi-7f6bfb647d-vdtkb 2/2 Running 0 82s

geordie downstream0-7999f7bc68-nlsft 1/1 Running 0 109s

geordie downstream1-5ff6b6fd8c-k8pf8 1/1 Running 0 109s

geordie frontend-65766fdccc-lcrpf 1/1 Running 0 109s

geordie redis-server-76d7b647dd-28hlq 1/1 Running 0 109s

geordie yelb-appserver-56d6d6685b-mwzbc 1/1 Running 0 109s

geordie yelb-db-5dfdd5d44f-dvvvk 1/1 Running 0 109s

geordie yelb-ui-56545895f-4zh8r 1/1 Running 0 109s

ingress-nginx ingress-nginx-controller-85b4dd96b4-2lncv 1/1 Running 0 11m

ingress-nginx ingress-nginx-controller-85b4dd96b4-8cvdk 1/1 Running 0 11m

ingress-nginx ingress-nginx-controller-85b4dd96b4-xst7z 1/1 Running 0 11m

karpenter karpenter-5dfb76b559-2tgtr 1/1 Running 0 11m

karpenter karpenter-5dfb76b559-xg4vt 1/1 Running 0 11m

kube-system aws-for-fluent-bit-6pmdh 1/1 Running 0 12m

kube-system aws-for-fluent-bit-75k28 1/1 Running 0 12m

kube-system aws-for-fluent-bit-lcl44 1/1 Running 0 12m

kube-system aws-load-balancer-controller-7c68859454-2q5zn 1/1 Running 0 11m

kube-system aws-load-balancer-controller-7c68859454-mh9l9 1/1 Running 0 11m

kube-system aws-node-mzl6k 2/2 Running 0 3h35m

kube-system aws-node-ntwxl 2/2 Running 0 3h35m

kube-system aws-node-p8w8g 2/2 Running 0 3h35m

kube-system coredns-767f9b58bd-cfv4j 1/1 Running 0 3h36m

kube-system coredns-767f9b58bd-g7hjb 1/1 Running 0 3h36m

kube-system kube-proxy-g8c7g 1/1 Running 0 3h35m

kube-system kube-proxy-vsv65 1/1 Running 0 3h35m

kube-system kube-proxy-x4gmg 1/1 Running 0 3h35m

kube-system metrics-server-8794b9cdf-m9gkg 1/1 Running 0 11m

kyverno kyverno-admission-controller-757766c5f5-w6rr5 1/1 Running 0 10m

kyverno kyverno-background-controller-bc589cf8c-2whdj 1/1 Running 0 10m

kyverno kyverno-cleanup-admission-reports-29060370-w5hr7 0/1 Completed 0 7m30s

kyverno kyverno-cleanup-cluster-admission-reports-29060370-s8csj 0/1 Completed 0 7m30s

kyverno kyverno-cleanup-controller-5c9fdbbc5c-2sskr 1/1 Running 0 10m

kyverno kyverno-reports-controller-6cc9fd6979-bzw9v 1/1 Running 0 10m

team-burnham burnham-869784cc9c-5ghwd 1/1 Running 0 117s

team-burnham burnham-869784cc9c-ds9kz 1/1 Running 0 117s

team-burnham burnham-869784cc9c-qgbck 1/1 Running 0 117s

team-burnham nginx-5769f5bf7c-d5s7s 1/1 Running 0 117s

team-riker deployment-2048-85c46566b7-j9r2s 1/1 Running 0 116s

team-riker deployment-2048-85c46566b7-kr89z 1/1 Running 0 116s

team-riker deployment-2048-85c46566b7-tf5kp 1/1 Running 0 116s

team-riker guestbook-ui-6bb6d8f45d-lvf25 1/1 Running 0 116s

At this point the apps are finally deployed properly and the pods show up as expected.

Checking the burnham pods

kubectl get deployment -n team-burnham -l app=burnham

NAME READY UP-TO-DATE AVAILABLE AGE

burnham 3/3 3 3 2m45s

nginx 1/1 1 1 2m45s

kubectl get pods -n team-burnham -l app=burnham

NAME READY STATUS RESTARTS AGE

burnham-869784cc9c-5ghwd 1/1 Running 0 2m54s

burnham-869784cc9c-ds9kz 1/1 Running 0 2m54s

burnham-869784cc9c-qgbck 1/1 Running 0 2m54s

kubectl logs -n team-burnham -l app=burnham

2025/04/02 19:37:21 {url: / }, cluster: eks-blueprint-blue }

2025/04/02 19:37:31 {url: / }, cluster: eks-blueprint-blue }

2025/04/02 19:37:36 {url: / }, cluster: eks-blueprint-blue }

2025/04/02 19:37:46 {url: / }, cluster: eks-blueprint-blue }

2025/04/02 19:37:51 {url: / }, cluster: eks-blueprint-blue }

2025/04/02 19:38:01 {url: / }, cluster: eks-blueprint-blue }

2025/04/02 19:38:06 {url: / }, cluster: eks-blueprint-blue }

2025/04/02 19:38:16 {url: / }, cluster: eks-blueprint-blue }

2025/04/02 19:38:21 {url: / }, cluster: eks-blueprint-blue }

2025/04/02 19:38:31 {url: / }, cluster: eks-blueprint-blue }

curl -s ***.ap-northeast-2.elb.amazonaws.com | grep CLUSTER_NAME | awk -F "<span>|</span>" '{print $4}'

eks-blueprint-blue
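To see why the awk command prints the fourth field, it helps to run the same pipeline against a single line of HTML. The sample line below is an assumption made up for illustration (the real page markup is not shown in these notes); with <span> and </span> as field separators, the second span's content ends up in $4.

```shell
# Same filter as in the curl command above, wrapped in a function.
extract_cluster_name() {
  grep CLUSTER_NAME | awk -F "<span>|</span>" '{print $4}'
}

# Hypothetical sample line, not copied from the real page.
# Fields: $1="<h3>", $2="CLUSTER_NAME", $3=": ", $4="eks-blueprint-blue", $5="</h3>"
sample_html='<h3><span>CLUSTER_NAME</span>: <span>eks-blueprint-blue</span></h3>'

printf '%s\n' "$sample_html" | extract_cluster_name
```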

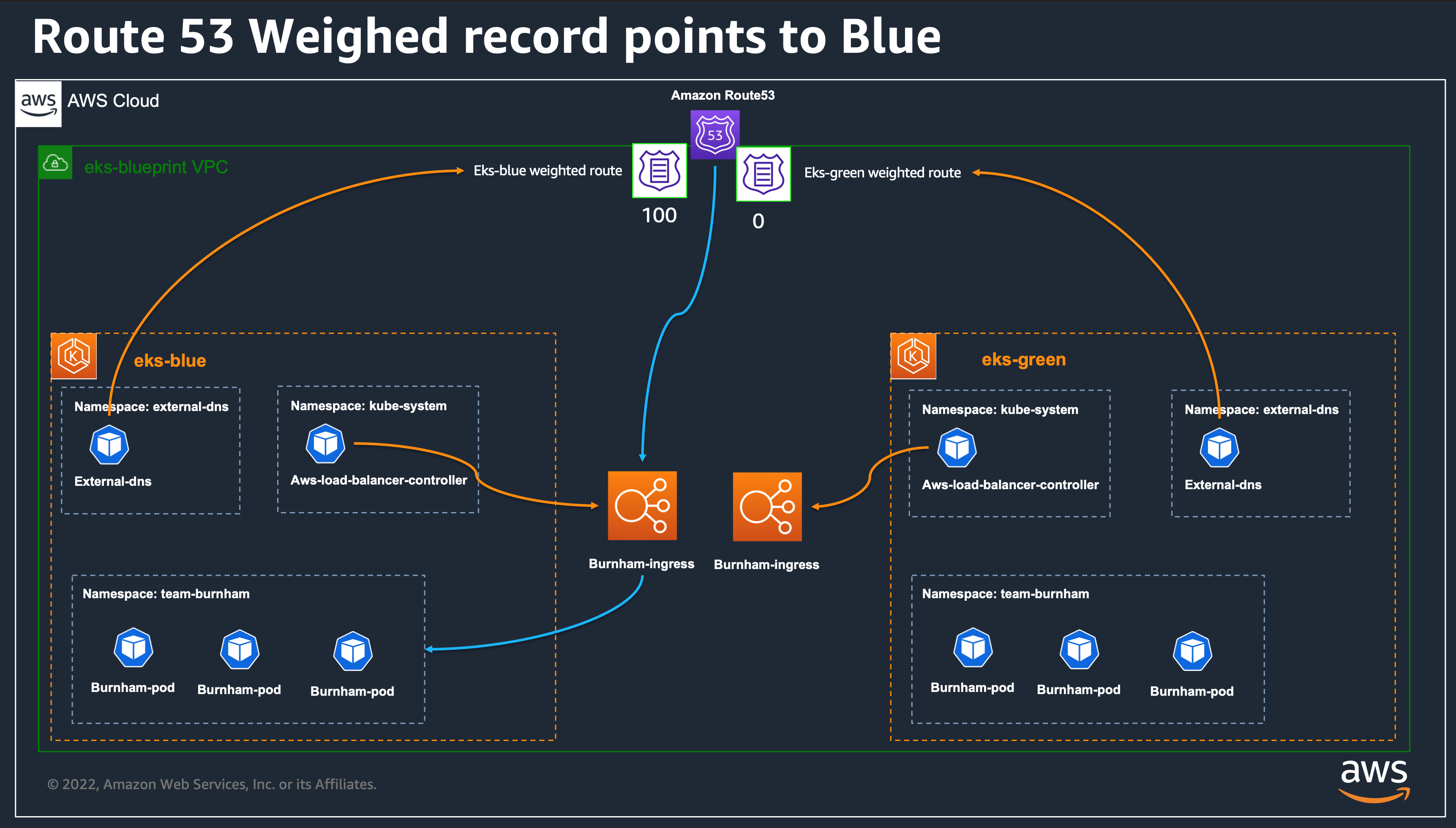

Blue -> green migration

cat eks-blue/main.tf | grep route

argocd_route53_weight = "100"

route53_weight = "100"

ecsfrontend_route53_weight = "100"

cat eks-green/main.tf | grep route

argocd_route53_weight = "0" # We control with theses parameters how we send traffic to the workloads in the new cluster

route53_weight = "0"

ecsfrontend_route53_weight = "0"

At the moment the DNS record weights are 100 for eks-blue and 0 for eks-green, so all traffic is routed to eks-blue only.
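With Route 53 weighted routing, each record receives a share of traffic equal to its weight divided by the sum of the weights in the group. The sketch below works through that arithmetic for the current values; traffic_share is a hypothetical helper, and it uses integer division, so percentages are rounded down.

```shell
# traffic_share WEIGHT TOTAL
# Prints the integer percentage of traffic a record with WEIGHT receives
# when the weights of all records in the group add up to TOTAL.
traffic_share() {
  weight="$1"
  total="$2"
  if [ "$total" -eq 0 ]; then
    echo "sum of weights is 0; share is undefined in this sketch" >&2
    return 1
  fi
  echo $(( 100 * weight / total ))
}

blue=100
green=0
total=$(( blue + green ))
echo "eks-blue:  $(traffic_share "$blue" "$total")%"
echo "eks-green: $(traffic_share "$green" "$total")%"
```

Raising the green weight while lowering the blue one shifts the split gradually, which is what the weight parameters in main.tf control.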

main.tf

module "eks_cluster" {
  source = "../modules/eks_cluster"

  aws_region      = var.aws_region
  service_name    = "green"
  cluster_version = "1.27"

  argocd_route53_weight = "0"
  route53_weight        = var.route53_weight
}

variables.tf

variable "route53_weight" {
  type        = string
  description = "Route53 weight for DNS records"
  default     = "50"
}

eks-blueprint-blue

eks-blueprint-blue

eks-blueprint-blue

eks-blueprint-green

eks-blueprint-green

eks-blueprint-green
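The six responses above are split three and three between the two clusters. For a longer sampling run, counting by cluster name is easier than reading the raw list; the following is a minimal sketch with a hypothetical tally_clusters helper that reads one cluster name per line from standard input.

```shell
# Hypothetical helper: count how often each cluster name appears on stdin
# and print the result as "name: count".
tally_clusters() {
  sort | uniq -c | awk '{print $2 ": " $1}'
}

# The same six responses as above, fed in by hand for illustration.
printf '%s\n' \
  eks-blueprint-blue eks-blueprint-blue eks-blueprint-blue \
  eks-blueprint-green eks-blueprint-green eks-blueprint-green \
  | tally_clusters
```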
