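This post is part of my 2026 Kubernetes deployment notes.

Deploying Kubernetes with Kubespray

Kubespray variable precedence

Kubespray runs on Ansible, and most of its configuration is controlled through variables.

Its behavior therefore follows Ansible's variable precedence rules. Ansible defines a precedence for each location where a variable can be declared, and a value from a higher-numbered level overrides one from a lower level.

According to the official Ansible documentation, variable precedence follows these principles.

- A lower-precedence variable can always be overridden by a higher-precedence one.

- Extra vars (-e) always have the highest precedence.

If a variable with the same name is defined in several places, the value defined at the highest precedence level is the one that takes effect.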

(1) Role defaults (lowest precedence)

roles/*/defaults/main.yml

- Default values shipped with Kubespray

- Applied when the user does not configure anything else

- Lowest precedence, so they are the easiest values to override

(2) Inventory group_vars / host_vars (where you actually configure things)

inventory/mycluster/group_vars/all/all.yml

inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

inventory/mycluster/host_vars/k8s-node1.yml

- The area that a Kubespray user edits

- The main way to override role defaults

- Allows control per cluster, per group, and per node

(3) Play vars / vars inside a playbook (forced settings)

- name: Install etcd
  vars:
    etcd_cluster_setup: false
    etcd_events_cluster_setup: false
  import_playbook: install_etcd.yml

- The vars: block defines play vars

- Play vars take precedence over inventory variables

- Even if the user changes the value in the inventory, it is ignored inside this playbook

(4) Extra vars (-e)

ansible-playbook cluster.yml -e etcd_cluster_setup=true

- Highest precedence

- Used for debugging, forced overrides, and temporary tests
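The four levels above can be sketched as a simple ordered merge. This is a minimal model, assuming only the four levels discussed here (real Ansible defines many more), and the variable values are illustrative rather than taken from Kubespray's actual defaults.

```python
# Simplified model of Ansible variable precedence.
# Only the four levels discussed above are modeled; real Ansible has more.
PRECEDENCE = ["role_defaults", "inventory_vars", "play_vars", "extra_vars"]


def resolve(sources: dict) -> dict:
    """Merge variable sources from lowest to highest precedence."""
    resolved = {}
    for level in PRECEDENCE:
        # A later (higher) level overwrites values from earlier (lower) ones.
        resolved.update(sources.get(level, {}))
    return resolved


# Illustrative values, not Kubespray's real defaults.
sources = {
    "role_defaults": {"etcd_cluster_setup": True},
    "inventory_vars": {"etcd_cluster_setup": True},
    "play_vars": {"etcd_cluster_setup": False},
}

# The play var wins over the inventory value.
print(resolve(sources)["etcd_cluster_setup"])

# Adding an extra var (-e) overrides everything else.
sources["extra_vars"] = {"etcd_cluster_setup": True}
print(resolve(sources)["etcd_cluster_setup"])
```

The point of the model is the ordering: editing the inventory has no effect on a value that a play var or an extra var also sets.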

root@admin-lb:~# cd /root/kubespray/

root@admin-lb:~/kubespray# cat /root/kubespray/inventory/mycluster/inventory.ini

[kube_control_plane]

k8s-node1 ansible_host=192.168.10.11 ip=192.168.10.11 etcd_member_name=etcd1

k8s-node2 ansible_host=192.168.10.12 ip=192.168.10.12 etcd_member_name=etcd2

k8s-node3 ansible_host=192.168.10.13 ip=192.168.10.13 etcd_member_name=etcd3

[etcd:children]

kube_control_plane

[kube_node]

k8s-node4 ansible_host=192.168.10.14 ip=192.168.10.14

#k8s-node5 ansible_host=192.168.10.15 ip=192.168.10.15

Tracing where a variable is used

root@admin-lb:~/kubespray# grep -Rn "allow_unsupported_distribution_setup" inventory/mycluster/ playbooks/ roles/ -A1 -B1

inventory/mycluster/group_vars/all/all.yml-141-## If enabled it will allow kubespray to attempt setup even if the distribution is not supported. For unsupported distributions this can lead to unexpected failures in some cases.

inventory/mycluster/group_vars/all/all.yml:142:allow_unsupported_distribution_setup: false

--

roles/kubernetes/preinstall/tasks/0040-verify-settings.yml-22- assert:

roles/kubernetes/preinstall/tasks/0040-verify-settings.yml:23: that: (allow_unsupported_distribution_setup | default(false)) or ansible_distribution in supported_os_distributions

roles/kubernetes/preinstall/tasks/0040-verify-settings.yml-24- msg: "{{ ansible_distribution }} is not a known OS"

Deploying the configuration

root@admin-lb:~/kubespray# cat /etc/haproxy/haproxy.cfg

#---------------------------------------------------------------------

# Global settings

#---------------------------------------------------------------------

global

log 127.0.0.1 local2

chroot /var/lib/haproxy

pidfile /var/run/haproxy.pid

maxconn 4000

user haproxy

group haproxy

daemon

# turn on stats unix socket

stats socket /var/lib/haproxy/stats

# utilize system-wide crypto-policies

ssl-default-bind-ciphers PROFILE=SYSTEM

ssl-default-server-ciphers PROFILE=SYSTEM

#---------------------------------------------------------------------

# common defaults that all the 'listen' and 'backend' sections will

# use if not designated in their block

#---------------------------------------------------------------------

defaults

mode http

log global

option httplog

option tcplog

option dontlognull

option http-server-close

#option forwardfor except 127.0.0.0/8

option redispatch

retries 3

timeout http-request 10s

timeout queue 1m

timeout connect 10s

timeout client 1m

timeout server 1m

timeout http-keep-alive 10s

timeout check 10s

maxconn 3000

# ---------------------------------------------------------------------

# Kubernetes API Server Load Balancer Configuration

# ---------------------------------------------------------------------

frontend k8s-api

bind *:6443

mode tcp

option tcplog

default_backend k8s-api-backend

backend k8s-api-backend

mode tcp

option tcp-check

option log-health-checks

timeout client 3h

timeout server 3h

balance roundrobin

server k8s-node1 192.168.10.11:6443 check check-ssl verify none inter 10000

server k8s-node2 192.168.10.12:6443 check check-ssl verify none inter 10000

server k8s-node3 192.168.10.13:6443 check check-ssl verify none inter 10000

# ---------------------------------------------------------------------

# HAProxy Stats Dashboard - http://192.168.10.10:9000/haproxy_stats

# ---------------------------------------------------------------------

listen stats

bind *:9000

mode http

stats enable

stats uri /haproxy_stats

stats realm HAProxy\ Statistic

stats admin if TRUE

# ---------------------------------------------------------------------

# Configure the Prometheus exporter - curl http://192.168.10.10:8405/metrics

# ---------------------------------------------------------------------

frontend prometheus

bind *:8405

mode http

http-request use-service prometheus-exporter if { path /metrics }

no log

http://192.168.10.10:9000/haproxy_stats

root@admin-lb:~/kubespray# ansible-inventory -i /root/kubespray/inventory/mycluster/inventory.ini --graph

@all:

|--@ungrouped:

|--@etcd:

| |--@kube_control_plane:

| | |--k8s-node1

| | |--k8s-node2

| | |--k8s-node3

|--@kube_node:

| |--k8s-node4

ANSIBLE_FORCE_COLOR=true ansible-playbook -i inventory/mycluster/inventory.ini -v cluster.yml -e kube_version="1.32.9" | tee kubespray_install.log

...

Sunday 08 February 2026 00:43:34 +0900 (0:00:00.043) 0:05:33.369 *******

===============================================================================

kubernetes/kubeadm : Join to cluster if needed ------------------------- 16.06s

kubernetes/control-plane : Joining control plane node to the cluster. -- 14.54s

download : Download_container | Download image if required ------------- 11.63s

download : Download_container | Download image if required ------------- 11.35s

kubernetes/control-plane : Kubeadm | Initialize first control plane node (1st try) --- 7.24s

download : Download_container | Download image if required -------------- 7.20s

download : Download_file | Download item -------------------------------- 6.75s

download : Download_container | Download image if required -------------- 6.66s

download : Download_container | Download image if required -------------- 6.41s

download : Download_file | Download item -------------------------------- 6.23s

system_packages : Manage packages --------------------------------------- 5.71s

container-engine/containerd : Download_file | Download item ------------- 5.61s

network_plugin/flannel : Flannel | Wait for flannel subnet.env file presence --- 5.49s

etcd : Restart etcd ----------------------------------------------------- 5.41s

download : Download_container | Download image if required -------------- 5.21s

download : Download_container | Download image if required -------------- 5.17s

etcd : Configure | Check if etcd cluster is healthy --------------------- 5.15s

container-engine/crictl : Download_file | Download item ----------------- 4.59s

etcd : Gen_certs | Write etcd member/admin and kube_control_plane client certs to other etcd nodes --- 4.56s

container-engine/runc : Download_file | Download item ------------------- 4.48s

The Ansible run takes roughly 5 to 10 minutes; in this run the recap above reports a total of 0:05:33.

API server

root@admin-lb:~/kubespray# kubectl get nodes -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.spec.podCIDR}{"\n"}{end}'

E0208 01:32:25.680343 15124 memcache.go:265] "Unhandled Error" err="couldn't get current server API group list: Get \"http://localhost:8080/api?timeout=32s\": dial tcp [::1]:8080: connect: connection refused"

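Right after the deployment, set up a kubeconfig so that kubectl also works on the admin-lb node.

The error above appears because no kubeconfig has been configured yet, so kubectl falls back to localhost:8080.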

root@admin-lb:~/kubespray# mkdir /root/.kube

scp k8s-node1:/root/.kube/config /root/.kube/

cat /root/.kube/config | grep server

config 100% 5665 5.4MB/s 00:00

server: https://127.0.0.1:6443

root@admin-lb:~/kubespray# sed -i 's/127.0.0.1/192.168.10.11/g' /root/.kube/config

This replaces the API server endpoint in the control-plane-local kubeconfig with a routable network address so that the external admin node (admin-lb) can use it.
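The sed command above replaces every occurrence of 127.0.0.1 in the file. As a minimal sketch of a narrower rewrite, the function below touches only `server: https://HOST:PORT` lines. The function name `rewrite_server` and the sample text are hypothetical, and bracketed IPv6 hosts are not handled.

```python
import re

# Matches a whole "server: https://HOST[:PORT]" line; only HOST is replaced.
SERVER_LINE = re.compile(
    r"^([ \t]*server:[ \t]*https://)[^:/\s]+(:\d+)?[ \t]*$", re.MULTILINE
)


def rewrite_server(kubeconfig_text: str, new_host: str) -> str:
    """Return the kubeconfig text with the API server host replaced."""
    return SERVER_LINE.sub(
        lambda m: f"{m.group(1)}{new_host}{m.group(2) or ''}", kubeconfig_text
    )


# Hypothetical kubeconfig fragment for illustration.
sample = (
    "clusters:\n"
    "- cluster:\n"
    "    server: https://127.0.0.1:6443\n"
    "  name: cluster.local\n"
    "# note: 127.0.0.1 in a comment is left alone\n"
)

print(rewrite_server(sample, "192.168.10.11"))
```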

root@admin-lb:~/kubespray# kubectl describe node | grep -E 'Name:|Taints'

Name: k8s-node1

Taints: node-role.kubernetes.io/control-plane:NoSchedule

Name: k8s-node2

Taints: node-role.kubernetes.io/control-plane:NoSchedule

Name: k8s-node3

Taints: node-role.kubernetes.io/control-plane:NoSchedule

Name: k8s-node4

Taints: <none>

root@admin-lb:~/kubespray# kubectl get nodes -o jsonpath='{range .items[*]}{.metadata.name}{"\t"}{.spec.podCIDR}{"\n"}{end}'

k8s-node1 10.233.64.0/24

k8s-node2 10.233.65.0/24

k8s-node3 10.233.66.0/24

k8s-node4 10.233.67.0/24

Checking etcd

root@admin-lb:~/kubespray# ssh k8s-node1 etcdctl.sh member list -w table

+------------------+---------+-------+----------------------------+----------------------------+------------+

| ID | STATUS | NAME | PEER ADDRS | CLIENT ADDRS | IS LEARNER |

+------------------+---------+-------+----------------------------+----------------------------+------------+

| 8b0ca30665374b0 | started | etcd3 | https://192.168.10.13:2380 | https://192.168.10.13:2379 | false |

| 2106626b12a4099f | started | etcd2 | https://192.168.10.12:2380 | https://192.168.10.12:2379 | false |

| c6702130d82d740f | started | etcd1 | https://192.168.10.11:2380 | https://192.168.10.11:2379 | false |

+------------------+---------+-------+----------------------------+----------------------------+------------+

root@admin-lb:~/kubespray# for i in {1..3}; do echo ">> k8s-node$i <<"; ssh k8s-node$i etcdctl.sh endpoint status -w table; echo; done

>> k8s-node1 <<

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| 127.0.0.1:2379 | c6702130d82d740f | 3.5.25 | 6.4 MB | true | false | 5 | 11188 | 11188 | |

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

>> k8s-node2 <<

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| 127.0.0.1:2379 | 2106626b12a4099f | 3.5.25 | 6.5 MB | false | false | 5 | 11189 | 11189 | |

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

>> k8s-node3 <<

+----------------+-----------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |

+----------------+-----------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| 127.0.0.1:2379 | 8b0ca30665374b0 | 3.5.25 | 6.4 MB | false | false | 5 | 11189 | 11189 | |

+----------------+-----------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

Setting up shell completion and aliases

source <(kubectl completion bash)

alias k=kubectl

alias kc=kubecolor

complete -F __start_kubectl k

echo 'source <(kubectl completion bash)' >> /etc/profile

echo 'alias k=kubectl' >> /etc/profile

echo 'alias kc=kubecolor' >> /etc/profile

echo 'complete -F __start_kubectl k' >> /etc/profile

Checking the control-plane components

kube-apiserver.yaml

root@admin-lb:~/kubespray# ssh k8s-node1 cat /etc/kubernetes/manifests/kube-apiserver.yaml

spec:

containers:

- command:

- kube-apiserver

- --advertise-address=192.168.10.11

- --allow-privileged=true

- --anonymous-auth=True

- --apiserver-count=3

- --authorization-mode=Node,RBAC

- '--bind-address=::'

- --client-ca-file=/etc/kubernetes/ssl/ca.crt

- --default-not-ready-toleration-seconds=300

- --default-unreachable-toleration-seconds=300

- --enable-admission-plugins=NodeRestriction

- --enable-aggregator-routing=False

- --enable-bootstrap-token-auth=true

- --endpoint-reconciler-type=lease

- --etcd-cafile=/etc/ssl/etcd/ssl/ca.pem

- --etcd-certfile=/etc/ssl/etcd/ssl/node-k8s-node1.pem

- --etcd-compaction-interval=5m0s

- --etcd-keyfile=/etc/ssl/etcd/ssl/node-k8s-node1-key.pem

- --etcd-servers=https://192.168.10.11:2379,https://192.168.10.12:2379,https://192.168.10.13:2379

kube-controller-manager

root@admin-lb:~/kubespray# ssh k8s-node1 cat /etc/kubernetes/manifests/kube-controller-manager.yaml

spec:

containers:

- command:

- kube-controller-manager

- --allocate-node-cidrs=true

- --authentication-kubeconfig=/etc/kubernetes/controller-manager.conf

- --authorization-kubeconfig=/etc/kubernetes/controller-manager.conf

- '--bind-address=::'

- --client-ca-file=/etc/kubernetes/ssl/ca.crt

- --cluster-cidr=10.233.64.0/18

- --cluster-name=cluster.local

- --cluster-signing-cert-file=/etc/kubernetes/ssl/ca.crt

- --cluster-signing-key-file=/etc/kubernetes/ssl/ca.key

- --configure-cloud-routes=false

- --controllers=*,bootstrapsigner,tokencleaner

- --kubeconfig=/etc/kubernetes/controller-manager.conf

- --leader-elect=true

- --leader-elect-lease-duration=15s

- --leader-elect-renew-deadline=10s

- --node-cidr-mask-size-ipv4=24

- --node-monitor-grace-period=40s

- --node-monitor-period=5s

- --profiling=False

- --requestheader-client-ca-file=/etc/kubernetes/ssl/front-proxy-ca.crt

- --root-ca-file=/etc/kubernetes/ssl/ca.crt

- --service-account-private-key-file=/etc/kubernetes/ssl/sa.key

- --service-cluster-ip-range=10.233.0.0/18

- --terminated-pod-gc-threshold=12500

- --use-service-account-credentials=true

kube-scheduler

root@admin-lb:~/kubespray# ssh k8s-node1 cat /etc/kubernetes/manifests/kube-scheduler.yaml

spec:

containers:

- command:

- kube-controller-manager

- --allocate-node-cidrs=true

- --authentication-kubeconfig=/etc/kubernetes/controller-manager.conf

- --authorization-kubeconfig=/etc/kubernetes/controller-manager.conf

- '--bind-address=::'

- --client-ca-file=/etc/kubernetes/ssl/ca.crt

- --cluster-cidr=10.233.64.0/18

- --cluster-name=cluster.local

- --cluster-signing-cert-file=/etc/kubernetes/ssl/ca.crt

- --cluster-signing-key-file=/etc/kubernetes/ssl/ca.key

- --configure-cloud-routes=false

- --controllers=*,bootstrapsigner,tokencleaner

- --kubeconfig=/etc/kubernetes/controller-manager.conf

- --leader-elect=true

- --leader-elect-lease-duration=15s

- --leader-elect-renew-deadline=10s

- --node-cidr-mask-size-ipv4=24

- --node-monitor-grace-period=40s

- --node-monitor-period=5s

- --profiling=False

- --requestheader-client-ca-file=/etc/kubernetes/ssl/front-proxy-ca.crt

- --root-ca-file=/etc/kubernetes/ssl/ca.crt

- --service-account-private-key-file=/etc/kubernetes/ssl/sa.key

- --service-cluster-ip-range=10.233.0.0/18

- --terminated-pod-gc-threshold=12500

- --use-service-account-credentials=true

Checking certificate information

There are three control-plane nodes, but super-admin.conf exists only on node 1.

root@admin-lb:~/kubespray# for i in {1..3}; do echo ">> k8s-node$i <<"; ssh k8s-node$i ls -l /etc/kubernetes/super-admin.conf ; echo; done

>> k8s-node1 <<

-rw-------. 1 root root 5689 Feb 8 00:42 /etc/kubernetes/super-admin.conf

>> k8s-node2 <<

ls: cannot access '/etc/kubernetes/super-admin.conf': No such file or directory

>> k8s-node3 <<

ls: cannot access '/etc/kubernetes/super-admin.conf': No such file or directory

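- k8s-node1

  - /etc/kubernetes/super-admin.conf exists

  - Created with root-only permissions (600)

- k8s-node2, k8s-node3

  - The file does not exist at the same path

/etc/kubernetes/super-admin.conf is a superuser kubeconfig that kubeadm generates on the node where kubeadm init runs (Kubespray drives kubeadm under the hood). Compared with the regular admin.conf, it is intended for bootstrap, automation, and internal recovery rather than day-to-day administration.

Its user belongs to the system:masters group, which bypasses RBAC authorization checks.

In a multi-control-plane cluster, not every administrative kubeconfig ends up on every node. The first control-plane node (primary, first master), where kubeadm init runs, is the reference node, and super-admin.conf is created only there. The kubeconfig files seen on k8s-node1 in this post are:

- /etc/kubernetes/admin.conf

- /etc/kubernetes/super-admin.conf

- /root/.kube/config

Of these, only super-admin.conf is confirmed missing on k8s-node2 and k8s-node3; the check-expiration output in the next section lists admin.conf on all three nodes.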

Checking CoreDNS

root@admin-lb:~/kubespray# for i in {1..3}; do echo ">> k8s-node$i <<"; ssh k8s-node$i kubeadm certs check-expiration ; echo; done

>> k8s-node1 <<

[check-expiration] Reading configuration from the "kubeadm-config" ConfigMap in namespace "kube-system"...

[check-expiration] Use 'kubeadm init phase upload-config --config your-config.yaml' to re-upload it.

W0208 02:19:43.293312 51059 utils.go:69] The recommended value for "clusterDNS" in "KubeletConfiguration" is: [10.233.0.10]; the provided value is: [10.233.0.3]

CERTIFICATE EXPIRES RESIDUAL TIME CERTIFICATE AUTHORITY EXTERNALLY MANAGED

admin.conf Feb 07, 2027 15:42 UTC 364d ca no

apiserver Feb 07, 2027 15:42 UTC 364d ca no

apiserver-kubelet-client Feb 07, 2027 15:42 UTC 364d ca no

controller-manager.conf Feb 07, 2027 15:42 UTC 364d ca no

front-proxy-client Feb 07, 2027 15:42 UTC 364d front-proxy-ca no

scheduler.conf Feb 07, 2027 15:42 UTC 364d ca no

super-admin.conf Feb 07, 2027 15:42 UTC 364d ca no

CERTIFICATE AUTHORITY EXPIRES RESIDUAL TIME EXTERNALLY MANAGED

ca Feb 05, 2036 15:42 UTC 9y no

front-proxy-ca Feb 05, 2036 15:42 UTC 9y no

>> k8s-node2 <<

[check-expiration] Reading configuration from the "kubeadm-config" ConfigMap in namespace "kube-system"...

[check-expiration] Use 'kubeadm init phase upload-config --config your-config.yaml' to re-upload it.

W0208 02:19:43.751491 50100 utils.go:69] The recommended value for "clusterDNS" in "KubeletConfiguration" is: [10.233.0.10]; the provided value is: [10.233.0.3]

CERTIFICATE EXPIRES RESIDUAL TIME CERTIFICATE AUTHORITY EXTERNALLY MANAGED

admin.conf Feb 07, 2027 15:42 UTC 364d ca no

apiserver Feb 07, 2027 15:42 UTC 364d ca no

apiserver-kubelet-client Feb 07, 2027 15:42 UTC 364d ca no

controller-manager.conf Feb 07, 2027 15:42 UTC 364d ca no

front-proxy-client Feb 07, 2027 15:42 UTC 364d front-proxy-ca no

scheduler.conf Feb 07, 2027 15:42 UTC 364d ca no

!MISSING! super-admin.conf

CERTIFICATE AUTHORITY EXPIRES RESIDUAL TIME EXTERNALLY MANAGED

ca Feb 05, 2036 15:42 UTC 9y no

front-proxy-ca Feb 05, 2036 15:42 UTC 9y no

>> k8s-node3 <<

[check-expiration] Reading configuration from the "kubeadm-config" ConfigMap in namespace "kube-system"...

[check-expiration] Use 'kubeadm init phase upload-config --config your-config.yaml' to re-upload it.

W0208 02:19:44.231769 50364 utils.go:69] The recommended value for "clusterDNS" in "KubeletConfiguration" is: [10.233.0.10]; the provided value is: [10.233.0.3]

CERTIFICATE EXPIRES RESIDUAL TIME CERTIFICATE AUTHORITY EXTERNALLY MANAGED

admin.conf Feb 07, 2027 15:42 UTC 364d ca no

apiserver Feb 07, 2027 15:42 UTC 364d ca no

apiserver-kubelet-client Feb 07, 2027 15:42 UTC 364d ca no

controller-manager.conf Feb 07, 2027 15:42 UTC 364d ca no

front-proxy-client Feb 07, 2027 15:42 UTC 364d front-proxy-ca no

scheduler.conf Feb 07, 2027 15:42 UTC 364d ca no

!MISSING! super-admin.conf

CERTIFICATE AUTHORITY EXPIRES RESIDUAL TIME EXTERNALLY MANAGED

ca Feb 05, 2036 15:42 UTC 9y no

front-proxy-ca Feb 05, 2036 15:42 UTC 9y no

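Each control-plane node shares the cluster-wide configuration, and the certificates are all issued under the same CA hierarchy.

The issued certificates have the following properties.

- Expiration: 2027-02-07

- Residual time: about 364 days

- CA: ca or front-proxy-ca

- Externally managed: no

The !MISSING! super-admin.conf message on nodes 2 and 3 indicates that node 1 is the primary node.

kubeadm recommends the tenth address of the Service CIDR as clusterDNS (10.233.0.10 here), but the kubelet configuration currently uses 10.233.0.3. Kubespray sets the CoreDNS Service IP itself, and .3 is the address this cluster uses.

This looks like a deliberate addressing choice rather than a misconfiguration, presumably kept for compatibility with existing clusters and continuity with earlier kube-dns layouts. The warning is harmless as long as the kubelet clusterDNS value matches the CoreDNS Service IP.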
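To make the ".3 versus .10" difference concrete, here is a minimal sketch that indexes into the Service CIDR with Python's standard `ipaddress` module. The helper name `nth_address` is hypothetical; the CIDR and the addresses come from the outputs in this post.

```python
import ipaddress

# Service CIDR from the kube-apiserver --service-cluster-ip-range flag.
service_cidr = ipaddress.ip_network("10.233.0.0/18")


def nth_address(network, n: int):
    """Return the address at offset n from the network address."""
    return network[n]


print(nth_address(service_cidr, 1))   # offset 1: the kubernetes Service ClusterIP
print(nth_address(service_cidr, 3))   # offset 3: the CoreDNS Service in this cluster
print(nth_address(service_cidr, 10))  # offset 10: the value kubeadm recommends
```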

root@admin-lb:~/kubespray# kubectl get svc -n kube-system coredns

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

coredns ClusterIP 10.233.0.3 <none> 53/UDP,53/TCP,9153/TCP 102m

root@admin-lb:~/kubespray# kubectl get cm -n kube-system kubelet-config -o yaml | grep clusterDNS -A2

clusterDNS:

- 10.233.0.3

clusterDomain: cluster.local

coredns is the Service that provides DNS inside the Kubernetes cluster. Its type is ClusterIP, so it has a virtual IP that is reachable only from inside the cluster, and that ClusterIP is 10.233.0.3.

Ports 53/UDP and 53/TCP serve ordinary DNS queries, and 9153/TCP exposes Prometheus metrics, which shows that CoreDNS acts as both a DNS server and a monitored component.

Checking the ClusterIP

root@admin-lb:~/kubespray# kubectl exec -it -n kube-system nginx-proxy-k8s-node4 -- cat /etc/resolv.conf

search kube-system.svc.cluster.local svc.cluster.local cluster.local default.svc.cluster.local

nameserver 10.233.0.3

options ndots:5

In this cluster CoreDNS is served at 10.233.0.3.

Every DNS query from this nginx pod is sent to 10.233.0.3, which is the ClusterIP of the CoreDNS Service.
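The search and ndots lines in that resolv.conf decide which names are actually sent to 10.233.0.3. The sketch below models the usual resolver rule (names with fewer dots than ndots try the search domains first); it is a simplified illustration, and the function name `candidate_queries` is hypothetical.

```python
def candidate_queries(name: str, search: list, ndots: int) -> list:
    """Return the fully qualified names a resolver would try, in order."""
    if name.endswith("."):
        # A trailing dot marks the name as absolute: no search expansion.
        return [name]
    expanded = [f"{name}.{domain}." for domain in search]
    absolute = name + "."
    if name.count(".") >= ndots:
        # Enough dots: try the name as given first, then the search list.
        return [absolute] + expanded
    # Too few dots: walk the search list first, then the name as given.
    return expanded + [absolute]


# Values from the nginx-proxy pod's /etc/resolv.conf shown above.
pod_search = [
    "kube-system.svc.cluster.local",
    "svc.cluster.local",
    "cluster.local",
    "default.svc.cluster.local",
]

for query in candidate_queries("kubernetes.default", pod_search, ndots=5):
    print(query)
```

With ndots:5, a short name such as kubernetes.default goes through the search list before being tried as an absolute name, so the second candidate is the one that matches the Service.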

Comparing DNS settings on a joined node and a non-joined node

root@admin-lb:~/kubespray# ssh k8s-node1 cat /etc/resolv.conf

# Generated by NetworkManager

search default.svc.cluster.local svc.cluster.local

nameserver 10.233.0.3

nameserver 168.126.63.1

nameserver 168.126.63.2

options ndots:2 timeout:2 attempts:2

root@admin-lb:~/kubespray# ssh k8s-node5 cat /etc/resolv.conf

# Generated by NetworkManager

search lan

nameserver 168.126.63.1

nameserver 168.126.63.2

Node 5, which has not joined the cluster, keeps its default DNS settings.

Checking the kubeadm-config ConfigMap

Control Plane Endpoint

controlPlaneEndpoint: 192.168.10.11:6443

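This item is the kube-apiserver entry point used when reaching the cluster from outside.

- Current value: 192.168.10.11:6443

- Used as the LB VIP or a representative control-plane node address; here it is the address of k8s-node1

- It matches exactly the address written into the server: field of the kubeconfig on admin-lb earlier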

API Server certificate SAN configuration

This is the list of names and addresses for which the kube-apiserver TLS certificate is valid.

- Service DNS name: kubernetes.default.svc.cluster.local

- Service ClusterIP: 10.233.0.1

- Local access: 127.0.0.1, ::1

- Control-plane node names: k8s-node1~3

- LB DNS / IP

  - lb-apiserver.kubernetes.local

  - 192.168.10.11~13

Thanks to this configuration, access from inside pods, from control-plane nodes, from admin-lb, and through the LB all works without TLS errors.

Key API server extraArgs

apiserver-count: "3"

authorization-mode: Node,RBAC

bind-address: '::'

service-cluster-ip-range: 10.233.0.0/18

- apiserver-count: 3 : declares that there are three control-plane nodes

- authorization-mode: Node,RBAC : the standard Kubernetes authorization model

- bind-address: '::' : listens on all interfaces, covering both IPv4 and IPv6

- service-cluster-ip-range

  - Service CIDR = 10.233.0.0/18

  - The CoreDNS Service IP (10.233.0.3) falls within this range

Networking settings

networking:
  dnsDomain: cluster.local
  podSubnet: 10.233.64.0/18
  serviceSubnet: 10.233.0.0/18

- Service network: 10.233.0.0/18

- Pod network: 10.233.64.0/18

- DNS domain: cluster.local

The findings so far can be summarized as follows.

- CoreDNS Service IP = 10.233.0.3

- kubelet clusterDNS = 10.233.0.3

- Pod /etc/resolv.conf nameserver = 10.233.0.3
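The relationships between these ranges can be checked with a few lines of arithmetic. This is a minimal sketch using the standard `ipaddress` module; the /24 per-node size is taken from the --node-cidr-mask-size-ipv4=24 flag shown in the kube-controller-manager manifest.

```python
import ipaddress

service_subnet = ipaddress.ip_network("10.233.0.0/18")
pod_subnet = ipaddress.ip_network("10.233.64.0/18")
coredns_ip = ipaddress.ip_address("10.233.0.3")

# The CoreDNS Service IP must come from the Service network.
print(coredns_ip in service_subnet)

# Service and Pod networks must not overlap.
print(service_subnet.overlaps(pod_subnet))

# Each node receives one /24 slice of the Pod network.
node_cidrs = list(pod_subnet.subnets(new_prefix=24))
print(len(node_cidrs))
print([str(cidr) for cidr in node_cidrs[:4]])
```

The first four slices line up with the podCIDR values that kubectl reported for k8s-node1 through k8s-node4, and the slice count gives the upper bound on nodes for this Pod network.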

DNS section: what disabled: true means

dns:
  disabled: true

This setting disables kubeadm's built-in DNS installation.

kubeadm's own CoreDNS addon is not used; instead Kubespray deploys CoreDNS separately from its own manifests and manages the DNS lifecycle itself.

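etcd configuration (external etcd)

etcd:
  external:
    endpoints:
    - https://192.168.10.11:2379
    - https://192.168.10.12:2379
    - https://192.168.10.13:2379

etcd does not run as a kubeadm-managed static pod on the control plane. From kubeadm's point of view it is an external three-member HA etcd cluster, accessed with TLS certificates.

This keeps the control-plane and etcd roles clearly separated, even though in this inventory the etcd members run on the same three hosts as the control plane.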
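For a three-member etcd cluster, the quorum arithmetic is worth spelling out. A minimal sketch of the standard majority rule (the function names are my own):

```python
def quorum(members: int) -> int:
    """Smallest majority of a cluster with the given member count."""
    return members // 2 + 1


def fault_tolerance(members: int) -> int:
    """How many members can fail while a majority still remains."""
    return members - quorum(members)


for size in (1, 3, 5):
    print(size, quorum(size), fault_tolerance(size))
```

With the three members listed above, two must stay healthy, so the cluster tolerates the loss of one etcd member.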

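Certificate validity period settings

certificateValidityPeriod: 8760h0m0s

caCertificateValidityPeriod: 87600h0m0s

These values match the kubeadm certs check-expiration output, so the certificate lifetime is consistent from the configuration through to the actual state.

- Leaf certificates: about 1 year

- CA certificates: about 10 years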
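The two durations can be converted into expiry dates to cross-check the check-expiration output. This sketch assumes the certificates were issued at 2026-02-07 15:42 UTC, which is inferred from the expiry timestamps and the file time shown earlier, not read from the certificates themselves.

```python
from datetime import datetime, timedelta, timezone

# Assumed issue time, inferred from the outputs in this post.
issued = datetime(2026, 2, 7, 15, 42, tzinfo=timezone.utc)

leaf_validity = timedelta(hours=8760)   # certificateValidityPeriod
ca_validity = timedelta(hours=87600)    # caCertificateValidityPeriod

print(leaf_validity.days, ca_validity.days)
print((issued + leaf_validity).date())
print((issued + ca_validity).date())
```

Because 87600 hours is exactly 3650 days, the two leap days in the period put the CA expiry on February 5 rather than February 7, which is what the CA rows in the check-expiration output show.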

K8S API 엔드포인트

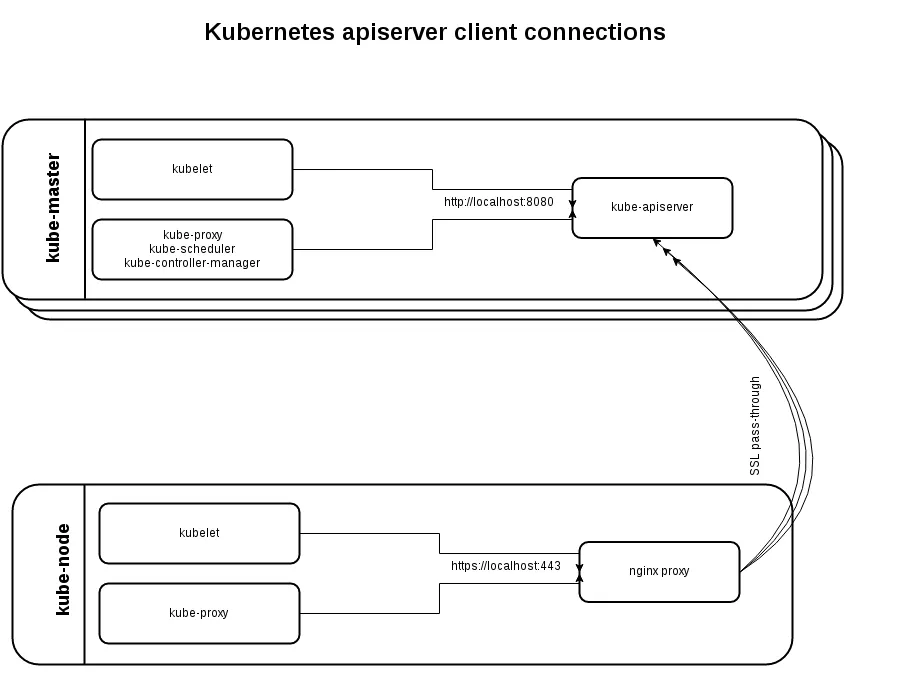

Worker 노드에서 Kubernetes API에 접근하는 실제 경로와 Kubespray의 Client-Side Load Balancing 구조를 알아본다.

워커노드 정보 확인

root@admin-lb:~/kubespray# ssh k8s-node4 crictl ps

CONTAINER IMAGE CREATED STATE NAME ATTEMPT POD ID POD NAMESPACE

468e938c98d5c bc6c1e09a843d 2 hours ago Running metrics-server 0 09ff933093700 metrics-server-65fdf69dcb-jnvbd kube-system

1b68d0c87c769 2f6c962e7b831 2 hours ago Running coredns 0 b0027b8776163 coredns-664b99d7c7-5drtx kube-system

37d48e61e4df1 cadcae92e6360 2 hours ago Running kube-flannel 0 c8cdc9b9aff8e kube-flannel-ds-arm64-wkwrg kube-system

8117e271262f3 72b57ec14d31e 2 hours ago Running kube-proxy 0 c02b63ef148dc kube-proxy-bjqdx kube-system

6a22218ae0325 5a91d90f47ddf 2 hours ago Running nginx-proxy 0 83316bf2859bd nginx-proxy-k8s-node4 kube-system

nginx configuration

root@admin-lb:~/kubespray# ssh k8s-node4 cat /etc/nginx/nginx.conf

error_log stderr notice;

worker_processes 2;

worker_rlimit_nofile 130048;

worker_shutdown_timeout 10s;

events {

multi_accept on;

use epoll;

worker_connections 16384;

}

stream {

upstream kube_apiserver {

least_conn;

server 192.168.10.11:6443;

server 192.168.10.12:6443;

server 192.168.10.13:6443;

}

server {

listen 127.0.0.1:6443;

proxy_pass kube_apiserver;

proxy_timeout 10m;

proxy_connect_timeout 1s;

}

}

http {

aio threads;

aio_write on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout 5m;

keepalive_requests 100;

reset_timedout_connection on;

server_tokens off;

autoindex off;

server {

listen 8081;

location /healthz {

access_log off;

return 200;

}

location /stub_status {

stub_status on;

access_log off;

}

}

}

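nginx-proxy is neither a Deployment nor a DaemonSet. It is a static pod that the kubelet creates and manages directly by watching the manifests under /etc/kubernetes/manifests.

With this nginx configuration, components on the worker node always talk to localhost only, and nginx is responsible for distributing requests across the control-plane nodes.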

kubelet / kube-proxy

↓

https://127.0.0.1:6443

↓

nginx-proxy (local)

↓

192.168.10.11~13:6443 (control-plane)
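The least_conn directive in the upstream block picks the backend with the fewest active connections. The sketch below is a deliberately simplified model of that idea (no weights, no health state, ties broken by listing order), not nginx's actual implementation; the function name is hypothetical.

```python
def pick_least_conn(active: dict) -> str:
    """Return the upstream with the fewest active connections.

    Ties go to the upstream listed first (dicts keep insertion order).
    """
    return min(active, key=active.get)


# Upstreams from the rendered nginx.conf, all idle at the start.
active = {
    "192.168.10.11:6443": 0,
    "192.168.10.12:6443": 0,
    "192.168.10.13:6443": 0,
}

chosen = []
for _ in range(4):
    target = pick_least_conn(active)
    active[target] += 1  # the new long-lived connection stays open
    chosen.append(target)

print(chosen)
print(active)
```

Since kubelet and kube-proxy hold long-lived connections to the API server, balancing on open connections spreads them more evenly than plain round robin would.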

The nginx configuration is generated automatically for each node from a Jinja2 template.

root@admin-lb:~/kubespray# tree roles/kubernetes/node/tasks/loadbalancer

roles/kubernetes/node/tasks/loadbalancer

├── haproxy.yml

├── kube-vip.yml

└── nginx-proxy.yml

1 directory, 3 files

root@admin-lb:~/kubespray# cat roles/kubernetes/node/tasks/loadbalancer/nginx-proxy.yml

---

- name: Haproxy | Cleanup potentially deployed haproxy

file:

path: "{{ kube_manifest_dir }}/haproxy.yml"

state: absent

- name: Nginx-proxy | Make nginx directory

file:

path: "{{ nginx_config_dir }}"

state: directory

mode: "0700"

owner: root

- name: Nginx-proxy | Write nginx-proxy configuration

template:

src: "loadbalancer/nginx.conf.j2"

dest: "{{ nginx_config_dir }}/nginx.conf"

owner: root

mode: "0755"

backup: true

- name: Nginx-proxy | Get checksum from config

stat:

path: "{{ nginx_config_dir }}/nginx.conf"

get_attributes: false

get_checksum: true

get_mime: false

register: nginx_stat

- name: Nginx-proxy | Write static pod

template:

src: manifests/nginx-proxy.manifest.j2

dest: "{{ kube_manifest_dir }}/nginx-proxy.yml"

mode: "0640"

root@admin-lb:~/kubespray# cat roles/kubernetes/node/templates/loadbalancer/nginx.conf.j2

error_log stderr notice;

worker_processes 2;

worker_rlimit_nofile 130048;

worker_shutdown_timeout 10s;

events {

multi_accept on;

use epoll;

worker_connections 16384;

}

stream {

upstream kube_apiserver {

least_conn;

{% for host in groups['kube_control_plane'] -%}

server {{ hostvars[host]['main_access_ip'] | ansible.utils.ipwrap }}:{{ kube_apiserver_port }};

{% endfor -%}

}

server {

listen 127.0.0.1:{{ loadbalancer_apiserver_port|default(kube_apiserver_port) }};

{% if ipv6_stack -%}

listen [::1]:{{ loadbalancer_apiserver_port|default(kube_apiserver_port) }};

{% endif -%}

proxy_pass kube_apiserver;

proxy_timeout 10m;

proxy_connect_timeout 1s;

}

}

http {

aio threads;

aio_write on;

tcp_nopush on;

tcp_nodelay on;

keepalive_timeout {{ loadbalancer_apiserver_keepalive_timeout }};

keepalive_requests 100;

reset_timedout_connection on;

server_tokens off;

autoindex off;

{% if loadbalancer_apiserver_healthcheck_port is defined -%}

server {

listen {{ loadbalancer_apiserver_healthcheck_port }};

{% if ipv6_stack -%}

listen [::]:{{ loadbalancer_apiserver_healthcheck_port }};

{% endif -%}

location /healthz {

access_log off;

return 200;

}

location /stub_status {

stub_status on;

access_log off;

}

}

{% endif %}

}

root@admin-lb:~/kubespray# cat roles/kubernetes/node/templates/manifests/nginx-proxy.manifest.j2

apiVersion: v1

kind: Pod

metadata:

name: {{ loadbalancer_apiserver_pod_name }}

namespace: kube-system

labels:

addonmanager.kubernetes.io/mode: Reconcile

k8s-app: kube-nginx

annotations:

nginx-cfg-checksum: "{{ nginx_stat.stat.checksum }}"

spec:

hostNetwork: true

dnsPolicy: ClusterFirstWithHostNet

nodeSelector:

kubernetes.io/os: linux

priorityClassName: system-node-critical

containers:

- name: nginx-proxy

image: {{ nginx_image_repo }}:{{ nginx_image_tag }}

imagePullPolicy: {{ k8s_image_pull_policy }}

resources:

requests:

cpu: {{ loadbalancer_apiserver_cpu_requests }}

memory: {{ loadbalancer_apiserver_memory_requests }}

{% if loadbalancer_apiserver_healthcheck_port is defined -%}

livenessProbe:

httpGet:

path: /healthz

port: {{ loadbalancer_apiserver_healthcheck_port }}

readinessProbe:

httpGet:

path: /healthz

port: {{ loadbalancer_apiserver_healthcheck_port }}

{% endif -%}

volumeMounts:

- mountPath: /etc/nginx

name: etc-nginx

readOnly: true

volumes:

- name: etc-nginx

hostPath:

path: {{ nginx_config_dir }}

Verifying API calls

root@admin-lb:~/kubespray# ssh k8s-node4 curl -s localhost:8081/healthz -I

HTTP/1.1 200 OK

Server: nginx

Date: Sat, 07 Feb 2026 18:12:23 GMT

Content-Type: text/plain

Content-Length: 0

Connection: keep-alive

root@admin-lb:~/kubespray# ssh k8s-node4 curl -sk https://127.0.0.1:6443/version | grep Version

"gitVersion": "v1.32.9",

"goVersion": "go1.23.12",

root@admin-lb:~/kubespray# ssh k8s-node4 ss -tnlp | grep nginx

LISTEN 0 511 0.0.0.0:8081 0.0.0.0:* users:(("nginx",pid=17561,fd=6),("nginx",pid=17560,fd=6),("nginx",pid=17532,fd=6))

LISTEN 0 511 127.0.0.1:6443 0.0.0.0:* users:(("nginx",pid=17561,fd=5),("nginx",pid=17560,fd=5),("nginx",pid=17532,fd=5))

The request is sent to localhost (127.0.0.1) on the worker node, but the response actually comes from a control-plane API server.

This confirms that client-side load balancing through nginx-proxy is working correctly.

Endpoint used by the kubelet

root@admin-lb:~/kubespray# ssh k8s-node4 cat /etc/kubernetes/kubelet.conf | grep server

server: https://localhost:6443

kube-proxy configuration

root@admin-lb:~/kubespray# kubectl get cm -n kube-system kube-proxy -o yaml | grep 'kubeconfig.conf:' -A18

kubeconfig.conf: |-

apiVersion: v1

kind: Config

clusters:

- cluster:

certificate-authority: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

server: https://127.0.0.1:6443

name: default

contexts:

- context:

cluster: default

namespace: default

user: default

name: default

current-context: default

users:

- name: default

user:

tokenFile: /var/run/secrets/kubernetes.io/serviceaccount/token

Resolving the alert in the nginx log

root@admin-lb:~/kubespray# kubectl logs -n kube-system nginx-proxy-k8s-node4

/docker-entrypoint.sh: /docker-entrypoint.d/ is not empty, will attempt to perform configuration

/docker-entrypoint.sh: Looking for shell scripts in /docker-entrypoint.d/

/docker-entrypoint.sh: Launching /docker-entrypoint.d/10-listen-on-ipv6-by-default.sh

10-listen-on-ipv6-by-default.sh: info: /etc/nginx/conf.d/default.conf is not a file or does not exist

/docker-entrypoint.sh: Sourcing /docker-entrypoint.d/15-local-resolvers.envsh

/docker-entrypoint.sh: Launching /docker-entrypoint.d/20-envsubst-on-templates.sh

/docker-entrypoint.sh: Launching /docker-entrypoint.d/30-tune-worker-processes.sh

/docker-entrypoint.sh: Configuration complete; ready for start up

2026/02/07 15:42:53 [notice] 1#1: using the "epoll" event method

2026/02/07 15:42:53 [notice] 1#1: nginx/1.28.0

2026/02/07 15:42:53 [notice] 1#1: built by gcc 14.2.0 (Alpine 14.2.0)

2026/02/07 15:42:53 [notice] 1#1: OS: Linux 6.12.0-55.39.1.el10_0.aarch64

2026/02/07 15:42:53 [notice] 1#1: getrlimit(RLIMIT_NOFILE): 65535:65535

2026/02/07 15:42:53 [notice] 1#1: start worker processes

2026/02/07 15:42:53 [notice] 1#1: start worker process 20

2026/02/07 15:42:53 [notice] 1#1: start worker process 21

2026/02/07 15:42:53 [alert] 20#20: setrlimit(RLIMIT_NOFILE, 130048) failed (1: Operation not permitted)

2026/02/07 15:42:53 [alert] 21#21: setrlimit(RLIMIT_NOFILE, 130048) failed (1: Operation not permitted)

2026/02/07 15:43:33 [error] 20#20: *37 recv() failed (104: Connection reset by peer) while proxying and reading from upstream, client: 127.0.0.1, server: 127.0.0.1:6443, upstream: "192.168.10.12:6443", bytes from/to client:1489/0, bytes from/to upstream:0/1489

2026/02/07 15:43:33 [error] 21#21: *31 recv() failed (104: Connection reset by peer) while proxying and reading from upstream, client: 127.0.0.1, server: 127.0.0.1:6443, upstream: "192.168.10.12:6443", bytes from/to client:1489/0, bytes from/to upstream:0/1489

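The nginx configuration tried to raise the file descriptor limit to 130048, but the container runtime environment did not permit it, so the call failed. Raising a limit above the current hard limit (65535 here) requires the CAP_SYS_RESOURCE capability, which the container does not have.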

Checking the containerd configuration on node 4

root@admin-lb:~/kubespray# ssh k8s-node4 cat /etc/containerd/cri-base.json | jq | grep rlimits -A 6
    "rlimits": [
      {
        "type": "RLIMIT_NOFILE",
        "hard": 65535,
        "soft": 65535
      }
    ],

Because containerd already pins RLIMIT_NOFILE to 65535 at the OCI runtime spec level, the nginx setting (worker_rlimit_nofile 130048) is inevitably rejected at the runtime level.

root@admin-lb:~/kubespray# ssh k8s-node4 crictl inspect --name nginx-proxy | grep rlimits -A6
        "rlimits": [
          {
            "hard": 65535,
            "soft": 65535,
            "type": "RLIMIT_NOFILE"
          }
        ],
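
The spec shows what the runtime was asked to apply. To see what a running process actually received, the kernel exposes the effective limits in /proc/<pid>/limits. The following is a minimal sketch, not part of the original procedure: the helper name nofile_limits is hypothetical, and the sample text only mimics the layout of that file using the values seen above.

```shell
#!/bin/sh
# nofile_limits: read a /proc/<pid>/limits dump on stdin and
# print "soft hard" for the "Max open files" row.
nofile_limits() {
  awk '/^Max open files/ { print $4, $5 }'
}

# Sample input in the /proc/<pid>/limits layout (values taken from the spec above).
sample='Limit                     Soft Limit           Hard Limit           Units
Max open files            65535                65535                files'

printf '%s\n' "$sample" | nofile_limits
```

On the node itself the same helper could be fed with `nofile_limits < /proc/<pid>/limits`, where `<pid>` is the PID of the nginx master process.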

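Kubespray uses containerd_base_runtime_spec_rlimit_nofile: 65535 as the default when it installs containerd.

This default guards against node resource exhaustion from excessive FD usage while still being sufficient for most workloads.

For a connection-heavy infrastructure component such as nginx-proxy, however, the limit can conflict with the nginx configuration.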

Modifying the OCI spec and running the Ansible playbook

root@admin-lb:~/kubespray# cat << EOF >> inventory/mycluster/group_vars/all/containerd.yml
containerd_default_base_runtime_spec_patch:
  process:
    rlimits: []
EOF

grep "^[^#]" inventory/mycluster/group_vars/all/containerd.yml
---
containerd_default_base_runtime_spec_patch:
  process:
    rlimits: []

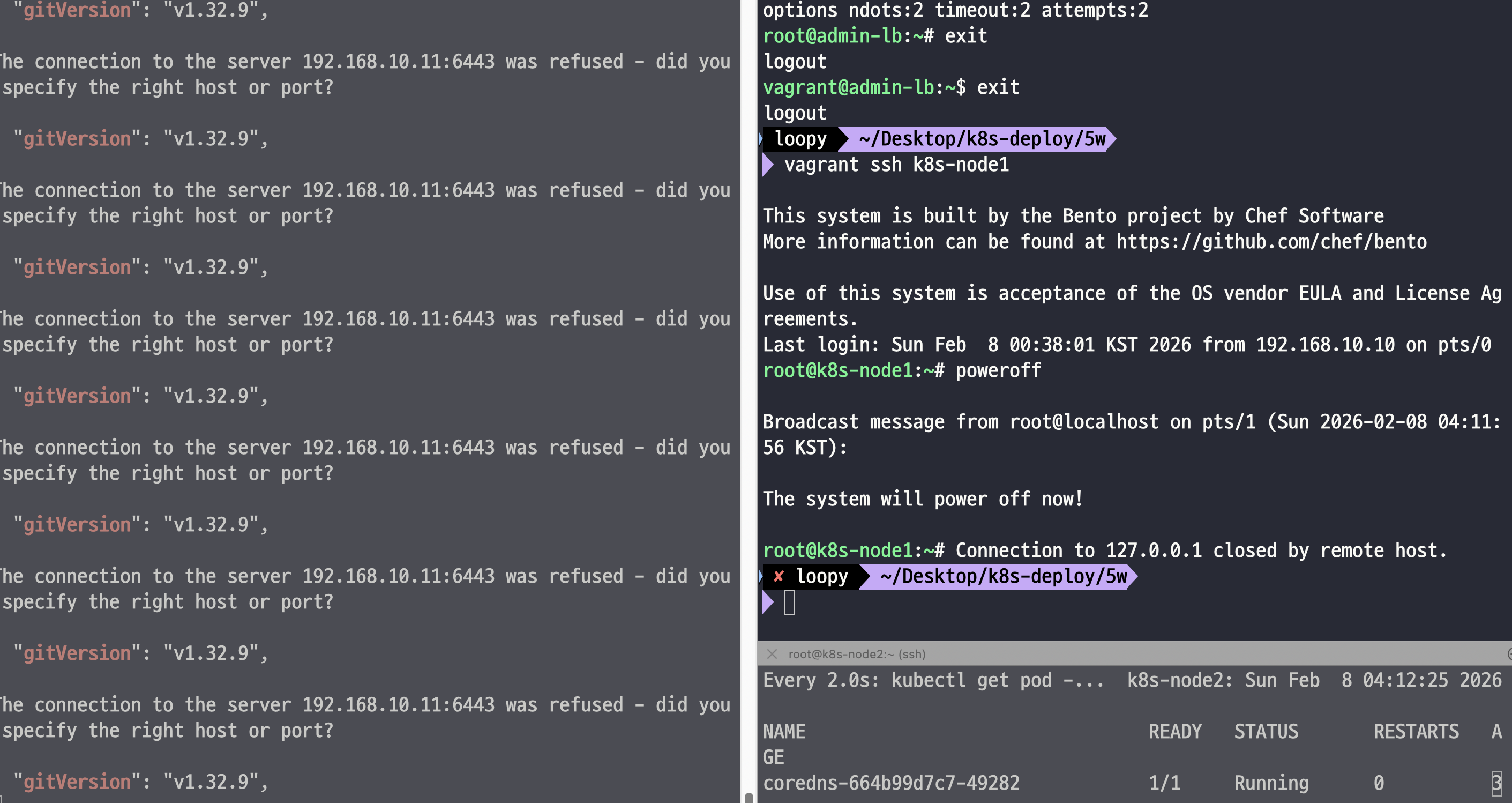

root@k8s-node4:~# journalctl -u containerd.service -f

ansible-playbook -i inventory/mycluster/inventory.ini -v cluster.yml --tags "containerd" --limit k8s-node4 -e kube_version="1.32.9"

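If you watch the containerd service on node 4 while the playbook runs, you can see the service restart.

When containerd restarts, every pod scheduled on that node can be temporarily affected, for example:

- NotReady

- ContainerCreating

- CrashLoopBackOff (in some cases)

- static pods (nginx-proxy and the like) are recreated immediately by the kubelet

So before doing this for real, check the playbook's impact in a beta environment first, then cordon and drain the target node:

kubectl cordon k8s-node4

kubectl drain k8s-node4 --ignore-daemonsets --delete-emptydir-data

Isolating the pods and draining the node before the work is the safe way to proceed.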
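
The order of operations can be sketched as a small wrapper. This is only an illustration under stated assumptions: NODE, DRY_RUN, run, and maintain_node are hypothetical names, the final uncordon step is an addition that the text above does not show, and the script defaults to printing the commands so that nothing touches a cluster by accident.

```shell
#!/bin/sh
# Sketch of a node maintenance wrapper (hypothetical helper, not part of Kubespray).
set -eu

NODE="${NODE:-k8s-node4}"
DRY_RUN="${DRY_RUN:-1}"   # 1 = only print the commands, 0 = execute them

# run: print the command in dry-run mode, otherwise execute it.
run() {
  if [ "$DRY_RUN" = "1" ]; then
    printf '+ %s\n' "$*"
  else
    "$@"
  fi
}

maintain_node() {
  run kubectl cordon "$NODE"
  run kubectl drain "$NODE" --ignore-daemonsets --delete-emptydir-data
  run ansible-playbook -i inventory/mycluster/inventory.ini -v cluster.yml \
    --tags containerd --limit "$NODE" -e kube_version=1.32.9
  # Assumption: the node should accept pods again once the playbook succeeds.
  run kubectl uncordon "$NODE"
}

maintain_node
```

Because of `set -e`, a failing drain or playbook run stops the script before the node is uncordoned, which keeps a half-finished node out of scheduling.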

After running the playbook

root@admin-lb:~/kubespray# ssh k8s-node4 cat /etc/containerd/cri-base.json | jq | grep rlimits
    "rlimits": [],

root@admin-lb:~/kubespray# ssh k8s-node4 crictl inspect --name nginx-proxy | grep rlimits -A6
        "rlimits": [
          {
            "hard": 65535,
            "soft": 65535,
            "type": "RLIMIT_NOFILE"
          }
        ],

cri-base.json is the base runtime spec applied to containers created from now on. The nginx-proxy pod that was already running keeps its existing runtime spec, and restarting containerd alone does not change it.

root@k8s-node4:~# crictl pods --namespace kube-system --name 'nginx-proxy-*' -q | xargs crictl rmp -f

Stopped sandbox 83316bf2859bd6177593dd4b04a662af5f14891602c4af42b887199638229e66

Removed sandbox 83316bf2859bd6177593dd4b04a662af5f14891602c4af42b887199638229e66

nginx-proxy is a static pod, and the kubelet watches /etc/kubernetes/manifests,

so deleting the pod makes the kubelet recreate it immediately, this time with the new OCI runtime spec applied.

root@admin-lb:~/kubespray# ssh k8s-node4 crictl inspect --name nginx-proxy | grep rlimits -A6

root@admin-lb:~/kubespray# kubectl logs -n kube-system nginx-proxy-k8s-node4 -f

/docker-entrypoint.sh: /docker-entrypoint.d/ is not empty, will attempt to perform configuration

/docker-entrypoint.sh: Looking for shell scripts in /docker-entrypoint.d/

/docker-entrypoint.sh: Launching /docker-entrypoint.d/10-listen-on-ipv6-by-default.sh

10-listen-on-ipv6-by-default.sh: info: /etc/nginx/conf.d/default.conf is not a file or does not exist

/docker-entrypoint.sh: Sourcing /docker-entrypoint.d/15-local-resolvers.envsh

/docker-entrypoint.sh: Launching /docker-entrypoint.d/20-envsubst-on-templates.sh

/docker-entrypoint.sh: Launching /docker-entrypoint.d/30-tune-worker-processes.sh

/docker-entrypoint.sh: Configuration complete; ready for start up

2026/02/07 18:30:26 [notice] 1#1: using the "epoll" event method

2026/02/07 18:30:26 [notice] 1#1: nginx/1.28.0

2026/02/07 18:30:26 [notice] 1#1: built by gcc 14.2.0 (Alpine 14.2.0)

2026/02/07 18:30:26 [notice] 1#1: OS: Linux 6.12.0-55.39.1.el10_0.aarch64

2026/02/07 18:30:26 [notice] 1#1: getrlimit(RLIMIT_NOFILE): 1048576:1048576

2026/02/07 18:30:26 [notice] 1#1: start worker processes

2026/02/07 18:30:26 [notice] 1#1: start worker process 20

2026/02/07 18:30:26 [notice] 1#1: start worker process 21

By removing the RLIMIT_NOFILE setting from the containerd base runtime spec and then restarting the nginx-proxy static pod, nginx now inherits the host ulimit as-is and runs without the alert.

Control plane nodes -> Kubernetes API endpoint

Installing kube-ops-view

root@admin-lb:~/kubespray# helm repo add geek-cookbook https://geek-cookbook.github.io/charts/

"geek-cookbook" has been added to your repositories

root@admin-lb:~/kubespray# helm install kube-ops-view geek-cookbook/kube-ops-view --version 1.2.2 \
  --set service.main.type=NodePort,service.main.ports.http.nodePort=30000 \
  --set env.TZ="Asia/Seoul" --namespace kube-system \
  --set image.repository="abihf/kube-ops-view" --set image.tag="latest"

NAME: kube-ops-view

LAST DEPLOYED: Sun Feb 8 03:40:35 2026

NAMESPACE: kube-system

STATUS: deployed

REVISION: 1

TEST SUITE: None

NOTES:

1. Get the application URL by running these commands:

export NODE_PORT=$(kubectl get --namespace kube-system -o jsonpath="{.spec.ports[0].nodePort}" services kube-ops-view)

export NODE_IP=$(kubectl get nodes --namespace kube-system -o jsonpath="{.items[0].status.addresses[0].address}")

echo http://$NODE_IP:$NODE_PORT

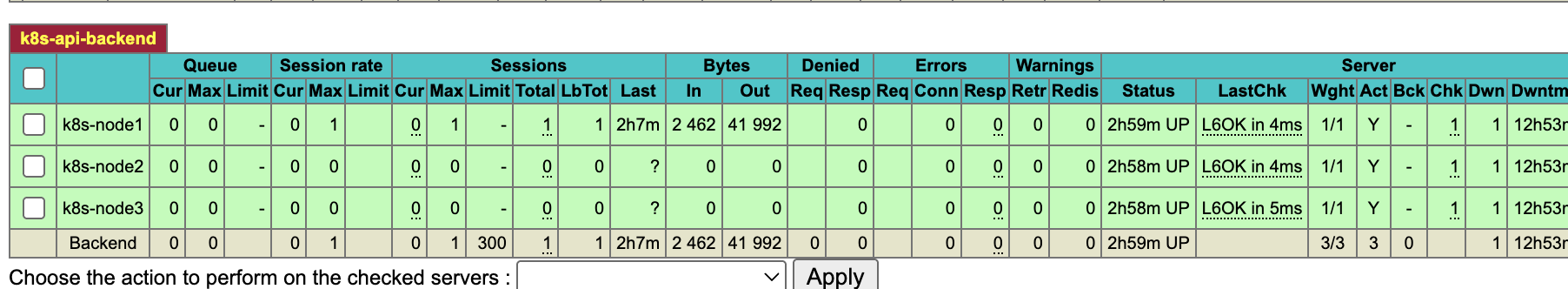

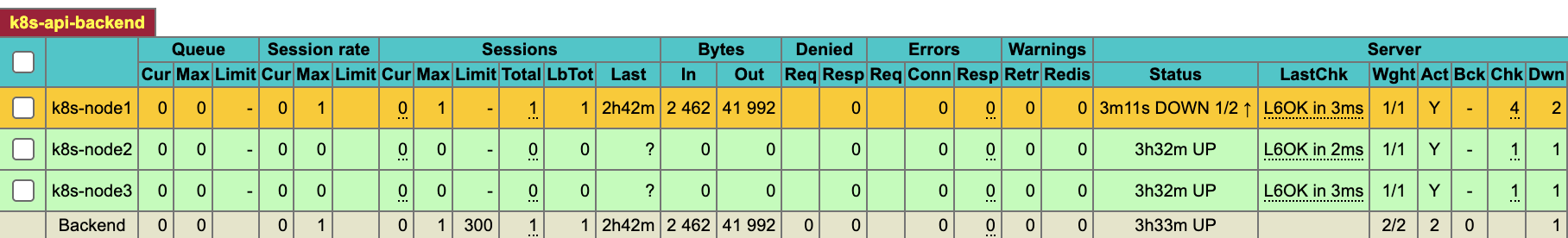

Currently only node 1 receives traffic; the goal is to let all three nodes receive it.

Deploying a sample app

# Deploy the sample application
cat << EOF | kubectl apply -f -
apiVersion: apps/v1
kind: Deployment
metadata:
  name: webpod
spec:
  replicas: 2
  selector:
    matchLabels:
      app: webpod
  template:
    metadata:
      labels:
        app: webpod
    spec:
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
          - labelSelector:
              matchExpressions:
              - key: app
                operator: In
                values:
                - sample-app
            topologyKey: "kubernetes.io/hostname"
      containers:
      - name: webpod
        image: traefik/whoami
        ports:
        - containerPort: 80
---
apiVersion: v1
kind: Service
metadata:
  name: webpod
  labels:
    app: webpod
spec:
  selector:
    app: webpod
  ports:
  - protocol: TCP
    port: 80
    targetPort: 80
    nodePort: 30003
  type: NodePort
EOF

root@admin-lb:~/kubespray# kubectl get deploy,svc,ep webpod -owide

NAME READY UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

deployment.apps/webpod 2/2 2 2 23s webpod traefik/whoami app=webpod

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

service/webpod NodePort 10.233.2.178 <none> 80:30003/TCP 23s app=webpod

NAME ENDPOINTS AGE

endpoints/webpod 10.233.67.5:80,10.233.67.6:80 23s

Reproducing a failure

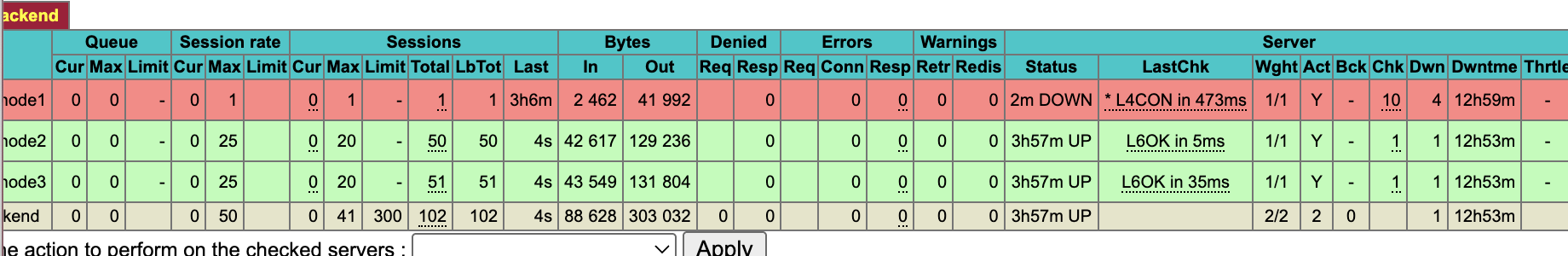

Power off node 1 to reproduce a failure.

Because two more backend servers remain, the request below, run from node 2, is still served normally.

while true; do curl -sk https://127.0.0.1:6443/version | grep gitVersion ; date; sleep 1; echo ; done

External LB -> use all three control plane nodes as HA backends

sed -i 's/192.168.10.12/192.168.10.10/g' /root/.kube/config

root@admin-lb:~/kubespray# ssh k8s-node1 kubectl get cm -n kube-system kubeadm-config -o yaml

apiVersion: v1
data:
  ClusterConfiguration: |
    apiServer:
      certSANs:
      - kubernetes
      - kubernetes.default
      - kubernetes.default.svc
      - kubernetes.default.svc.cluster.local
      - 10.233.0.1
      - localhost
      - 127.0.0.1
      - ::1
      - k8s-node1
      - k8s-node2
      - k8s-node3
      - lb-apiserver.kubernetes.local
      - 192.168.10.11
      - 192.168.10.12
      - 192.168.10.13
      - 10.0.2.15
      - fd17:625c:f037:2:a00:27ff:fe90:eaeb

ansible-playbook -i inventory/mycluster/inventory.ini -v cluster.yml --tags "control-plane" --limit kube_control_plane -e kube_version="1.32.9"

...

Sunday 08 February 2026 04:25:31 +0900 (0:00:00.033) 0:00:20.897 *******

===============================================================================

Gather minimal facts ---------------------------------------------------- 1.99s

kubernetes/control-plane : Kubeadm | Check apiserver.crt SAN hosts ------ 1.36s

kubernetes/control-plane : Backup old certs and keys -------------------- 1.29s

kubernetes/control-plane : Install | Copy kubectl binary from download dir --- 1.24s

kubernetes/control-plane : Kubeadm | Check apiserver.crt SAN IPs -------- 1.07s

Gather necessary facts (hardware) --------------------------------------- 0.98s

kubernetes/preinstall : Create other directories of root owner ---------- 0.74s

kubernetes/preinstall : Create kubernetes directories ------------------- 0.72s

kubernetes/control-plane : Backup old confs ----------------------------- 0.70s

kubernetes/control-plane : Update server field in component kubeconfigs --- 0.67s

win_nodes/kubernetes_patch : debug -------------------------------------- 0.66s

kubernetes/control-plane : Kubeadm | Create kubeadm config -------------- 0.64s

kubernetes/control-plane : Kubeadm | regenerate apiserver cert 2/2 ------ 0.42s

kubernetes/control-plane : Install kubectl bash completion -------------- 0.37s

kubernetes/control-plane : Create kube-scheduler config ----------------- 0.36s

kubernetes/control-plane : Renew K8S control plane certificates monthly 2/2 --- 0.35s

Gather necessary facts (network) ---------------------------------------- 0.34s

kubernetes/control-plane : Kubeadm | aggregate all SANs ----------------- 0.34s

kubernetes/control-plane : Set kubectl bash completion file permissions --- 0.34s

kubernetes/control-plane : Install script to renew K8S control plane certificates --- 0.30s

kubectl edit cm -n kube-system kubeadm-config

data:
  ClusterConfiguration: |
    apiServer:
      certSANs:
      - k8s-api-srv.admin-lb.com
      - 192.168.10.10
      - k8s-node1
      - k8s-node2
      - k8s-node3
      - kubernetes
      - kubernetes.default
      - kubernetes.default.svc
      - kubernetes.default.svc.cluster.local
      - lb-apiserver.kubernetes.local
      - localhost
      - 127.0.0.1
      - ::1
      - 10.233.0.1
      - 192.168.10.11
      - 192.168.10.12
      - 192.168.10.13
      - 10.0.2.15
    controlPlaneEndpoint: 192.168.10.10:6443

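The kubeadm-config ConfigMap should declare the official access endpoints (IP/DNS) of the API server through controlPlaneEndpoint and apiServer.certSANs. These values are used as the reference during certificate renewal and cluster upgrades.

For that reason, the VIP or DNS endpoint currently in use should be added explicitly.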

root@admin-lb:~/kubespray# kubectl get node -v=6

I0208 04:26:22.096297 21384 loader.go:402] Config loaded from file: /root/.kube/config

I0208 04:26:22.097979 21384 envvar.go:172] "Feature gate default state" feature="ClientsAllowCBOR" enabled=false

I0208 04:26:22.097992 21384 envvar.go:172] "Feature gate default state" feature="ClientsPreferCBOR" enabled=false

I0208 04:26:22.097995 21384 envvar.go:172] "Feature gate default state" feature="InformerResourceVersion" enabled=false

I0208 04:26:22.097997 21384 envvar.go:172] "Feature gate default state" feature="WatchListClient" enabled=false

I0208 04:26:22.111997 21384 round_trippers.go:560] GET https://192.168.10.11:6443/api/v1/nodes?limit=500 200 OK in 7 milliseconds

NAME STATUS ROLES AGE VERSION

k8s-node1 Ready control-plane 3h44m v1.32.9

k8s-node2 Ready control-plane 3h43m v1.32.9

k8s-node3 Ready control-plane 3h43m v1.32.9

k8s-node4 Ready <none> 3h43m v1.32.9

sed -i 's/192.168.10.10/k8s-api-srv.admin-lb.com/g' /root/.kube/config

root@admin-lb:~/kubespray# ssh k8s-node1 cat /etc/kubernetes/ssl/apiserver.crt | openssl x509 -text -noout

...

X509v3 Subject Alternative Name:

DNS:k8s-api-srv.admin-lb.com, DNS:k8s-node1, DNS:k8s-node2, DNS:k8s-node3, DNS:kubernetes, DNS:kubernetes.default, DNS:kubernetes.default.svc, DNS:kubernetes.default.svc.cluster.local, DNS:lb-apiserver.kubernetes.local, DNS:localhost, IP Address:10.233.0.1, IP Address:192.168.10.11, IP Address:127.0.0.1, IP Address:0:0:0:0:0:0:0:1, IP Address:192.168.10.10, IP Address:192.168.10.12, IP Address:192.168.10.13, IP Address:10.0.2.15, IP Address:FD17:625C:F037:2:A00:27FF:FE90:EAEB
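
Reading a long SAN line by eye is error-prone, so a small filter can answer whether one specific entry is present. This is a sketch: has_san is a hypothetical helper, and the sample string is a shortened copy of the SAN line above. It matches whole entries only, so 192.168.10.1 does not accidentally match 192.168.10.10.

```shell
#!/bin/sh
# has_san TYPE VALUE: succeed if the text on stdin contains the SAN entry "TYPE:VALUE".
# TYPE is "DNS" or "IP Address", exactly as printed by openssl x509 -text.
has_san() {
  tr ',' '\n' | sed 's/^[[:space:]]*//' | grep -Fxq "$1:$2"
}

# Shortened copy of the SAN line shown above.
sample='DNS:k8s-api-srv.admin-lb.com, DNS:k8s-node1, IP Address:192.168.10.10, IP Address:192.168.10.11'

if printf '%s\n' "$sample" | has_san "IP Address" "192.168.10.10"; then
  echo "192.168.10.10 is present in the SAN list"
fi
```

Against the real certificate, the input would come from the same pipeline used above, for example `ssh k8s-node1 cat /etc/kubernetes/ssl/apiserver.crt | openssl x509 -text -noout | has_san DNS k8s-api-srv.admin-lb.com`.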

Even with node 1 down, traffic is distributed to nodes 2 and 3.

Start node 1 again in VirtualBox.

Node management

Adding a node

root@admin-lb:~/kubespray# cat << EOF > /root/kubespray/inventory/mycluster/inventory.ini

[kube_control_plane]

k8s-node1 ansible_host=192.168.10.11 ip=192.168.10.11 etcd_member_name=etcd1

k8s-node2 ansible_host=192.168.10.12 ip=192.168.10.12 etcd_member_name=etcd2

k8s-node3 ansible_host=192.168.10.13 ip=192.168.10.13 etcd_member_name=etcd3

[etcd:children]

kube_control_plane

[kube_node]

k8s-node4 ansible_host=192.168.10.14 ip=192.168.10.14

k8s-node5 ansible_host=192.168.10.15 ip=192.168.10.15

EOF

root@admin-lb:~/kubespray# ansible-inventory -i /root/kubespray/inventory/mycluster/inventory.ini --graph

@all:

|--@ungrouped:

|--@etcd:

| |--@kube_control_plane:

| | |--k8s-node1

| | |--k8s-node2

| | |--k8s-node3

|--@kube_node:

| |--k8s-node4

| |--k8s-node5

root@admin-lb:~/kubespray# ansible -i inventory/mycluster/inventory.ini k8s-node5 -m ping

[WARNING]: Platform linux on host k8s-node5 is using the discovered Python

interpreter at /usr/bin/python3.12, but future installation of another Python

interpreter could change the meaning of that path. See

https://docs.ansible.com/ansible-

core/2.17/reference_appendices/interpreter_discovery.html for more information.

k8s-node5 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/bin/python3.12"

},

"changed": false,

"ping": "pong"

}

Add node 5 to the inventory and verify connectivity with the ping module.

ANSIBLE_FORCE_COLOR=true ansible-playbook -i inventory/mycluster/inventory.ini -v scale.yml --limit=k8s-node5 -e kube_version="1.32.9" | tee kubespray_add_worker_node.log

...

PLAY RECAP *********************************************************************

k8s-node5 : ok=411 changed=87 unreachable=0 failed=0 skipped=591 rescued=0 ignored=0

Sunday 08 February 2026 04:45:41 +0900 (0:00:00.014) 0:02:21.385 *******

===============================================================================

network_plugin/flannel : Flannel | Wait for flannel subnet.env file presence -- 30.24s

download : Download_container | Download image if required -------------- 6.15s

system_packages : Manage packages --------------------------------------- 6.13s

download : Download_container | Download image if required -------------- 5.89s

download : Download_container | Download image if required -------------- 4.63s

container-engine/containerd : Download_file | Download item ------------- 3.54s

download : Download_file | Download item -------------------------------- 3.21s

container-engine/crictl : Download_file | Download item ----------------- 3.15s

container-engine/runc : Download_file | Download item ------------------- 3.12s

download : Download_container | Download image if required -------------- 2.54s

network_plugin/cni : CNI | Copy cni plugins ----------------------------- 2.48s

container-engine/nerdctl : Download_file | Download item ---------------- 2.37s

download : Download_file | Download item -------------------------------- 2.04s

download : Download_file | Download item -------------------------------- 1.95s

container-engine/containerd : Containerd | Unpack containerd archive ---- 1.91s

download : Download_container | Download image if required -------------- 1.78s

container-engine/nerdctl : Extract_file | Unpacking archive ------------- 1.66s

container-engine/crictl : Extract_file | Unpacking archive -------------- 1.65s

etcd : Check_certs | Register certs that have already been generated on first etcd node --- 1.60s

network_plugin/cni : CNI | Copy cni plugins ----------------------------- 1.43s

root@admin-lb:~/kubespray# kubectl get node -owide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

k8s-node1 Ready control-plane 4h4m v1.32.9 192.168.10.11 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

k8s-node2 Ready control-plane 4h3m v1.32.9 192.168.10.12 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

k8s-node3 Ready control-plane 4h3m v1.32.9 192.168.10.13 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

k8s-node4 Ready <none> 4h3m v1.32.9 192.168.10.14 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

k8s-node5 Ready <none> 82s v1.32.9 192.168.10.15 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

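Removing a node

One caution: using remove-node.yml to remove a node that is in an abnormal state can break the cluster,

and reset.yml, the cluster reset playbook, deletes the entire Kubernetes cluster. It cannot be undone once it has run, so the recommendation is not to use it. On a production cluster, consider deleting that playbook altogether.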

https://github.com/kubernetes-sigs/kubespray/blob/master/playbooks/remove_node.yml


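The playbook to use when removing a node is remove-node.yml.

It is meant to be run while the node to be removed is still in a healthy state.

To prevent cluster inconsistencies during node removal, the playbook validates its input and asks for user confirmation first, then removes the node in stages (pre-remove → reset → post-remove) with the etcd and control-plane roles taken into account.

The playbook is safe for two reasons: it asks the user to type yes once more before it runs, and it keeps a fixed order of detaching the node from the cluster first, then resetting the node, and finally cleaning up the metadata, which leaves the state consistent.

It also follows a separate removal procedure so that the etcd quorum is not broken, which protects data integrity. It looks like a very conservative, well-designed playbook.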
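
The quorum concern comes down to simple arithmetic: an etcd cluster of N members needs a majority, floor(N/2) + 1, to keep accepting writes. The helper names below (quorum, tolerance) are made up for this illustration.

```shell
#!/bin/sh
# quorum N: smallest majority of an N-member etcd cluster.
quorum() { echo $(( $1 / 2 + 1 )); }

# tolerance N: how many members can fail while a majority still remains.
tolerance() { echo $(( $1 - ($1 / 2 + 1) )); }

for n in 1 2 3 4 5; do
  echo "members=$n quorum=$(quorum "$n") tolerance=$(tolerance "$n")"
done
```

For the three-member etcd cluster in this post, one member can be lost safely. After removing one member, the remaining two still need both members for quorum, so a second failure would stop writes.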

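Validate nodes for removal

- Verifies up front that a removal target was specified, which prevents a mistaken run from affecting the whole cluster.

Common tasks for every playbooks

- Performs the base configuration and environment initialization shared by every playbook.

Confirm node removal

- Asks the user for explicit confirmation before the actual removal, blocking an unintended destructive operation one more time.

Gather facts

- Collects the information needed to determine the role of the node being removed (control-plane, worker, etcd, and so on).

Reset node

- Safely detaches the node from the cluster, then resets the Kubernetes components and state on that node.

- If the node is an etcd member, removes the member from the etcd cluster and resets it.

- Fully resets the node by running kubeadm reset and removing the CNI, the kubelet and container runtime configuration, certificates, and kubeconfig files.

Post node removal

- Cleans up cluster metadata and leftover settings after the node is removed, keeping the state consistent.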

ansible-playbook -i inventory/mycluster/inventory.ini -v remove-node.yml -e node=k8s-node5

> yes

...

Sunday 08 February 2026 04:58:58 +0900 (0:00:00.252) 0:00:38.802 *******

===============================================================================

reset : Reset | delete some files and directories ----------------------- 7.35s

system_packages : Manage packages --------------------------------------- 6.35s

network_plugin/cni : CNI | Copy cni plugins ----------------------------- 2.71s

bootstrap_os : Fetch /etc/os-release ------------------------------------ 1.54s

Gather necessary facts (hardware) --------------------------------------- 1.20s

Confirm Execution ------------------------------------------------------- 1.11s

reset : Reset | stop services ------------------------------------------- 1.07s

reset : Gather active network services ---------------------------------- 1.04s

reset : Reset | stop containerd and etcd services ----------------------- 1.00s

reset : Reset | remove containerd binary files -------------------------- 0.97s

Gather information about installed services ----------------------------- 0.96s

reset : Reset | remove services ----------------------------------------- 0.70s

bootstrap_os : Assign inventory name to unconfigured hostnames (non-CoreOS, non-Flatcar, Suse and ClearLinux, non-Fedora) --- 0.68s

bootstrap_os : Gather host facts to get ansible_distribution_version ansible_distribution_major_version --- 0.67s

reset : Flush iptables -------------------------------------------------- 0.45s

reset : Reset | systemctl daemon-reload --------------------------------- 0.45s

reset : Restart active network services --------------------------------- 0.42s

system_packages : Gather OS information --------------------------------- 0.41s

Gather necessary facts (network) ---------------------------------------- 0.40s

reset : Reset | stop all cri containers --------------------------------- 0.36s

root@admin-lb:~/kubespray# kubectl get node -owide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

k8s-node1 Ready control-plane 4h17m v1.32.9 192.168.10.11 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

k8s-node2 Ready control-plane 4h16m v1.32.9 192.168.10.12 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

k8s-node3 Ready control-plane 4h16m v1.32.9 192.168.10.13 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

k8s-node4 Ready <none> 4h16m v1.32.9 192.168.10.14 <none> Rocky Linux 10.0 (Red Quartz) 6.12.0-55.39.1.el10_0.aarch64 containerd://2.1.5

Monitoring setup

NFS provisioner

root@admin-lb:~/kubespray# kubectl create ns nfs-provisioner

helm repo add nfs-subdir-external-provisioner https://kubernetes-sigs.github.io/nfs-subdir-external-provisioner/

helm install nfs-provisioner nfs-subdir-external-provisioner/nfs-subdir-external-provisioner -n nfs-provisioner \
  --set nfs.server=192.168.10.10 \
  --set nfs.path=/srv/nfs/share \
  --set storageClass.defaultClass=true

namespace/nfs-provisioner created

"nfs-subdir-external-provisioner" has been added to your repositories

NAME: nfs-provisioner

LAST DEPLOYED: Sun Feb 8 05:08:57 2026

NAMESPACE: nfs-provisioner

STATUS: deployed

REVISION: 1

TEST SUITE: None

Prometheus

root@admin-lb:~/kubespray# helm repo add prometheus-community https://prometheus-community.github.io/helm-charts

"prometheus-community" has been added to your repositories

root@admin-lb:~/kubespray# cat <<EOT > monitor-values.yaml
prometheus:
  prometheusSpec:
    scrapeInterval: "20s"
    evaluationInterval: "20s"
    storageSpec:
      volumeClaimTemplate:
        spec:
          accessModes: ["ReadWriteOnce"]
          resources:
            requests:
              storage: 10Gi
    additionalScrapeConfigs:
      - job_name: 'haproxy-metrics'
        static_configs:
          - targets:
              - '192.168.10.10:8405'
    externalLabels:
      cluster: "myk8s-cluster"
  service:
    type: NodePort
    nodePort: 30001
grafana:
  defaultDashboardsTimezone: Asia/Seoul
  adminPassword: prom-operator
  service:
    type: NodePort
    nodePort: 30002
alertmanager:
  enabled: false
defaultRules:
  create: false
kubeProxy:
  enabled: false
EOT

helm install kube-prometheus-stack prometheus-community/kube-prometheus-stack --version 80.13.3 \
  -f monitor-values.yaml --create-namespace --namespace monitoring

Downloading Grafana dashboards

curl -o 12693_rev12.json https://grafana.com/api/dashboards/12693/revisions/12/download

curl -o 15661_rev2.json https://grafana.com/api/dashboards/15661/revisions/2/download

curl -o k8s-system-api-server.json https://raw.githubusercontent.com/dotdc/grafana-dashboards-kubernetes/refs/heads/master/dashboards/k8s-system-api-server.json

sed -i -e 's/${DS_PROMETHEUS}/prometheus/g' 12693_rev12.json

sed -i -e 's/${DS__VICTORIAMETRICS-PROD-ALL}/prometheus/g' 15661_rev2.json

sed -i -e 's/${DS_PROMETHEUS}/prometheus/g' k8s-system-api-server.json

kubectl create configmap my-dashboard --from-file=12693_rev12.json --from-file=15661_rev2.json --from-file=k8s-system-api-server.json -n monitoring

kubectl label configmap my-dashboard grafana_dashboard="1" -n monitoring

root@admin-lb:~# kubectl exec -it -n monitoring deploy/kube-prometheus-stack-grafana -- ls -l /tmp/dashboards

total 976

-rw-r--r-- 1 grafana 472 333790 Feb 7 20:13 12693_rev12.json

-rw-r--r-- 1 grafana 472 198839 Feb 7 20:13 15661_rev2.json

-rw-r--r-- 1 grafana 472 12367 Feb 7 20:11 apiserver.json

-rw-r--r-- 1 grafana 472 15598 Feb 7 20:11 cluster-total.json

-rw-r--r-- 1 grafana 472 8600 Feb 7 20:11 controller-manager.json

-rw-r--r-- 1 grafana 472 8340 Feb 7 20:11 etcd.json

-rw-r--r-- 1 grafana 472 7282 Feb 7 20:11 grafana-overview.json

-rw-r--r-- 1 grafana 472 25210 Feb 7 20:11 k8s-coredns.json

-rw-r--r-- 1 grafana 472 26811 Feb 7 20:11 k8s-resources-cluster.json

-rw-r--r-- 1 grafana 472 9837 Feb 7 20:11 k8s-resources-multicluster.json

-rw-r--r-- 1 grafana 472 27310 Feb 7 20:11 k8s-resources-namespace.json

-rw-r--r-- 1 grafana 472 11208 Feb 7 20:11 k8s-resources-node.json

-rw-r--r-- 1 grafana 472 25881 Feb 7 20:11 k8s-resources-pod.json

-rw-r--r-- 1 grafana 472 24677 Feb 7 20:11 k8s-resources-workload.json

-rw-r--r-- 1 grafana 472 27747 Feb 7 20:11 k8s-resources-workloads-namespace.json

-rw-r--r-- 1 grafana 472 35173 Feb 7 20:13 k8s-system-api-server.json

-rw-r--r-- 1 grafana 472 19009 Feb 7 20:11 kubelet.json

-rw-r--r-- 1 grafana 472 11767 Feb 7 20:11 namespace-by-pod.json

-rw-r--r-- 1 grafana 472 19013 Feb 7 20:11 namespace-by-workload.json

-rw-r--r-- 1 grafana 472 8222 Feb 7 20:11 node-cluster-rsrc-use.json

-rw-r--r-- 1 grafana 472 7833 Feb 7 20:11 node-rsrc-use.json

-rw-r--r-- 1 grafana 472 9750 Feb 7 20:11 nodes-aix.json

-rw-r--r-- 1 grafana 472 10987 Feb 7 20:11 nodes-darwin.json

-rw-r--r-- 1 grafana 472 10339 Feb 7 20:11 nodes.json

-rw-r--r-- 1 grafana 472 5971 Feb 7 20:11 persistentvolumesusage.json

-rw-r--r-- 1 grafana 472 6775 Feb 7 20:11 pod-total.json

-rw-r--r-- 1 grafana 472 11115 Feb 7 20:11 prometheus.json

-rw-r--r-- 1 grafana 472 9731 Feb 7 20:11 scheduler.json

-rw-r--r-- 1 grafana 472 11025 Feb 7 20:11 workload-total.json

Adding etcd metrics collection

cat << EOF >> inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

etcd_metrics: true

etcd_listen_metrics_urls: "http://0.0.0.0:2381"

EOF

ansible-playbook -i inventory/mycluster/inventory.ini -v cluster.yml --tags "etcd" --limit etcd -e kube_version="1.32.9"

cat <<EOF > monitor-add-values.yaml
prometheus:
  prometheusSpec:
    additionalScrapeConfigs:
      - job_name: 'etcd'
        metrics_path: /metrics
        static_configs:
          - targets:
              - '192.168.10.11:2381'
              - '192.168.10.12:2381'
              - '192.168.10.13:2381'
EOF

helm get values -n monitoring kube-prometheus-stack

helm upgrade kube-prometheus-stack prometheus-community/kube-prometheus-stack --version 80.13.3 \
  --reuse-values -f monitor-add-values.yaml --namespace monitoring
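
One thing worth double-checking after this upgrade: Helm merges nested maps key by key but replaces lists as a whole, so a values file that lists only the etcd job may drop the haproxy-metrics job defined in monitor-values.yaml. A sketch that keeps both jobs, assuming the HAProxy target should remain scraped, is to repeat the full list in the additional values file:

```shell
#!/bin/sh
# Write a values file whose additionalScrapeConfigs list carries both jobs.
# The quoted 'EOF' keeps the shell from expanding anything inside the YAML.
cat <<'EOF' > monitor-add-values.yaml
prometheus:
  prometheusSpec:
    additionalScrapeConfigs:
      - job_name: 'haproxy-metrics'
        static_configs:
          - targets:
              - '192.168.10.10:8405'
      - job_name: 'etcd'
        metrics_path: /metrics
        static_configs:
          - targets:
              - '192.168.10.11:2381'
              - '192.168.10.12:2381'
              - '192.168.10.13:2381'
EOF

# Quick sanity check: count the scrape jobs that were written.
grep -c 'job_name:' monitor-add-values.yaml
```

Running `helm get values -n monitoring kube-prometheus-stack` after the upgrade shows which jobs actually ended up in the release.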

Kubernetes upgrade (1.32.9 -> 1.32.10)

upgrade_cluster.yml

https://github.com/kubernetes-sigs/kubespray/blob/master/playbooks/upgrade_cluster.yml


Common tasks for every playbooks

- name: Common tasks for every playbooks
  import_playbook: boilerplate.yml

- Loads the settings that every Kubespray playbook needs before anything else.

- Proxy settings, default variables, and environment settings are initialized here.

- Ensures that every later play runs against the same baseline environment.

- Prevents exceptions caused by environment differences in the middle of an upgrade.

Gather facts

- name: Gather facts
  import_playbook: internal_facts.yml

- Collects the internal facts used to determine node roles (control-plane, worker, etcd, calico_rr, and so on).

- The facts collected at this stage are the data that group targeting such as hosts: kube_control_plane, kube_node, and etcd relies on to work correctly.

Download images to ansible host cache via first kube_control_plane node

- hosts: kube_control_plane[0]
  roles:
    - kubespray_defaults
    - kubernetes/preinstall
    - download

- Uses only the first control-plane node to download images and binaries in advance.

- The download_run_once condition prevents duplicate downloads.

- Keeps the upgrade from failing partway through because of network issues.

- Later nodes use the local cache, which improves speed and reliability.

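Prepare nodes for upgrade

- hosts: k8s_cluster:etcd:calico_rr
  roles:
    - kubespray_defaults
    - kubernetes/preinstall
    - download

- Brings every node in the cluster into an upgrade-ready state.

- This stage covers OS settings, required packages, and image preparation.

- Removes failures caused by environment differences before the actual upgrade starts.

Upgrade container engine on non-cluster nodes

- hosts: etcd:calico_rr:!k8s_cluster
  serial: 20%
  roles:
    - container-engine

- Upgrades the container runtime first on nodes that do not run Kubernetes workloads directly.

- Handling them separately from the core cluster nodes minimizes the blast radius.

- serial processes only part of the hosts at a time, which lowers the risk.

Install etcd

- name: Install etcd
  import_playbook: install_etcd.yml

- Upgrades or reconfigures the etcd cluster.

- It is handled before the other components, and independently of them.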

Handle upgrades to control plane components first

- hosts: kube_control_plane
  serial: 1
  roles:
    - upgrade/pre-upgrade
    - upgrade/system-upgrade
    - kubernetes/control-plane
    - kubernetes/client

- Upgrades the control-plane nodes one at a time (serial: 1).

- This includes kube-apiserver, controller-manager, and scheduler.

- Going one node at a time avoids breaking API compatibility; touching several control-plane nodes at once can break the consistency of the cluster.

Upgrade calico and external cloud provider

- hosts: kube_control_plane:calico_rr:kube_node
  roles:
    - kubernetes-apps/external_cloud_controller
    - network_plugin

- Applies the network plugin (Calico) and the cloud controller to all nodes.

- Running it after the control-plane upgrade minimizes the chance of a network outage.

Finally handle worker upgrades

- hosts: kube_node:calico_rr:!kube_control_plane
  serial: 20%
  roles:
    - upgrade/pre-upgrade
    - kubernetes/node
    - kubernetes/kubeadm

- Upgrades the worker nodes sequentially in batches.

- Not everything is upgraded at once, so service availability is preserved.

- The control plane is already stable at this point.
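
To get a feel for what serial: 20% means for a given number of workers, the batch size can be estimated with integer arithmetic. This sketch assumes that Ansible rounds the percentage down and never goes below one host per batch, with the last batch taking the remainder. The helper names are hypothetical, and the integer math is an approximation of that rule rather than Ansible's own code.

```shell
#!/bin/sh
# batch_size PERCENT HOSTS: hosts per batch for a percentage-based serial value.
batch_size() {
  size=$(( $1 * $2 / 100 ))     # integer division rounds down
  [ "$size" -ge 1 ] || size=1   # assumed floor of one host per batch
  echo "$size"
}

# batches PERCENT HOSTS: number of passes, the last one taking the remainder.
batches() {
  size=$(batch_size "$1" "$2")
  echo $(( ($2 + size - 1) / size ))
}

echo "2 workers at 20%:  $(batch_size 20 2) per batch, $(batches 20 2) batches"
echo "10 workers at 20%: $(batch_size 20 10) per batch, $(batches 20 10) batches"
```

With only a couple of workers, as in this lab, 20% therefore behaves like upgrading one node at a time.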

Patch Kubernetes for Windows

- hosts: kube_control_plane[0]
  roles:
    - win_nodes/kubernetes_patch

- Windows nodes are accounted for as well, which is a nice surprise.

Install Calico Route Reflector

- hosts: calico_rr
  roles:
    - network_plugin/calico/rr

- Configures a Route Reflector for BGP-based network scalability.

- Helps maintain network performance and stability in large clusters.

Install Kubernetes apps

- hosts: kube_control_plane
  roles:
    - kubernetes-apps/ingress_controller
    - kubernetes-apps

- Installs or upgrades cluster add-on applications such as Ingress and CSI.

Apply resolv.conf changes now that cluster DNS is up

- hosts: k8s_cluster
  roles:
    - kubernetes/preinstall

- Applies the final DNS settings once CoreDNS is running normally.

- Applying the DNS settings too early can cause name resolution failures during the upgrade.

Running the playbook

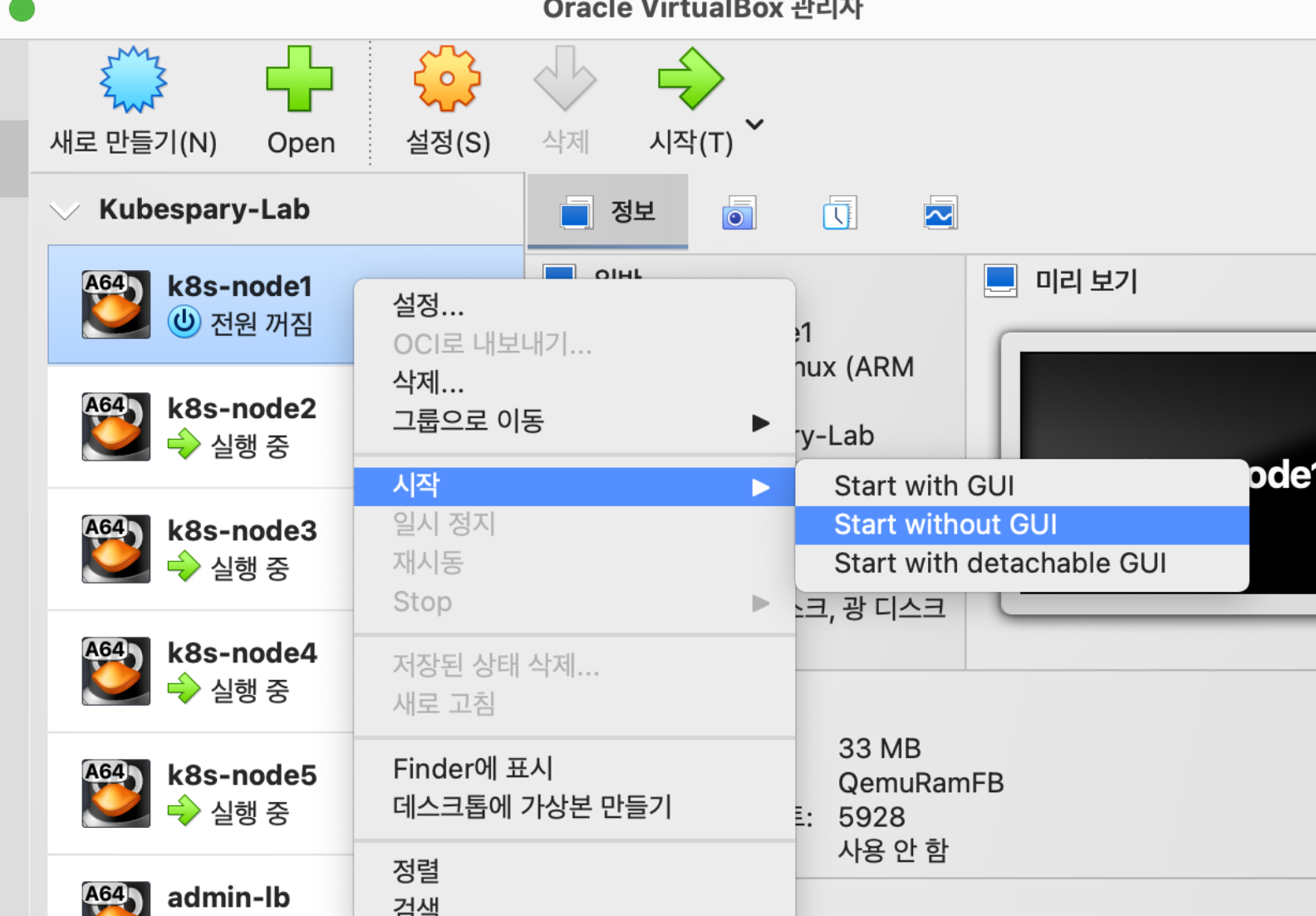

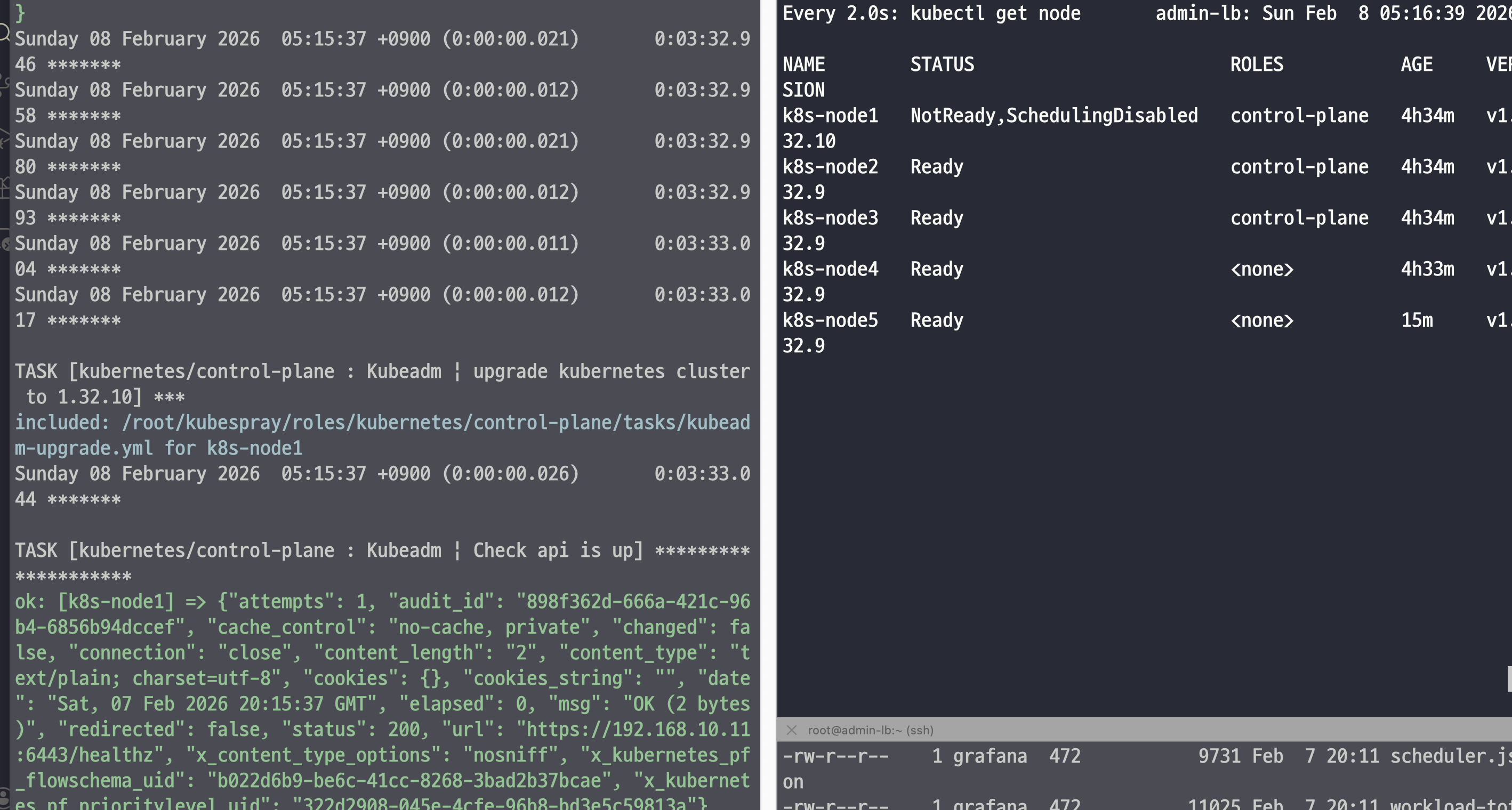

ANSIBLE_FORCE_COLOR=true ansible-playbook -i inventory/mycluster/inventory.ini -v upgrade-cluster.yml -e kube_version="1.32.10" --limit "kube_control_plane:etcd" | tee kubespray_upgrade.log

You can see the upgrade proceed one node after another.

PLAY RECAP *********************************************************************

k8s-node1 : ok=533 changed=49 unreachable=0 failed=0 skipped=1091 rescued=0 ignored=0

k8s-node2 : ok=483 changed=35 unreachable=0 failed=0 skipped=994 rescued=0 ignored=0

k8s-node3 : ok=489 changed=35 unreachable=0 failed=0 skipped=1026 rescued=0 ignored=0

Sunday 08 February 2026 05:23:39 +0900 (0:00:00.041) 0:11:35.319 *******

===============================================================================

kubernetes/control-plane : Kubeadm | Upgrade first control plane node to 1.32.10 - 105.01s

kubernetes/control-plane : Kubeadm | Upgrade other control plane nodes to 1.32.10 -- 92.19s

kubernetes/control-plane : Kubeadm | Upgrade other control plane nodes to 1.32.10 -- 81.87s

network_plugin/flannel : Flannel | Wait for flannel subnet.env file presence -- 15.86s

kubernetes/control-plane : Control plane | wait for the apiserver to be running -- 10.46s

download : Download_container | Download image if required -------------- 9.82s

upgrade/pre-upgrade : Drain node ---------------------------------------- 6.83s

download : Download_file | Download item -------------------------------- 6.78s

etcd : Gen_certs | Write etcd member/admin and kube_control_plane client certs to other etcd nodes --- 6.59s

download : Download_container | Download image if required -------------- 5.67s

system_packages : Manage packages --------------------------------------- 5.54s

download : Download_container | Download image if required -------------- 5.50s

download : Download_file | Download item -------------------------------- 5.29s

network_plugin/cni : CNI | Copy cni plugins ----------------------------- 5.20s

network_plugin/cni : CNI | Copy cni plugins ----------------------------- 5.13s

download : Download_container | Download image if required -------------- 5.00s

kubernetes/control-plane : Backup old certs and keys -------------------- 4.85s

container-engine/containerd : Containerd | Unpack containerd archive ---- 4.83s

kubernetes/control-plane : Kubeadm | Check apiserver.crt SAN IPs -------- 4.17s

etcdctl_etcdutl : Extract_file | Unpacking archive ---------------------- 4.06s

NAME STATUS ROLES AGE VERSION

k8s-node1 Ready control-plane 4h45m v1.32.10

k8s-node2 Ready control-plane 4h45m v1.32.10

k8s-node3 Ready control-plane 4h45m v1.32.10

k8s-node4 Ready <none> 4h44m v1.32.9

k8s-node5 Ready <none> 26m v1.32.9

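The control plane upgrade completed in about 11 minutes (0:11:35 in the recap above). The three control plane nodes now run v1.32.10, while the workers k8s-node4 and k8s-node5 are still on v1.32.9.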
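To see at a glance which nodes still need upgrading, the VERSION column of the node list can be filtered. The following is a minimal sketch, assuming the default `kubectl get nodes` column layout where VERSION is the last column. `nodes_not_at_version` is a hypothetical helper name, and the sample input is copied from the output above so the snippet runs without a cluster.

```shell
#!/usr/bin/env bash
# Sketch: print the names of nodes whose VERSION differs from the target version.
# Input: `kubectl get nodes` output on stdin (header row included).
# `nodes_not_at_version` is a hypothetical helper, not part of kubespray or kubectl.
nodes_not_at_version() {
  local target="$1"
  # NR > 1 skips the header row; NF > 0 skips blank lines; VERSION is the last field.
  awk -v target="$target" 'NR > 1 && NF > 0 && $NF != target { print $1 }'
}

# Sample input taken from the node list in this post.
sample_nodes='NAME STATUS ROLES AGE VERSION
k8s-node1 Ready control-plane 4h45m v1.32.10
k8s-node2 Ready control-plane 4h45m v1.32.10
k8s-node3 Ready control-plane 4h45m v1.32.10
k8s-node4 Ready <none> 4h44m v1.32.9
k8s-node5 Ready <none> 26m v1.32.9'

printf '%s\n' "$sample_nodes" | nodes_not_at_version v1.32.10
```

On a live cluster the same filter could be fed directly with `kubectl get nodes | nodes_not_at_version v1.32.10`.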

root@admin-lb:~# ssh k8s-node1 crictl images

IMAGE TAG IMAGE ID SIZE

docker.io/flannel/flannel-cni-plugin v1.7.1-flannel1 e5bf9679ea8c3 5.14MB

docker.io/flannel/flannel v0.27.3 cadcae92e6360 33.1MB

quay.io/prometheus/node-exporter v1.10.2 6b5bc413b280c 12.1MB

registry.k8s.io/coredns/coredns v1.11.3 2f6c962e7b831 16.9MB

registry.k8s.io/kube-apiserver v1.32.10 03aec5fd5841e 26.4MB

registry.k8s.io/kube-apiserver v1.32.9 02ea53851f07d 26.4MB

registry.k8s.io/kube-controller-manager v1.32.10 66490a6490dde 24.2MB

registry.k8s.io/kube-controller-manager v1.32.9 f0bcbad5082c9 24.1MB

registry.k8s.io/kube-proxy v1.32.10 8b57c1f8bd2dd 27.6MB

registry.k8s.io/kube-proxy v1.32.9 72b57ec14d31e 27.4MB

registry.k8s.io/kube-scheduler v1.32.10 fcf368a1abd0b 19.2MB

registry.k8s.io/kube-scheduler v1.32.9 1d625baf81b59 19.1MB

registry.k8s.io/metrics-server/metrics-server v0.8.0 bc6c1e09a843d 20.6MB

registry.k8s.io/pause 3.10 afb61768ce381 268kB

Check etcd

root@admin-lb:~# ssh k8s-node1 systemctl status etcd --no-pager | grep active

Active: active (running) since Sun 2026-02-08 04:40:35 KST; 47min ago

root@admin-lb:~# ssh k8s-node1 etcdctl.sh member list -w table

+------------------+---------+-------+----------------------------+----------------------------+------------+

| ID | STATUS | NAME | PEER ADDRS | CLIENT ADDRS | IS LEARNER |

+------------------+---------+-------+----------------------------+----------------------------+------------+

| 8b0ca30665374b0 | started | etcd3 | https://192.168.10.13:2380 | https://192.168.10.13:2379 | false |

| 2106626b12a4099f | started | etcd2 | https://192.168.10.12:2380 | https://192.168.10.12:2379 | false |

| c6702130d82d740f | started | etcd1 | https://192.168.10.11:2380 | https://192.168.10.11:2379 | false |

+------------------+---------+-------+----------------------------+----------------------------+------------+

root@admin-lb:~# for i in {1..3}; do echo ">> k8s-node$i <<"; ssh k8s-node$i etcdctl.sh endpoint status -w table; echo; done

>> k8s-node1 <<

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| 127.0.0.1:2379 | c6702130d82d740f | 3.5.25 | 23 MB | false | false | 6 | 55240 | 55240 | |

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

>> k8s-node2 <<

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| 127.0.0.1:2379 | 2106626b12a4099f | 3.5.25 | 22 MB | true | false | 6 | 55243 | 55243 | |

+----------------+------------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

>> k8s-node3 <<

+----------------+-----------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |

+----------------+-----------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

| 127.0.0.1:2379 | 8b0ca30665374b0 | 3.5.25 | 22 MB | false | false | 6 | 55243 | 55243 | |

+----------------+-----------------+---------+---------+-----------+------------+-----------+------------+--------------------+--------+

root@admin-lb:~# for i in {1..3}; do echo ">> k8s-node$i <<"; ssh k8s-node$i tree /var/backups; echo; done # check the etcd backups

>> k8s-node1 <<

/var/backups

└── etcd-2026-02-08_00:41:43

    ├── member

    │   ├── snap

    │   │   └── db

    │   └── wal

    │       └── 0000000000000000-0000000000000000.wal

    └── snapshot.db

5 directories, 3 files

>> k8s-node2 <<

/var/backups

└── etcd-2026-02-08_00:41:43

    ├── member

    │   ├── snap

    │   │   └── db

    │   └── wal

    │       └── 0000000000000000-0000000000000000.wal

    └── snapshot.db

5 directories, 3 files

>> k8s-node3 <<

/var/backups

└── etcd-2026-02-08_00:41:43

    ├── member

    │   ├── snap

    │   │   └── db

    │   └── wal

    │       └── 0000000000000000-0000000000000000.wal

    └── snapshot.db

5 directories, 3 files

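This confirms that etcd was not affected by the control plane upgrade: the service has been active since 04:40:35, before the upgrade began, and all three members are started on version 3.5.25.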
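A healthy etcd cluster should report exactly one leader across its members (k8s-node2 in the output above). The following is a minimal sketch of that check, assuming the `-w table` layout shown in this post, where IS LEADER is the fifth column. `count_etcd_leaders` is a hypothetical helper name, and the sample rows are copied from the output above.

```shell
#!/usr/bin/env bash
# Sketch: count etcd members that report IS LEADER = true.
# Input: concatenated `etcdctl endpoint status -w table` output on stdin.
# `count_etcd_leaders` is a hypothetical helper, not part of etcdctl or kubespray.
count_etcd_leaders() {
  # Splitting on "|": $1 is empty, $2 ENDPOINT, $3 ID, $4 VERSION, $5 DB SIZE, $6 IS LEADER.
  # Border lines ("+---+") and the ">> node <<" banners contain no "|" and are skipped.
  awk -F'|' '
    NF >= 7 && $2 !~ /ENDPOINT/ {
      leader = $6
      gsub(/[[:space:]]/, "", leader)
      if (leader == "true") leaders++
    }
    END { print leaders + 0 }
  '
}

# Sample rows taken from the endpoint status tables in this post.
sample_status='>> k8s-node1 <<
| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | IS LEARNER | RAFT TERM | RAFT INDEX | RAFT APPLIED INDEX | ERRORS |
| 127.0.0.1:2379 | c6702130d82d740f | 3.5.25 | 23 MB | false | false | 6 | 55240 | 55240 | |
>> k8s-node2 <<
| 127.0.0.1:2379 | 2106626b12a4099f | 3.5.25 | 22 MB | true | false | 6 | 55243 | 55243 | |
>> k8s-node3 <<
| 127.0.0.1:2379 | 8b0ca30665374b0 | 3.5.25 | 22 MB | false | false | 6 | 55243 | 55243 | |'

printf '%s\n' "$sample_status" | count_etcd_leaders
```

On a live cluster, the loop used earlier could be piped into the helper: `for i in {1..3}; do ssh k8s-node$i etcdctl.sh endpoint status -w table; done | count_etcd_leaders`. Any value other than 1 would be worth investigating before continuing with the upgrade.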

Worker node upgrade

root@admin-lb:~/kubespray# kubectl get pod -A -owide | grep node4

default webpod-697b545f57-m95gv 1/1 Running 0 81m 10.233.67.6 k8s-node4 <none> <none>

default webpod-697b545f57-prbjp 1/1 Running 0 81m 10.233.67.5 k8s-node4 <none> <none>

kube-system coredns-664b99d7c7-5drtx 1/1 Running 0 4h47m 10.233.67.2 k8s-node4 <none> <none>

kube-system kube-flannel-ds-arm64-wkwrg 1/1 Running 0 4h47m 192.168.10.14 k8s-node4 <none> <none>

kube-system kube-ops-view-8484bdc5df-8xf2c 1/1 Running 0 109m 10.233.67.4 k8s-node4 <none> <none>

kube-system kube-proxy-qw84v 1/1 Running 0 12m 192.168.10.14 k8s-node4 <none> <none>

kube-system metrics-server-65fdf69dcb-jnvbd 1/1 Running 0 4h47m 10.233.67.3 k8s-node4 <none> <none>

kube-system nginx-proxy-k8s-node4 1/1 Running 1 4h47m 192.168.10.14 k8s-node4 <none> <none>

monitoring kube-prometheus-stack-prometheus-node-exporter-8rcnt 1/1 Running 0 19m 192.168.10.14 k8s-node4 <none> <none>

monitoring prometheus-kube-prometheus-stack-prometheus-0 2/2 Running 0 19m 10.233.67.8 k8s-node4 <none> <none>

root@admin-lb:~/kubespray# kubectl get pod -A -owide | grep node5

kube-system kube-flannel-ds-arm64-4plpp 1/1 Running 1 (28m ago) 29m 192.168.10.15 k8s-node5 <none> <none>

kube-system kube-proxy-5lzz6 1/1 Running 0 12m 192.168.10.15 k8s-node5 <none> <none>

kube-system nginx-proxy-k8s-node5 1/1 Running 0 29m 192.168.10.15 k8s-node5 <none> <none>

monitoring kube-prometheus-stack-grafana-5cb7c586f9-bnfd2 3/3 Running 0 19m 10.233.69.6 k8s-node5 <none> <none>

monitoring kube-prometheus-stack-kube-state-metrics-7846957b5b-fqbf2 1/1 Running 0 19m 10.233.69.5 k8s-node5 <none> <none>

monitoring kube-prometheus-stack-operator-584f446c98-tjxfj 1/1 Running 0 19m 10.233.69.4 k8s-node5 <none> <none>

monitoring kube-prometheus-stack-prometheus-node-exporter-pkxgl 1/1 Running 0 19m 192.168.10.15 k8s-node5 <none> <none>

nfs-provisioner nfs-provisioner-nfs-subdir-external-provisioner-b549b9dff-tbddr 1/1 Running 0 21m 10.233.69.2 k8s-node5 <none> <none>
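The next command upgrades a single worker by passing `--limit` to `upgrade-cluster.yml`. The following is a minimal sketch of how the same invocation could be generated for several workers in turn, assuming the remaining worker is upgraded the same way. `worker_upgrade_cmd` is a hypothetical helper, the flags mirror the command used in this post, and the script only prints the commands rather than running them.

```shell
#!/usr/bin/env bash
# Sketch: build one upgrade-cluster.yml invocation per worker node.
# `worker_upgrade_cmd` is a hypothetical helper; the inventory path and flags
# are the ones used in this post.
worker_upgrade_cmd() {
  local node="$1" version="$2"
  printf 'ansible-playbook -i inventory/mycluster/inventory.ini -v upgrade-cluster.yml -e kube_version="%s" --limit "%s"\n' \
    "$version" "$node"
}

# Print (do not run) the command for each worker, one node at a time.
# The node order here is an assumption for illustration.
for node in k8s-node5 k8s-node4; do
  worker_upgrade_cmd "$node" 1.32.10
done
```

Printing the commands first makes it easy to review them, and running them one node at a time keeps only a single worker drained at any moment.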

root@admin-lb:~/kubespray# ansible-playbook -i inventory/mycluster/inventory.ini -v upgrade-cluster.yml -e kube_version="1.32.10" --limit "k8s-node5"

...

***********

k8s-node5 : ok=369 changed=20 unreachable=0 failed=0 skipped=608 rescued=0 ignored=0

Sunday 08 February 2026 05:33:05 +0900 (0:00:00.049) 0:02:15.924 *******

===============================================================================

network_plugin/flannel : Flannel | Wait for flannel subnet.env file presence --- 5.23s

system_packages : Manage packages --------------------------------------- 5.04s

container-engine/containerd : Containerd | Unpack containerd archive ---- 4.89s

container-engine/containerd : Download_file | Download item ------------- 4.71s

container-engine/nerdctl : Extract_file | Unpacking archive ------------- 3.37s

upgrade/pre-upgrade : Drain node ---------------------------------------- 3.37s

container-engine/nerdctl : Download_file | Download item ---------------- 3.21s

container-engine/containerd : Containerd | Copy containerd config file --- 2.21s

kubernetes/kubeadm : Restart all kube-proxy pods to ensure that they load the new configmap --- 2.20s

container-engine/runc : Download_file | Download item ------------------- 2.17s

download : Download_file | Download item -------------------------------- 2.09s

download : Download_file | Download item -------------------------------- 2.05s

container-engine/crictl : Download_file | Download item ----------------- 1.90s