This post is part of my 2026 K8S Deploy notes.

Kubespray offline

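By default, Kubespray uses Ansible to download the binaries, container images, CNI images, and other artifacts that a Kubernetes installation needs from external registries. In an air-gapped environment there is no access to external networks, so every dependency has to be collected in advance and mirrored to internal storage.

Kubespray provides an offline deployment method for this purpose.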

https://github.com/kubernetes-sigs/kubespray/tree/master/contrib/offline

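Offline installation breaks down into two phases. The first collects every required file and image in an online environment. The second transfers them into the air-gapped environment and performs the installation through an internal registry and a local file server.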

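After cloning the Kubespray repository above, use the contrib/offline directory to check the list of required images and binaries. Kubespray defines the container images, etcd image, CNI images, DNS images, and so on required for each Kubernetes version in roles/download/defaults/main.yml (the exact location can differ between Kubespray releases). The download role runs from this information and collects all resources in one pass.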

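Container images are pulled by default from external registries such as k8s.gcr.io, registry.k8s.io, and docker.io. In an offline environment they must be mirrored to an internal Harbor or private registry. So first download the images in the online environment with docker pull or ctr image pull, then retag them with the internal registry address using docker tag, and finally upload them to the internal registry with docker push.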
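
The pull, tag, and push sequence can be sketched as a small shell helper. This is only an illustration: `INTERNAL_REGISTRY`, `DRY_RUN`, the helper names, and the two image references are assumptions made for the example, and the helper assumes fully qualified references (registry host included). With `DRY_RUN=1` it only prints the commands it would run.

```shell
#!/usr/bin/env bash
# Sketch: mirror images into an internal registry (all names are illustrative).
INTERNAL_REGISTRY=${INTERNAL_REGISTRY:-harbor.example.local}   # hypothetical host
DRY_RUN=${DRY_RUN:-1}                                          # 1 = print only

# Replace the registry host of a fully qualified image reference.
to_internal_ref() {
  local ref=$1
  echo "${INTERNAL_REGISTRY}/${ref#*/}"
}

# Pull one image, retag it for the internal registry, and push it.
mirror_image() {
  local src=$1 dst cmd
  dst=$(to_internal_ref "$src")
  for cmd in "docker pull $src" "docker tag $src $dst" "docker push $dst"; do
    if [ "$DRY_RUN" = "1" ]; then
      echo "$cmd"
    else
      $cmd || return 1
    fi
  done
}

for image in registry.k8s.io/pause:3.10 docker.io/library/nginx:1.29.4; do
  mirror_image "$image"
done
```

Running with `DRY_RUN=0` on a host that has docker and network access would perform the real transfer; the same loop should work with another CLI if the three command strings are adjusted.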

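Binaries are handled the same way. kubeadm, kubelet, kubectl, containerd, runc, and similar binaries are fetched by default from GitHub releases or official download servers. Download them into /tmp/releases or a designated cache directory, then carry them into the offline environment. In Kubespray, setting download_localhost: true configures the control node to use its local cache.

Kubespray changes for air-gapped environments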

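In an air-gapped environment, a few key settings in the inventory need to change. Set download_run_once: true so that the download work is performed only once, and set download_localhost: true so that the local cache is used instead of external downloads. For the container registry address, change variables such as kube_image_repo, gcr_image_repo, and docker_image_repo to the internal registry address. This makes every image reference the internal registry rather than an external one.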
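
As a rough sketch, the overrides described above could be written into an inventory like this. `REGISTRY_HOST` and `INVENTORY_DIR` are placeholder values for the example (the address reuses the admin node IP and registry port that appear later in this post), and `registry_host` is the helper variable used in Kubespray's sample offline settings; adjust everything to your own inventory.

```shell
#!/usr/bin/env bash
# Sketch: write offline overrides into group_vars (paths and values are assumptions).
REGISTRY_HOST=${REGISTRY_HOST:-192.168.10.10:35000}
INVENTORY_DIR=${INVENTORY_DIR:-$(mktemp -d)}   # use your real inventory path instead

mkdir -p "$INVENTORY_DIR/group_vars/all"
cat > "$INVENTORY_DIR/group_vars/all/offline.yml" <<EOF
# Download once and reuse the local cache
download_run_once: true
download_localhost: true

# Point the image repositories at the internal registry
registry_host: "${REGISTRY_HOST}"
kube_image_repo: "{{ registry_host }}"
gcr_image_repo: "{{ registry_host }}"
docker_image_repo: "{{ registry_host }}"
EOF

cat "$INVENTORY_DIR/group_vars/all/offline.yml"
```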

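Because an HTTP proxy cannot be relied on in this environment, include the Pod CIDR, Service CIDR, and node IP range in the no_proxy setting to prevent communication errors. In particular, traffic between the etcd cluster and kube-apiserver uses internal IPs, so the proxy bypass must be configured explicitly.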
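
A minimal sketch of assembling such a no_proxy value follows. The three CIDRs are example values only (replace them with the ranges your cluster actually uses), and `join_csv` is a helper invented for this illustration. Note that not every client honors CIDR notation in no_proxy, so it may be worth testing with the tools you rely on.

```shell
#!/usr/bin/env bash
# Sketch: build a no_proxy value from cluster ranges (example values, not defaults to trust).
POD_CIDR=${POD_CIDR:-10.233.64.0/18}
SERVICE_CIDR=${SERVICE_CIDR:-10.233.0.0/18}
NODE_CIDR=${NODE_CIDR:-192.168.10.0/24}

# Join all arguments with commas.
join_csv() {
  local IFS=,
  echo "$*"
}

NO_PROXY_VALUE=$(join_csv localhost 127.0.0.1 "$POD_CIDR" "$SERVICE_CIDR" "$NODE_CIDR")
echo "no_proxy: \"$NO_PROXY_VALUE\""
```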

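The actual installation runs the cluster.yml playbook. At this point Kubespray does not attempt external downloads and uses the images and binaries prepared in advance. Check the Ansible log to confirm that the download tasks do not call external URLs. If an attempt to reach an external URL does appear, an inventory setting or the cache path configuration is missing.

Kubespray role separation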
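
One way to check a saved Ansible log for unexpected hosts is sketched below. The function name, the log file name, and the allowed host are assumptions for the example; the log itself could be captured with something like `ansible-playbook ... | tee ansible.log`.

```shell
#!/usr/bin/env bash
# Sketch: list URL hosts in a log that are not on an allow list.
# Usage: list_external_hosts LOGFILE ALLOWED_HOST...
# A host with a port (e.g. 192.168.10.10:35000) must be allowed including the port.
list_external_hosts() {
  local log=$1 hosts allowed
  shift
  # Extract scheme://host[:port] from every URL and keep only the host part.
  hosts=$(grep -oE 'https?://[^/" ]+' "$log" | sed -E 's#^https?://##' | sort -u)
  for allowed in "$@"; do
    hosts=$(printf '%s\n' "$hosts" | grep -vxF "$allowed")
  done
  # Drop the empty line left behind when nothing remains.
  printf '%s\n' "$hosts" | sed '/^$/d'
}

# Example: list_external_hosts ansible.log 192.168.10.10 192.168.10.10:35000
```

An empty result suggests every URL in the log pointed at the allowed internal hosts; any host printed is a candidate for a missing override.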

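Kubespray clearly separates the download role from the installation roles. All image paths are also parameterized as variables, which makes switching to an internal registry straightforward. In an air-gapped environment, not only images but also binaries and Helm chart dependencies must all be collected in advance.

Missing CNI plugin images, a missing CoreDNS image, and mismatched etcd image tags are among the most common causes of failure, so run ansible-playbook --tags download before installing to check that every required resource is present.

Lab environment setup

A Kubespray offline installation is not just an image mirroring job; it is a process of taking full control of every dependency a Kubernetes deployment needs. This is what makes it possible to build consistent, reproducible Kubernetes clusters even in air-gapped environments.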
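
A simple completeness check can complement the download run. The sketch below assumes image tarballs are named the way the images directory in this walkthrough names them (for example docker.io_library_nginx-1.29.4.tar.gz for docker.io/library/nginx:1.29.4); that naming rule is inferred from the file listing, and both function names are made up for the example.

```shell
#!/usr/bin/env bash
# Sketch: report required images that have no tarball in a directory.

# docker.io/library/nginx:1.29.4 -> docker.io_library_nginx-1.29.4.tar.gz
# (inferred convention: "/" becomes "_" and ":" becomes "-")
image_to_tarball() {
  printf '%s.tar.gz\n' "$1" | sed -e 's#/#_#g' -e 's#:#-#g'
}

# Usage: missing_images DIR IMAGE_REF...
missing_images() {
  local dir=$1 ref
  shift
  for ref in "$@"; do
    [ -e "$dir/$(image_to_tarball "$ref")" ] || echo "$ref"
  done
}

# Example: missing_images outputs/images quay.io/calico/node:v3.30.6 quay.io/calico/cni:v3.30.6
```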

git clone https://github.com/kubespray-offline/kubespray-offline

cd kubespray-offline/

root@admin:~/kubespray-offline# source ./config.sh

root@admin:~/kubespray-offline# echo -e "kubespray $KUBESPRAY_VERSION"

echo -e "runc $RUNC_VERSION"

echo -e "containerd $CONTAINERD_VERSION"

echo -e "nerdctl $NERDCTL_VERSION"

echo -e "cni $CNI_VERSION"

echo -e "nginx $NGINX_VERSION"

echo -e "registry $REGISTRY_VERSION"

echo -e "registry_port: $REGISTRY_PORT"

echo -e "Additional container registry hosts: $ADDITIONAL_CONTAINER_REGISTRY_LIST"

echo -e "cpu arch: $IMAGE_ARCH"

kubespray 2.30.0

runc 1.3.4

containerd 2.2.1

nerdctl 2.2.1

cni 1.8.0

nginx 1.29.4

registry 3.0.0

registry_port: 35000

Additional container registry hosts: myregistry.io

cpu arch: arm64

./download-all.sh

...

Directory walk started

Directory walk done - 230 packages

Temporary output repo path: outputs/rpms/local/.repodata/

Pool started (with 5 workers)

Pool finished

create-repo done.

=> Running: ./copy-target-scripts.sh

==> Copy target scripts

Done.

# This took about 20 minutes.

root@admin:~/kubespray-offline# du -sh /root/kubespray-offline/outputs/

3.3G /root/kubespray-offline/outputs/

root@admin:~/kubespray-offline# tree /root/kubespray-offline/outputs/ -L 1

/root/kubespray-offline/outputs/

├── config.sh

├── config.toml

├── containerd.service

├── extract-kubespray.sh

├── files

├── images

├── install-containerd.sh

├── load-push-all-images.sh

├── nginx-default.conf

├── patches

├── playbook

├── pypi

├── pyver.sh

├── rpms

├── setup-all.sh

├── setup-container.sh

├── setup-offline.sh

├── setup-py.sh

├── start-nginx.sh

├── start-registry.sh

└── venv.sh

7 directories, 15 files

The rest of this section walks through the scripts that run at this stage.

config.sh

source ./target-scripts/config.sh

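- Loads config.sh into the current shell context.

- As a result, variables such as KUBESPRAY_VERSION, CONTAINERD_VERSION, and IMAGE_ARCH are available throughout download-all.sh as if they were global variables.

- The script uses source rather than plain execution (./config.sh) so that the variables are shared with the calling shell.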
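
The difference between executing and sourcing can be seen with a throwaway file. This is a self-contained demonstration, not part of kubespray-offline: the temporary directory and the one-line config are created only for the example.

```shell
#!/usr/bin/env bash
# Sketch: executing vs. sourcing a config file.
demo_dir=$(mktemp -d)
cat > "$demo_dir/config.sh" <<'EOF'
KUBESPRAY_VERSION=${KUBESPRAY_VERSION:-2.30.0}
EOF

unset KUBESPRAY_VERSION

# Executing runs the file in a child process; its variables vanish when it exits.
bash "$demo_dir/config.sh"
after_exec=${KUBESPRAY_VERSION:-unset}

# Sourcing runs the file in the current shell, so the variable persists.
. "$demo_dir/config.sh"
after_source=${KUBESPRAY_VERSION:-unset}

echo "after exec:   $after_exec"
echo "after source: $after_source"
```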

docker=${docker:-podman}

#docker=${docker:-docker}

#docker=${docker:-/usr/local/bin/nerdctl}

The Kubespray offline scripts perform the following operations internally, so they need an abstraction over the container CLI.

- Image pull

- Image tag

- Registry push

- Running the registry container
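
The `${docker:-podman}` pattern means "use the value of docker if it is set, otherwise podman". A small sketch of how a script can route every operation through that one variable is shown below; `container_cmd` is a hypothetical helper that only prints the command line, and the image names are examples.

```shell
#!/usr/bin/env bash
# Sketch: one variable selects the container CLI for every operation.
docker=${docker:-podman}

# Print the command line that would run with the selected CLI.
container_cmd() {
  echo "$docker $*"
}

container_cmd pull docker.io/library/registry:3.0.0
container_cmd tag docker.io/library/registry:3.0.0 localhost:35000/library/registry:3.0.0
container_cmd push localhost:35000/library/registry:3.0.0
```

Because the default is applied only when the variable is unset, exporting `docker=/usr/local/bin/nerdctl` before the script runs should switch the CLI without editing the file.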

ansible_in_container=${ansible_in_container:-false}

When true

- Runs an Ansible container image

- No need to install Ansible on the preparation node

- Provides a fully isolated environment

When false

- Uses the local Ansible

KUBESPRAY_VERSION=${KUBESPRAY_VERSION:-2.30.0}

RUNC_VERSION=1.3.4

CONTAINERD_VERSION=2.2.1

NERDCTL_VERSION=2.2.1

CNI_VERSION=1.8.0

These lines pin the versions of Kubespray, runc, containerd, nerdctl, and the CNI plugins.

REGISTRY_PORT=35000

ADDITIONAL_CONTAINER_REGISTRY_LIST="myregistry.io"

These settings let the offline layout work as a chain: external registry → internal registry → pull by the target nodes.

map_arch() {

case "$1" in

x86_64) echo "amd64" ;;

aarch64) echo "arm64" ;;

*) echo "$1" ;;

esac

}

The architecture is mapped as x86_64 -> amd64 and aarch64 -> arm64.

The OS is likewise branched into redhat and debian families.
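
For illustration, the function can be exercised like this. How config.sh actually calls map_arch is not shown in this post, so feeding it `uname -m` is an assumption made for the example.

```shell
#!/usr/bin/env bash
# Sketch: map the kernel architecture name to the name used for images.
map_arch() {
  case "$1" in
    x86_64)  echo "amd64" ;;
    aarch64) echo "arm64" ;;
    *)       echo "$1" ;;    # unknown names pass through unchanged
  esac
}

# Assumption: the host architecture comes from uname -m.
IMAGE_ARCH=$(map_arch "$(uname -m)")
echo "cpu arch: $IMAGE_ARCH"
```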

precheck.sh

#!/bin/bash

source /etc/os-release

source ./config.sh

if [ "$docker" != "podman" ]; then

if ! command -v $docker >/dev/null 2>&1; then

echo "No $docker installed"

exit 1

fi

fi

if [ -e /etc/redhat-release ] && [[ "$VERSION_ID" =~ ^7.* ]]; then

if [ "$(getenforce)" == "Enforcing" ]; then

echo "You must disable SELinux for RHEL7/CentOS7"

exit 1

fi

fi

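This script performs a pre-flight environment check before download-all.sh runs. Its main purpose is to determine quickly whether the host is in a state where the offline bundle can be built.

It loads the OS information and offline settings, verifies that the container CLI is available (the check is skipped when the CLI is podman), and rejects RHEL7 with SELinux Enforcing, so that failures caused by an environment mismatch are caught before the actual download logic starts.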

prepare-pkgs.sh

#!/bin/bash

echo "==> prepare-pkgs.sh"

. /etc/os-release

. ./scripts/common.sh

# Select python version

. ./target-scripts/pyver.sh

# Install required packages

if [ -e /etc/redhat-release ]; then

echo "==> Install required packages"

$sudo dnf check-update

$sudo dnf install -y rsync gcc libffi-devel createrepo git podman || exit 1

case "$VERSION_ID" in

7*)

# RHEL/CentOS 7

echo "FATAL: RHEL/CentOS 7 is not supported anymore."

exit 1

;;

8*)

# RHEL/CentOS 8

if ! command -v repo2module >/dev/null; then

echo "==> Install modulemd-tools"

$sudo dnf install -y https://dl.fedoraproject.org/pub/epel/epel-release-latest-8.noarch.rpm

$sudo dnf copr enable -y frostyx/modulemd-tools-epel

$sudo dnf install -y modulemd-tools

fi

;;

9*)

# RHEL 9

if ! command -v repo2module >/dev/null; then

$sudo dnf install -y modulemd-tools

fi

;;

10*)

# RHEL 10

if ! command -v repo2module >/dev/null; then

$sudo dnf install -y createrepo_c

fi

;;

*)

echo "Unknown version_id: $VERSION_ID"

exit 1

;;

esac

# Install python

$sudo dnf install -y python${PY} python${PY}-pip python${PY}-devel || exit 1

else

$sudo apt update

if [ "$1" == "--upgrade" ]; then

$sudo apt upgrade

fi

$sudo apt -y install lsb-release curl gpg gcc libffi-dev rsync git software-properties-common || exit 1

case "$VERSION_ID" in

20.04)

# Prepare for podman

echo "deb http://download.opensuse.org/repositories/devel:/kubic:/libcontainers:/stable/xUbuntu_${VERSION_ID}/ /" | $sudo tee /etc/apt/sources.list.d/devel:kubic:libcontainers:stable.list

curl -SL https://download.opensuse.org/repositories/devel:kubic:libcontainers:stable/xUbuntu_${VERSION_ID}/Release.key | $sudo apt-key add -

# Prepare for latest python3

sudo add-apt-repository ppa:deadsnakes/ppa -y || exit 1

$sudo apt update

;;

esac

$sudo apt install -y python${PY} python${PY}-venv python${PY}-dev python3-pip python3-selinux podman || exit 1

fi

This step sets up the build environment on the preparation node before the offline bundle is generated; it automatically provisions the minimum runtime environment needed to build the Kubespray offline package.

prepare-py.sh

#!/bin/bash

# Create python3 env

echo "==> prepare-py.sh"

. /etc/os-release

. ./target-scripts/venv.sh

source ./scripts/set-locale.sh

echo "==> Update pip, etc"

pip install -U pip setuptools

#if [ "$(getenforce)" == "Enforcing" ]; then

# pip install -U selinux

#fi

echo "==> Install python packages"

pip install -r requirements.txt

cat target-scripts/venv.sh

#!/bin/bash

source /etc/os-release

# Select python version

source "$(dirname "${BASH_SOURCE[0]}")/pyver.sh"

python3=python${PY}

VENV_DIR=${VENV_DIR:-~/.venv/${PY}}

echo "python3 = $python3"

echo "VENV_DIR = ${VENV_DIR}"

if [ ! -e ${VENV_DIR} ]; then

$python3 -m venv ${VENV_DIR}

fi

source ${VENV_DIR}/bin/activate

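This creates and activates a Python virtual environment, upgrades pip, and installs the Python packages Kubespray requires, which completes the Ansible execution environment used for the offline deployment.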

setup-container.sh

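After the offline bundle has been generated, this script configures the container runtime and local registry on the preparation node and boots the containerd-based runtime responsible for storing and distributing images in the offline environment.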

root@admin:~/kubespray-offline/outputs# ./setup-container.sh

==> Install runc

==> Install nerdctl

nerdctl

containerd-rootless-setuptool.sh

containerd-rootless.sh

==> Install containerd

bin/containerd-stress

bin/containerd

bin/ctr

bin/containerd-shim-runc-v2

==> Start containerd

Created symlink '/etc/systemd/system/multi-user.target.wants/containerd.service' → '/etc/systemd/system/containerd.service'.

==> Install CNI plugins

./

./README.md

./static

./host-device

./ipvlan

./dhcp

./LICENSE

./portmap

./tap

./host-local

./vlan

./loopback

./sbr

./firewall

./bandwidth

./bridge

./vrf

./macvlan

./tuning

./dummy

./ptp

==> Load registry, nginx images

unpacking docker.io/library/registry:2.8.1 (sha256:b1524398e0afcc7ce37d998ee27928c83ffd27e35af8ef93313b052cc0d7062f)...

Loaded image: registry:2.8.1

unpacking docker.io/library/registry:3.0.0 (sha256:496d3637ba811d52d4a615818e36fb3d674cf9f33533e0ddbe92cbe6eb8aba48)...

Loaded image: registry:3.0.0

unpacking docker.io/library/nginx:1.28.0-alpine (sha256:bcb5257f77e1426f09b62cfbd56eee3225c92dbab249ac133da279f1e671dc24)...

Loaded image: nginx:1.28.0-alpine

unpacking docker.io/library/nginx:1.29.4 (sha256:4c333d291372a77f1577e20c985a19012440e17034789195eddfeea1144bcc7a)...

Loaded image: nginx:1.29.4

start-nginx.sh

This starts nginx to serve the offline bundle as an HTTP file server, so that the preparation node acts as a package and image distribution server rather than a simple image store.

[Preparation node]

├─ containerd

├─ local registry (image server)

└─ nginx (file server)

root@admin:~/kubespray-offline/outputs# cp nginx-default.conf nginx-default.bak

root@admin:~/kubespray-offline/outputs# cat << EOF > nginx-default.conf

server {

listen 80;

listen [::]:80;

server_name localhost;

location / {

root /usr/share/nginx/html;

# index index.html index.htm;

autoindex on; # show directory listings

autoindex_exact_size off; # show file sizes in readable KB/MB/GB units

autoindex_localtime on; # show timestamps in the server's local time

}

error_page 500 502 503 504 /50x.html;

location = /50x.html {

root /usr/share/nginx/html;

}

# Force sendfile to off

sendfile off;

}

EOF

root@admin:~/kubespray-offline/outputs# ./start-nginx.sh

===> Stop nginx

nginx

nginx

===> Start nginx

59c972f5feb8cb37612ae63b6c42121d6acfd7761ee6f6f67a690bd4b0838b36

root@admin:~/kubespray-offline/outputs# nerdctl ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

d60af7732b81 docker.io/library/nginx:1.29.4 "/docker-entrypoint.…" 53 seconds ago Up nginx

root@admin:~/kubespray-offline/outputs# ss -tnlp | grep nginx

LISTEN 0 511 0.0.0.0:80 0.0.0.0:* users:(("nginx",pid=19315,fd=6),("nginx",pid=19314,fd=6),("nginx",pid=19313,fd=6),("nginx",pid=19312,fd=6),("nginx",pid=19279,fd=6))

LISTEN 0 511 [::]:80 [::]:* users:(("nginx",pid=19315,fd=7),("nginx",pid=19314,fd=7),("nginx",pid=19313,fd=7),("nginx",pid=19312,fd=7),("nginx",pid=19279,fd=7))

setup-offline.sh

This turns the preparation node into a fully offline package server: external internet repositories are disabled and every package source is forced to the local nginx server.

root@admin:~/kubespray-offline/outputs# dnf repolist

repo id repo name

appstream Rocky Linux 10 - AppStream

baseos Rocky Linux 10 - BaseOS

extras Rocky Linux 10 - Extras

root@admin:~/kubespray-offline/outputs# cat /etc/redhat-release

Rocky Linux release 10.0 (Red Quartz)

root@admin:~/kubespray-offline/outputs# ./setup-offline.sh

/bin/rm: cannot remove '/etc/yum.repos.d/offline.repo': No such file or directory

===> Disable all yumrepositories

===> Setup local yum repository

[offline-repo]

name=Offline repo

baseurl=http://localhost/rpms/local/

enabled=1

gpgcheck=0

===> Setup PyPI mirror

root@admin:~/kubespray-offline/outputs# tree /etc/yum.repos.d/

/etc/yum.repos.d/

├── offline.repo

├── rocky-addons.repo.original

├── rocky-devel.repo.original

├── rocky-extras.repo.original

└── rocky.repo.original

1 directory, 5 files

root@admin:~/kubespray-offline/outputs# cat /etc/yum.repos.d/offline.repo

[offline-repo]

name=Offline repo

baseurl=http://localhost/rpms/local/

enabled=1

gpgcheck=0

root@admin:~/kubespray-offline/outputs# dnf clean all

18 files removed

root@admin:~/kubespray-offline/outputs# dnf repolist

repo id repo name

offline-repo Offline repo

root@admin:~/kubespray-offline/outputs# cat ~/.config/pip/pip.conf

[global]

index = http://localhost/pypi/

index-url = http://localhost/pypi/

trusted-host = localhost

External internet access blocked

↓

DNF → localhost RPM repo

pip → localhost PyPI mirror

Images → localhost registry

Files → localhost nginx

The package sources are now switched so that packages can be downloaded offline.
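
The repository file shown above is small enough to generate by hand. The sketch below writes the same stanza into a scratch directory; `write_offline_repo` and `REPO_DIR` are names invented for the example, and the base URL assumes the nginx file server is reachable at 192.168.10.10 (on the preparation node itself, localhost is used instead, as in the output above).

```shell
#!/usr/bin/env bash
# Sketch: generate an offline dnf repository definition.
# Usage: write_offline_repo TARGET_DIR BASEURL
write_offline_repo() {
  local dir=$1 baseurl=$2
  mkdir -p "$dir"
  cat > "$dir/offline.repo" <<EOF
[offline-repo]
name=Offline repo
baseurl=${baseurl}
enabled=1
gpgcheck=0
EOF
}

REPO_DIR=${REPO_DIR:-$(mktemp -d)}   # a real node would use /etc/yum.repos.d
write_offline_repo "$REPO_DIR" "http://192.168.10.10/rpms/local/"
cat "$REPO_DIR/offline.repo"
```

After placing such a file, `dnf clean all` followed by `dnf repolist` (both shown in the transcript above) is a quick way to confirm that only the offline repository is active.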

setup-py.sh

root@admin:~/kubespray-offline/outputs# ./setup-py.sh

===> Install python, venv, etc

Offli 17 MB/s | 85 kB 00:00

Package python3-3.12.12-3.el10_1.aarch64 is already installed.

Dependencies resolved.

Nothing to do.

Complete!

root@admin:~/kubespray-offline/outputs# source pyver.sh

root@admin:~/kubespray-offline/outputs# echo -e "python_version $python${PY}"

python_version 3.12

root@admin:~/kubespray-offline/outputs# dnf info python3

Last metadata expiration check: 0:00:24 ago on Sun 15 Feb 2026 04:39:52 AM KST.

Installed Packages

Name : python3

Version : 3.12.12

Release : 3.el10_1

Architecture : aarch64

Size : 83 k

Source : python3.12-3.12.12-3.el10_1.src.rpm

Repository : @System

From repo : baseos

Summary : Python 3.12 interpreter

URL : https://www.python.org/

License : Python-2.0.1

Description : Python 3.12 is an

...: accessible, high-level,

...: dynamically typed,

...: interpreted programming

...: language, designed with an

...: emphasis on code

...: readability. It includes an

...: extensive standard library,

...: and has a vast ecosystem of

...: third-party libraries.

...:

...: The python3 package provides

...: the "python3" executable:

...: the reference interpreter

...: for the Python language,

...: version 3. The majority of

...: its standard library is

...: provided in the python3-libs

...: package, which should be

...: installed automatically

...: along with python3. The

...: remaining parts of the

...: Python standard library are

...: broken out into the

...: python3-tkinter and

...: python3-test packages, which

...: may need to be installed

...: separately.

...:

...: Documentation for Python is

...: provided in the python3-docs

...: package.

...:

...: Packages containing

...: additional libraries for

...: Python are generally named

...: with the "python3-" prefix.

- Python 3.12 is installed

- The version matches pyver.sh

- The ARM64 architecture is detected correctly

- The node is ready to create a venv

start-registry.sh

This starts the local container registry on the preparation node, running the private registry server that will store and distribute Kubernetes images in the offline environment.

root@admin:~/kubespray-offline/outputs# ./start-registry.sh

===> Start registry

6f31bb1fa408e6097c55dd69edf211b86f1969b7d510e50b466f1ee10cc8e95f

root@admin:~/kubespray-offline/outputs# source config.sh

root@admin:~/kubespray-offline/outputs# echo -e "registry_port: $REGISTRY_PORT"

registry_port: 35000

root@admin:~/kubespray-offline/outputs# nerdctl ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

6f31bb1fa408 docker.io/library/registry:3.0.0 "/entrypoint.sh /etc…" 10 seconds ago Up registry

59c972f5feb8 docker.io/library/nginx:1.29.4 "/docker-entrypoint.…" 5 minutes ago Up nginx

root@admin:~/kubespray-offline/outputs# ss -tnlp | grep registry

LISTEN 0 4096 *:35000 *:* users:(("registry",pid=19601,fd=3))

LISTEN 0 4096 *:5001 *:* users:(("registry",pid=19601,fd=7))

root@admin:~/kubespray-offline/outputs# tree /var/lib/registry/

/var/lib/registry/

0 directories, 0 files

root@admin:~/kubespray-offline/outputs# curl 192.168.10.10:5001/metrics

# HELP go_gc_duration_seconds A summary of the wall-time pause (stop-the-world) duration in garbage collection cycles.

# TYPE go_gc_duration_seconds summary

go_gc_duration_seconds{quantile="0"} 1.2667e-05

go_gc_duration_seconds{quantile="0.25"} 1.2667e-05

go_gc_duration_seconds{quantile="0.5"} 1.4625e-05

go_gc_duration_seconds{quantile="0.75"} 1.4625e-05

go_gc_duration_seconds{quantile="1"} 1.4625e-05

go_gc_duration_seconds_sum 2.7292e-05

go_gc_duration_seconds_count 2

# HELP go_gc_gogc_percent Heap size target percentage configured by the user, otherwise 100. This value is set by the GOGC environment variable, and the runtime/debug.SetGCPercent function. Sourced from /gc/gogc:percent

# TYPE go_gc_gogc_percent gauge

go_gc_gogc_percent 100

# HELP go_gc_gomemlimit_bytes Go runtime memory limit configured by the user, otherwise math.MaxInt64. This value is set by the GOMEMLIMIT environment variable, and the runtime/debug.SetMemoryLimit function. Sourced from /gc/gomemlimit:bytes

# TYPE go_gc_gomemlimit_bytes gauge

go_gc_gomemlimit_bytes 9.223372036854776e+18

# HELP go_goroutines Number of goroutines that currently exist.

# TYPE go_goroutines gauge

go_goroutines 12

# HELP go_info Information about the Go environment.

# TYPE go_info gauge

go_info{version="go1.23.7"} 1

# HELP go_memstats_alloc_bytes Number of bytes allocated in heap and currently in use. Equals to /memory/classes/heap/objects:bytes.

# TYPE go_memstats_alloc_bytes gauge

go_memstats_alloc_bytes 5.507576e+06

# HELP go_memstats_alloc_bytes_total Total number of bytes allocated in heap until now, even if released already. Equals to /gc/heap/allocs:bytes.

# TYPE go_memstats_alloc_bytes_total counter

go_memstats_alloc_bytes_total 7.101952e+06

# HELP go_memstats_buck_hash_sys_bytes Number of bytes used by the profiling bucket hash table. Equals to /memory/classes/profiling/buckets:bytes.

# TYPE go_memstats_buck_hash_sys_bytes gauge

go_memstats_buck_hash_sys_bytes 1.454268e+06

# HELP go_memstats_frees_total Total number of heap objects frees. Equals to /gc/heap/frees:objects + /gc/heap/tiny/allocs:objects.

# TYPE go_memstats_frees_total counter

go_memstats_frees_total 12305

# HELP go_memstats_gc_sys_bytes Number of bytes used for garbage collection system metadata. Equals to /memory/classes/metadata/other:bytes.

# TYPE go_memstats_gc_sys_bytes gauge

go_memstats_gc_sys_bytes 3.122648e+06

# HELP go_memstats_heap_alloc_bytes Number of heap bytes allocated and currently in use, same as go_memstats_alloc_bytes. Equals to /memory/classes/heap/objects:bytes.

# TYPE go_memstats_heap_alloc_bytes gauge

go_memstats_heap_alloc_bytes 5.507576e+06

# HELP go_memstats_heap_idle_bytes Number of heap bytes waiting to be used. Equals to /memory/classes/heap/released:bytes + /memory/classes/heap/free:bytes.

# TYPE go_memstats_heap_idle_bytes gauge

go_memstats_heap_idle_bytes 4.194304e+06

# HELP go_memstats_heap_inuse_bytes Number of heap bytes that are in use. Equals to /memory/classes/heap/objects:bytes + /memory/classes/heap/unused:bytes

# TYPE go_memstats_heap_inuse_bytes gauge

go_memstats_heap_inuse_bytes 7.634944e+06

# HELP go_memstats_heap_objects Number of currently allocated objects. Equals to /gc/heap/objects:objects.

# TYPE go_memstats_heap_objects gauge

go_memstats_heap_objects 25779

# HELP go_memstats_heap_released_bytes Number of heap bytes released to OS. Equals to /memory/classes/heap/released:bytes.

# TYPE go_memstats_heap_released_bytes gauge

go_memstats_heap_released_bytes 3.735552e+06

# HELP go_memstats_heap_sys_bytes Number of heap bytes obtained from system. Equals to /memory/classes/heap/objects:bytes + /memory/classes/heap/unused:bytes + /memory/classes/heap/released:bytes + /memory/classes/heap/free:bytes.

# TYPE go_memstats_heap_sys_bytes gauge

go_memstats_heap_sys_bytes 1.1829248e+07

# HELP go_memstats_last_gc_time_seconds Number of seconds since 1970 of last garbage collection.

# TYPE go_memstats_last_gc_time_seconds gauge

go_memstats_last_gc_time_seconds 1.771098079710761e+09

# HELP go_memstats_mallocs_total Total number of heap objects allocated, both live and gc-ed. Semantically a counter version for go_memstats_heap_objects gauge. Equals to /gc/heap/allocs:objects + /gc/heap/tiny/allocs:objects.

# TYPE go_memstats_mallocs_total counter

go_memstats_mallocs_total 38084

# HELP go_memstats_mcache_inuse_bytes Number of bytes in use by mcache structures. Equals to /memory/classes/metadata/mcache/inuse:bytes.

# TYPE go_memstats_mcache_inuse_bytes gauge

go_memstats_mcache_inuse_bytes 4800

# HELP go_memstats_mcache_sys_bytes Number of bytes used for mcache structures obtained from system. Equals to /memory/classes/metadata/mcache/inuse:bytes + /memory/classes/metadata/mcache/free:bytes.

# TYPE go_memstats_mcache_sys_bytes gauge

go_memstats_mcache_sys_bytes 15600

# HELP go_memstats_mspan_inuse_bytes Number of bytes in use by mspan structures. Equals to /memory/classes/metadata/mspan/inuse:bytes.

# TYPE go_memstats_mspan_inuse_bytes gauge

go_memstats_mspan_inuse_bytes 136000

# HELP go_memstats_mspan_sys_bytes Number of bytes used for mspan structures obtained from system. Equals to /memory/classes/metadata/mspan/inuse:bytes + /memory/classes/metadata/mspan/free:bytes.

# TYPE go_memstats_mspan_sys_bytes gauge

go_memstats_mspan_sys_bytes 146880

# HELP go_memstats_next_gc_bytes Number of heap bytes when next garbage collection will take place. Equals to /gc/heap/goal:bytes.

# TYPE go_memstats_next_gc_bytes gauge

go_memstats_next_gc_bytes 9.948432e+06

# HELP go_memstats_other_sys_bytes Number of bytes used for other system allocations. Equals to /memory/classes/other:bytes.

# TYPE go_memstats_other_sys_bytes gauge

go_memstats_other_sys_bytes 852932

# HELP go_memstats_stack_inuse_bytes Number of bytes obtained from system for stack allocator in non-CGO environments. Equals to /memory/classes/heap/stacks:bytes.

# TYPE go_memstats_stack_inuse_bytes gauge

go_memstats_stack_inuse_bytes 753664

# HELP go_memstats_stack_sys_bytes Number of bytes obtained from system for stack allocator. Equals to /memory/classes/heap/stacks:bytes + /memory/classes/os-stacks:bytes.

# TYPE go_memstats_stack_sys_bytes gauge

go_memstats_stack_sys_bytes 753664

# HELP go_memstats_sys_bytes Number of bytes obtained from system. Equals to /memory/classes/total:byte.

# TYPE go_memstats_sys_bytes gauge

go_memstats_sys_bytes 1.817524e+07

# HELP go_sched_gomaxprocs_threads The current runtime.GOMAXPROCS setting, or the number of operating system threads that can execute user-level Go code simultaneously. Sourced from /sched/gomaxprocs:threads

# TYPE go_sched_gomaxprocs_threads gauge

go_sched_gomaxprocs_threads 4

# HELP go_threads Number of OS threads created.

# TYPE go_threads gauge

go_threads 7

# HELP process_cpu_seconds_total Total user and system CPU time spent in seconds.

# TYPE process_cpu_seconds_total counter

process_cpu_seconds_total 0.06

# HELP process_max_fds Maximum number of open file descriptors.

# TYPE process_max_fds gauge

process_max_fds 1.073741816e+09

# HELP process_network_receive_bytes_total Number of bytes received by the process over the network.

# TYPE process_network_receive_bytes_total counter

process_network_receive_bytes_total 3.972183348e+09

# HELP process_network_transmit_bytes_total Number of bytes sent by the process over the network.

# TYPE process_network_transmit_bytes_total counter

process_network_transmit_bytes_total 2.3941779e+07

# HELP process_open_fds Number of open file descriptors.

# TYPE process_open_fds gauge

process_open_fds 8

# HELP process_resident_memory_bytes Resident memory size in bytes.

# TYPE process_resident_memory_bytes gauge

process_resident_memory_bytes 4.6338048e+07

# HELP process_start_time_seconds Start time of the process since unix epoch in seconds.

# TYPE process_start_time_seconds gauge

process_start_time_seconds 1.77109807885e+09

# HELP process_virtual_memory_bytes Virtual memory size in bytes.

# TYPE process_virtual_memory_bytes gauge

process_virtual_memory_bytes 1.304838144e+09

# HELP process_virtual_memory_max_bytes Maximum amount of virtual memory available in bytes.

# TYPE process_virtual_memory_max_bytes gauge

process_virtual_memory_max_bytes 1.8446744073709552e+19

# HELP promhttp_metric_handler_requests_in_flight Current number of scrapes being served.

# TYPE promhttp_metric_handler_requests_in_flight gauge

promhttp_metric_handler_requests_in_flight 1

# HELP promhttp_metric_handler_requests_total Total number of scrapes by HTTP status code.

# TYPE promhttp_metric_handler_requests_total counter

promhttp_metric_handler_requests_total{code="200"} 0

promhttp_metric_handler_requests_total{code="500"} 0

promhttp_metric_handler_requests_total{code="503"} 0

# HELP registry_http_in_flight_requests The in-flight HTTP requests

# TYPE registry_http_in_flight_requests gauge

registry_http_in_flight_requests{handler="base"} 0

registry_http_in_flight_requests{handler="blob"} 0

registry_http_in_flight_requests{handler="blob_upload"} 0

registry_http_in_flight_requests{handler="blob_upload_chunk"} 0

registry_http_in_flight_requests{handler="catalog"} 0

registry_http_in_flight_requests{handler="manifest"} 0

registry_http_in_flight_requests{handler="tags"} 0

# HELP registry_proxy_hits_total The number of total proxy request hits

# TYPE registry_proxy_hits_total counter

registry_proxy_hits_total{type="blob"} 0

registry_proxy_hits_total{type="manifest"} 0

# HELP registry_proxy_misses_total The number of total proxy request misses

# TYPE registry_proxy_misses_total counter

registry_proxy_misses_total{type="blob"} 0

registry_proxy_misses_total{type="manifest"} 0

# HELP registry_proxy_pulled_bytes_total The size of total bytes pulled from the upstream

# TYPE registry_proxy_pulled_bytes_total counter

registry_proxy_pulled_bytes_total{type="blob"} 0

registry_proxy_pulled_bytes_total{type="manifest"} 0

# HELP registry_proxy_pushed_bytes_total The size of total bytes pushed to the client

# TYPE registry_proxy_pushed_bytes_total counter

registry_proxy_pushed_bytes_total{type="blob"} 0

registry_proxy_pushed_bytes_total{type="manifest"} 0

# HELP registry_proxy_requests_total The number of total incoming proxy request received

# TYPE registry_proxy_requests_total counter

registry_proxy_requests_total{type="blob"} 0

registry_proxy_requests_total{type="manifest"} 0

# HELP registry_storage_action_seconds The number of seconds that the storage action takes

# TYPE registry_storage_action_seconds histogram

registry_storage_action_seconds_bucket{action="Stat",driver="filesystem",le="0.005"} 4

registry_storage_action_seconds_bucket{action="Stat",driver="filesystem",le="0.01"} 4

registry_storage_action_seconds_bucket{action="Stat",driver="filesystem",le="0.025"} 4

registry_storage_action_seconds_bucket{action="Stat",driver="filesystem",le="0.05"} 4

registry_storage_action_seconds_bucket{action="Stat",driver="filesystem",le="0.1"} 4

registry_storage_action_seconds_bucket{action="Stat",driver="filesystem",le="0.25"} 4

registry_storage_action_seconds_bucket{action="Stat",driver="filesystem",le="0.5"} 4

registry_storage_action_seconds_bucket{action="Stat",driver="filesystem",le="1"} 4

registry_storage_action_seconds_bucket{action="Stat",driver="filesystem",le="2.5"} 4

registry_storage_action_seconds_bucket{action="Stat",driver="filesystem",le="5"} 4

registry_storage_action_seconds_bucket{action="Stat",driver="filesystem",le="10"} 4

registry_storage_action_seconds_bucket{action="Stat",driver="filesystem",le="+Inf"} 4

registry_storage_action_seconds_sum{action="Stat",driver="filesystem"} 0.00037425

registry_storage_action_seconds_count{action="Stat",driver="filesystem"} 4

# HELP registry_storage_cache_errors_total The number of cache request errors

# TYPE registry_storage_cache_errors_total counter

registry_storage_cache_errors_total 0

# HELP registry_storage_cache_hits_total The number of cache request received

# TYPE registry_storage_cache_hits_total counter

registry_storage_cache_hits_total 0

# HELP registry_storage_cache_requests_total The number of cache request received

# TYPE registry_storage_cache_requests_total counter

registry_storage_cache_requests_total 0

root@admin:~/kubespray-offline/outputs# curl 192.168.10.10:5001/debug/pprof/

<html>

<head>

<title>/debug/pprof/</title>

<style>

.profile-name{

display:inline-block;

width:6rem;

}

</style>

</head>

<body>

/debug/pprof/

<br>

<p>Set debug=1 as a query parameter to export in legacy text format</p>

<br>

Types of profiles available:

<table>

<thead><td>Count</td><td>Profile</td></thead>

<tr><td>15</td><td><a href='allocs?debug=1'>allocs</a></td></tr>

<tr><td>0</td><td><a href='block?debug=1'>block</a></td></tr>

<tr><td>0</td><td><a href='cmdline?debug=1'>cmdline</a></td></tr>

<tr><td>9</td><td><a href='goroutine?debug=1'>goroutine</a></td></tr>

<tr><td>15</td><td><a href='heap?debug=1'>heap</a></td></tr>

<tr><td>0</td><td><a href='mutex?debug=1'>mutex</a></td></tr>

<tr><td>0</td><td><a href='profile?debug=1'>profile</a></td></tr>

<tr><td>7</td><td><a href='threadcreate?debug=1'>threadcreate</a></td></tr>

<tr><td>0</td><td><a href='trace?debug=1'>trace</a></td></tr>

</table>

<a href="goroutine?debug=2">full goroutine stack dump</a>

<br>

<p>

Profile Descriptions:

<ul>

<li><div class=profile-name>allocs: </div> A sampling of all past memory allocations</li>

<li><div class=profile-name>block: </div> Stack traces that led to blocking on synchronization primitives</li>

<li><div class=profile-name>cmdline: </div> The command line invocation of the current program</li>

<li><div class=profile-name>goroutine: </div> Stack traces of all current goroutines. Use debug=2 as a query parameter to export in the same format as an unrecovered panic.</li>

<li><div class=profile-name>heap: </div> A sampling of memory allocations of live objects. You can specify the gc GET parameter to run GC before taking the heap sample.</li>

<li><div class=profile-name>mutex: </div> Stack traces of holders of contended mutexes</li>

<li><div class=profile-name>profile: </div> CPU profile. You can specify the duration in the seconds GET parameter. After you get the profile file, use the go tool pprof command to investigate the profile.</li>

<li><div class=profile-name>threadcreate: </div> Stack traces that led to the creation of new OS threads</li>

<li><div class=profile-name>trace: </div> A trace of execution of the current program. You can specify the duration in the seconds GET parameter. After you get the trace file, use the go tool trace command to investigate the trace.</li>

</ul>

</p>

</body>

</html>
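
Besides the debug port, the registry itself can be queried through the registry HTTP API v2 on the main port. The helpers below are a sketch: the function names are made up, the defaults reuse the port from config.sh, and plain HTTP on localhost is an assumption. Only the URL is printed when the block runs; the two curl helpers are defined for manual use.

```shell
#!/usr/bin/env bash
# Sketch: query a registry over the HTTP API v2 (helper names are illustrative).
REGISTRY_HOST=${REGISTRY_HOST:-localhost}
REGISTRY_PORT=${REGISTRY_PORT:-35000}

# Build an API URL for the given path under /v2/.
registry_url() {
  echo "http://${REGISTRY_HOST}:${REGISTRY_PORT}/v2/$1"
}

# List repositories known to the registry.
list_repositories() {
  curl -fsS "$(registry_url _catalog)"
}

# List tags of one repository. Usage: list_tags library/nginx
list_tags() {
  curl -fsS "$(registry_url "$1/tags/list")"
}

echo "catalog endpoint: $(registry_url _catalog)"
```

Since /var/lib/registry is still empty at this point, the catalog would be expected to list no repositories until images are pushed.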

load-push-all-images.sh

root@admin:~/kubespray-offline/outputs# echo -e "cpu arch: $IMAGE_ARCH"

cpu arch: arm64

root@admin:~/kubespray-offline/outputs# echo -e "Additional container registry hosts: $ADDITIONAL_CONTAINER_REGISTRY_LIST"

Additional container registry hosts: myregistry.io

root@admin:~/kubespray-offline/outputs# ls -l images/*.tar.gz

-rw-r--r--. 1 root root 10068768 Feb 15 04:27 images/docker.io_amazon_aws-alb-ingress-controller-v1.1.9.tar.gz

-rw-r--r--. 1 root root 175407403 Feb 15 04:28 images/docker.io_amazon_aws-ebs-csi-driver-v0.5.0.tar.gz

-rw-r--r--. 1 root root 95707259 Feb 15 04:25 images/docker.io_cloudnativelabs_kube-router-v2.1.1.tar.gz

-rw-r--r--. 1 root root 4978225 Feb 15 04:23 images/docker.io_flannel_flannel-cni-plugin-v1.7.1-flannel1.tar.gz

-rw-r--r--. 1 root root 32159259 Feb 15 04:23 images/docker.io_flannel_flannel-v0.27.3.tar.gz

-rw-r--r--. 1 root root 160658472 Feb 15 04:25 images/docker.io_kubeovn_kube-ovn-v1.12.21.tar.gz

-rw-r--r--. 1 root root 73788930 Feb 15 04:29 images/docker.io_kubernetesui_dashboard-v2.7.0.tar.gz

-rw-r--r--. 1 root root 18013963 Feb 15 04:29 images/docker.io_kubernetesui_metrics-scraper-v1.0.8.tar.gz

-rw-r--r--. 1 root root 14991749 Feb 15 04:25 images/docker.io_library_haproxy-3.2.4-alpine.tar.gz

-rw-r--r--. 1 root root 20891493 Feb 15 04:25 images/docker.io_library_nginx-1.28.0-alpine.tar.gz

-rw-r--r--. 1 root root 60177566 Feb 15 04:30 images/docker.io_library_nginx-1.29.4.tar.gz

-rw-r--r--. 1 root root 8986338 Feb 15 04:26 images/docker.io_library_registry-2.8.1.tar.gz

-rw-r--r--. 1 root root 17299815 Feb 15 04:30 images/docker.io_library_registry-3.0.0.tar.gz

-rw-r--r--. 1 root root 2382760 Feb 15 04:20 images/docker.io_mirantis_k8s-netchecker-agent-v1.2.2.tar.gz

-rw-r--r--. 1 root root 31516638 Feb 15 04:20 images/docker.io_mirantis_k8s-netchecker-server-v1.2.2.tar.gz

-rw-r--r--. 1 root root 19547919 Feb 15 04:26 images/docker.io_rancher_local-path-provisioner-v0.0.32.tar.gz

-rw-r--r--. 1 root root 193267884 Feb 15 04:22 images/ghcr.io_k8snetworkplumbingwg_multus-cni-v4.2.2.tar.gz

-rw-r--r--. 1 root root 16276331 Feb 15 04:25 images/ghcr.io_kube-vip_kube-vip-v1.0.3.tar.gz

-rw-r--r--. 1 root root 45260358 Feb 15 04:24 images/quay.io_calico_apiserver-v3.30.6.tar.gz

-rw-r--r--. 1 root root 66394432 Feb 15 04:23 images/quay.io_calico_cni-v3.30.6.tar.gz

-rw-r--r--. 1 root root 48851711 Feb 15 04:24 images/quay.io_calico_kube-controllers-v3.30.6.tar.gz

-rw-r--r--. 1 root root 148461712 Feb 15 04:23 images/quay.io_calico_node-v3.30.6.tar.gz

-rw-r--r--. 1 root root 32631851 Feb 15 04:24 images/quay.io_calico_typha-v3.30.6.tar.gz

-rw-r--r--. 1 root root 24593487 Feb 15 04:22 images/quay.io_cilium_certgen-v0.2.4.tar.gz

-rw-r--r--. 1 root root 64499040 Feb 15 04:22 images/quay.io_cilium_cilium-envoy-v1.34.10-1762597008-ff7ae7d623be00078865cff1b0672cc5d9bfc6d5.tar.gz

-rw-r--r--. 1 root root 229234734 Feb 15 04:21 images/quay.io_cilium_cilium-v1.18.6.tar.gz

-rw-r--r--. 1 root root 24236932 Feb 15 04:21 images/quay.io_cilium_hubble-relay-v1.18.6.tar.gz

-rw-r--r--. 1 root root 18236531 Feb 15 04:22 images/quay.io_cilium_hubble-ui-backend-v0.13.3.tar.gz

-rw-r--r--. 1 root root 11763582 Feb 15 04:22 images/quay.io_cilium_hubble-ui-v0.13.3.tar.gz

-rw-r--r--. 1 root root 85426846 Feb 15 04:21 images/quay.io_cilium_operator-v1.18.6.tar.gz

-rw-r--r--. 1 root root 21212011 Feb 15 04:20 images/quay.io_coreos_etcd-v3.5.26.tar.gz

-rw-r--r--. 1 root root 12131783 Feb 15 04:27 images/quay.io_jetstack_cert-manager-cainjector-v1.15.3.tar.gz

-rw-r--r--. 1 root root 17886338 Feb 15 04:27 images/quay.io_jetstack_cert-manager-controller-v1.15.3.tar.gz

-rw-r--r--. 1 root root 15404676 Feb 15 04:27 images/quay.io_jetstack_cert-manager-webhook-v1.15.3.tar.gz

-rw-r--r--. 1 root root 25544489 Feb 15 04:29 images/quay.io_metallb_controller-v0.13.9.tar.gz

-rw-r--r--. 1 root root 45845034 Feb 15 04:29 images/quay.io_metallb_speaker-v0.13.9.tar.gz

-rw-r--r--. 1 root root 19870574 Feb 15 04:25 images/registry.k8s.io_coredns_coredns-v1.12.1.tar.gz

-rw-r--r--. 1 root root 10145282 Feb 15 04:26 images/registry.k8s.io_cpa_cluster-proportional-autoscaler-v1.8.8.tar.gz

-rw-r--r--. 1 root root 33524672 Feb 15 04:26 images/registry.k8s.io_dns_k8s-dns-node-cache-1.25.0.tar.gz

-rw-r--r--. 1 root root 111593834 Feb 15 04:27 images/registry.k8s.io_ingress-nginx_controller-v1.13.3.tar.gz

-rw-r--r--. 1 root root 23871128 Feb 15 04:29 images/registry.k8s.io_kube-apiserver-v1.34.3.tar.gz

-rw-r--r--. 1 root root 20135843 Feb 15 04:29 images/registry.k8s.io_kube-controller-manager-v1.34.3.tar.gz

-rw-r--r--. 1 root root 22164840 Feb 15 04:29 images/registry.k8s.io_kube-proxy-v1.34.3.tar.gz

-rw-r--r--. 1 root root 15303816 Feb 15 04:29 images/registry.k8s.io_kube-scheduler-v1.34.3.tar.gz

-rw-r--r--. 1 root root 19950690 Feb 15 04:26 images/registry.k8s.io_metrics-server_metrics-server-v0.8.0.tar.gz

-rw-r--r--. 1 root root 253638 Feb 15 04:25 images/registry.k8s.io_pause-3.10.1.tar.gz

-rw-r--r--. 1 root root 21622283 Feb 15 04:28 images/registry.k8s.io_provider-os_cinder-csi-plugin-v1.30.0.tar.gz

-rw-r--r--. 1 root root 24387220 Feb 15 04:27 images/registry.k8s.io_sig-storage_csi-attacher-v4.4.2.tar.gz

-rw-r--r--. 1 root root 8403411 Feb 15 04:28 images/registry.k8s.io_sig-storage_csi-node-driver-registrar-v2.4.0.tar.gz

-rw-r--r--. 1 root root 26201955 Feb 15 04:27 images/registry.k8s.io_sig-storage_csi-provisioner-v3.6.2.tar.gz

-rw-r--r--. 1 root root 24691993 Feb 15 04:28 images/registry.k8s.io_sig-storage_csi-resizer-v1.9.2.tar.gz

-rw-r--r--. 1 root root 24512691 Feb 15 04:27 images/registry.k8s.io_sig-storage_csi-snapshotter-v6.3.2.tar.gz

-rw-r--r--. 1 root root 8838631 Feb 15 04:28 images/registry.k8s.io_sig-storage_livenessprobe-v2.11.0.tar.gz

-rw-r--r--. 1 root root 50488538 Feb 15 04:26 images/registry.k8s.io_sig-storage_local-volume-provisioner-v2.5.0.tar.gz

-rw-r--r--. 1 root root 23609719 Feb 15 04:27 images/registry.k8s.io_sig-storage_snapshot-controller-v7.0.2.tar.gz

vi load-push-all-images.sh

...

load_images() {

for image in $BASEDIR/images/*.tar.gz; do

echo "===> Loading $image"

sudo $NERDCTL load --all-platforms -i $image || exit 1 # added --all-platforms

done

...

./load-push-all-images.sh

...

elapsed: 0.3 s total: 72.4 M (241.4 MiB/s)

root@admin:~/kubespray-offline/outputs# nerdctl images

REPOSITORY TAG IMAGE ID CREATED PLATFORM SIZE BLOB SIZE

localhost:35000/kube-proxy v1.34.3 fa5ed2c96dd3 26 seconds ago linux/arm64 78.05MB 75.94MB

localhost:35000/kube-scheduler v1.34.3 985575f183de 26 seconds ago linux/arm64 53.34MB 51.59MB

localhost:35000/kube-controller-manager v1.34.3 354700b61969 27 seconds ago linux/arm64 74.38MB 72.62MB

localhost:35000/kube-apiserver v1.34.3 dece5cf2dd3b 27 seconds ago linux/arm64 86.56MB 84.81MB

localhost:35000/metallb/controller v0.13.9 b724b69a4c9b 27 seconds ago linux/arm64 63.12MB 63.11MB

localhost:35000/metallb/speaker v0.13.9 51f18d4f5d4d 28 seconds ago linux/arm64 111.1MB 111.1MB

localhost:35000/kubernetesui/metrics-scraper v1.0.8 9115322001e6 28 seconds ago linux/amd64 42.26MB 42.26MB

localhost:35000/kubernetesui/dashboard v2.7.0 c353af0aa3a0 29 seconds ago linux/arm64 256.1MB 247.6MB

localhost:35000/amazon/aws-ebs-csi-driver v0.5.0 ff65db28333e 30 seconds ago linux/amd64 456.9MB 444.1MB

localhost:35000/provider-os/cinder-csi-plugin v1.30.0 f4caa8cc697d 31 seconds ago linux/arm64 63.05MB 61.21MB

localhost:35000/sig-storage/csi-node-driver-registrar v2.4.0 9124c121892e 31 seconds ago linux/arm64 22.41MB 20.25MB

localhost:35000/sig-storage/livenessprobe v2.11.0 1b1ba6eb5c8d 31 seconds ago linux/arm64 22.25MB 20.14MB

localhost:35000/sig-storage/csi-resizer v1.9.2 8f191a7ec9cc 31 seconds ago linux/arm64 64.17MB 62.06MB

localhost:35000/sig-storage/snapshot-controller v7.0.2 f23cbdb6dd3f 32 seconds ago linux/arm64 62.28MB 59.56MB

localhost:35000/sig-storage/csi-snapshotter v6.3.2 2ba4692b39fc 32 seconds ago linux/arm64 63.98MB 61.87MB

localhost:35000/sig-storage/csi-provisioner v3.6.2 b34169e8b528 32 seconds ago linux/arm64 67.74MB 65.63MB

localhost:35000/sig-storage/csi-attacher v4.4.2 2aa9f6446ccd 32 seconds ago linux/arm64 63.48MB 61.37MB

localhost:35000/jetstack/cert-manager-webhook v1.15.3 2d91656807bb 33 seconds ago linux/arm64 58.15MB 56.39MB

localhost:35000/jetstack/cert-manager-cainjector v1.15.3 a13418dc926e 33 seconds ago linux/arm64 44.65MB 42.89MB

localhost:35000/jetstack/cert-manager-controller v1.15.3 5114bfbeac23 33 seconds ago linux/arm64 67.13MB 65.37MB

localhost:35000/amazon/aws-alb-ingress-controller v1.1.9 e88f69a74c30 33 seconds ago linux/arm64 37.9MB 37.91MB

localhost:35000/ingress-nginx/controller v1.13.3 68a587e5104f 34 seconds ago linux/arm64 336.3MB 334.2MB

localhost:35000/rancher/local-path-provisioner v0.0.32 4a3d51575c84 35 seconds ago linux/arm64 61.37MB 61.35MB

localhost:35000/sig-storage/local-volume-provisioner v2.5.0 d158fd9f3579 35 seconds ago linux/arm64 134.7MB 130.4MB

localhost:35000/metrics-server/metrics-server v0.8.0 87ccea7af925 36 seconds ago linux/arm64 82.58MB 80.84MB

localhost:35000/library/registry 2.8.1 b1524398e0af 36 seconds ago linux/arm64 25.85MB 25.68MB

localhost:35000/cpa/cluster-proportional-autoscaler v1.8.8 4146047e636f 36 seconds ago linux/arm64 39.98MB 37.86MB

localhost:35000/dns/k8s-dns-node-cache 1.25.0 7071feee8b70 36 seconds ago linux/arm64 90.54MB 88.43MB

localhost:35000/coredns/coredns v1.12.1 e674cf21adf3 37 seconds ago linux/arm64 74.94MB 73.19MB

localhost:35000/library/haproxy 3.2.4-alpine 71268591d942 37 seconds ago linux/arm64 33.57MB 33.37MB

localhost:35000/library/nginx 1.28.0-alpine bcb5257f77e1 37 seconds ago linux/arm64 52.73MB 51.23MB

localhost:35000/kube-vip/kube-vip v1.0.3 133301efa7cb 38 seconds ago linux/arm64 58.69MB 58.69MB

localhost:35000/pause 3.10.1 3f85f9d8a6bc 38 seconds ago linux/arm64 516.1kB 516.9kB

localhost:35000/cloudnativelabs/kube-router v2.1.1 9b0b03a20f4b 38 seconds ago linux/arm64 216.7MB 216.1MB

localhost:35000/kubeovn/kube-ovn v1.12.21 c93b1a2ecea3 40 seconds ago linux/arm64 513.6MB 501.2MB

localhost:35000/calico/apiserver v3.30.6 b15f366465da 40 seconds ago linux/arm64 113.8MB 113.8MB

localhost:35000/calico/typha v3.30.6 3f421c499d47 40 seconds ago linux/arm64 82.42MB 82.4MB

localhost:35000/calico/kube-controllers v3.30.6 7639e6dca06e 41 seconds ago linux/arm64 117.4MB 117.4MB

localhost:35000/calico/cni v3.30.6 0aa5f9cab8de 41 seconds ago linux/arm64 157.3MB 157.3MB

localhost:35000/calico/node v3.30.6 742471336dda 43 seconds ago linux/arm64 401.5MB 400MB

localhost:35000/flannel/flannel-cni-plugin v1.7.1-flannel1 332db17b4c4a 43 seconds ago linux/arm64 11.39MB 11.37MB

localhost:35000/flannel/flannel v0.27.3 3b36a8d4db19 43 seconds ago linux/arm64 102.6MB 101.5MB

localhost:35000/k8snetworkplumbingwg/multus-cni v4.2.2 db44f5c1fd8e 45 seconds ago linux/arm64 497.5MB 495.3MB

localhost:35000/cilium/cilium-envoy v1.34.10-1762597008-ff7ae7d623be00078865cff1b0672cc5d9bfc6d5 f8eeaa2e6cbe 46 seconds ago linux/arm64 206.8MB 201.9MB

localhost:35000/cilium/hubble-ui-backend v0.13.3 1b0a4d764eaf 46 seconds ago linux/arm64 68.89MB 67.14MB

localhost:35000/cilium/hubble-ui v0.13.3 637e4d5a05ac 46 seconds ago linux/arm64 35.56MB 31.92MB

localhost:35000/cilium/certgen v0.2.4 e3403ce43031 47 seconds ago linux/arm64 58.68MB 58.68MB

localhost:35000/cilium/hubble-relay v1.18.6 5c66f4ecc18e 47 seconds ago linux/arm64 92.61MB 90.86MB

localhost:35000/cilium/operator v1.18.6 7bba55333f30 48 seconds ago linux/arm64 258MB 258MB

localhost:35000/cilium/cilium v1.18.6 88b892a5f89b 52 seconds ago linux/arm64 732.4MB 725.2MB

localhost:35000/coreos/etcd v3.5.26 4b003fe9069c 52 seconds ago linux/arm64 66.06MB 63.34MB

localhost:35000/mirantis/k8s-netchecker-agent v1.2.2 e07c83f8f083 53 seconds ago linux/amd64 5.681MB 5.856MB

localhost:35000/mirantis/k8s-netchecker-server v1.2.2 8e0ef348cf54 53 seconds ago linux/amd64 125.8MB 123.7MB

localhost:35000/library/registry 3.0.0 496d3637ba81 53 seconds ago linux/arm64 57.52MB 57.34MB

localhost:35000/library/nginx 1.29.4 4c333d291372 54 seconds ago linux/arm64 190.7MB 184MB

registry.k8s.io/sig-storage/snapshot-controller v7.0.2 f23cbdb6dd3f 54 seconds ago linux/arm64 62.28MB 59.56MB

registry.k8s.io/sig-storage/local-volume-provisioner v2.5.0 d158fd9f3579 55 seconds ago linux/arm64 134.7MB 130.4MB

registry.k8s.io/sig-storage/livenessprobe v2.11.0 1b1ba6eb5c8d 56 seconds ago linux/arm64 22.25MB 20.14MB

registry.k8s.io/sig-storage/csi-snapshotter v6.3.2 2ba4692b39fc 57 seconds ago linux/arm64 63.98MB 61.87MB

registry.k8s.io/sig-storage/csi-resizer v1.9.2 8f191a7ec9cc 57 seconds ago linux/arm64 64.17MB 62.06MB

registry.k8s.io/sig-storage/csi-provisioner v3.6.2 b34169e8b528 58 seconds ago linux/arm64 67.74MB 65.63MB

registry.k8s.io/sig-storage/csi-node-driver-registrar v2.4.0 9124c121892e 58 seconds ago linux/arm64 22.41MB 20.25MB

registry.k8s.io/sig-storage/csi-attacher v4.4.2 2aa9f6446ccd 59 seconds ago linux/arm64 63.48MB 61.37MB

registry.k8s.io/provider-os/cinder-csi-plugin v1.30.0 f4caa8cc697d 59 seconds ago linux/arm64 63.05MB 61.21MB

registry.k8s.io/pause 3.10.1 3f85f9d8a6bc About a minute ago linux/arm64 516.1kB 516.9kB

registry.k8s.io/metrics-server/metrics-server v0.8.0 87ccea7af925 About a minute ago linux/arm64 82.58MB 80.84MB

registry.k8s.io/kube-scheduler v1.34.3 985575f183de About a minute ago linux/arm64 53.34MB 51.59MB

registry.k8s.io/kube-proxy v1.34.3 fa5ed2c96dd3 About a minute ago linux/arm64 78.05MB 75.94MB

registry.k8s.io/kube-controller-manager v1.34.3 354700b61969 About a minute ago linux/arm64 74.38MB 72.62MB

registry.k8s.io/kube-apiserver v1.34.3 dece5cf2dd3b About a minute ago linux/arm64 86.56MB 84.81MB

registry.k8s.io/ingress-nginx/controller v1.13.3 68a587e5104f About a minute ago linux/arm64 336.3MB 334.2MB

registry.k8s.io/dns/k8s-dns-node-cache 1.25.0 7071feee8b70 About a minute ago linux/arm64 90.54MB 88.43MB

registry.k8s.io/cpa/cluster-proportional-autoscaler v1.8.8 4146047e636f About a minute ago linux/arm64 39.98MB 37.86MB

registry.k8s.io/coredns/coredns v1.12.1 e674cf21adf3 About a minute ago linux/arm64 74.94MB 73.19MB

quay.io/metallb/speaker v0.13.9 51f18d4f5d4d About a minute ago linux/arm64 111.1MB 111.1MB

quay.io/metallb/controller v0.13.9 b724b69a4c9b About a minute ago linux/arm64 63.12MB 63.11MB

quay.io/jetstack/cert-manager-webhook v1.15.3 2d91656807bb About a minute ago linux/arm64 58.15MB 56.39MB

quay.io/jetstack/cert-manager-controller v1.15.3 5114bfbeac23 About a minute ago linux/arm64 67.13MB 65.37MB

quay.io/jetstack/cert-manager-cainjector v1.15.3 a13418dc926e About a minute ago linux/arm64 44.65MB 42.89MB

quay.io/coreos/etcd v3.5.26 4b003fe9069c About a minute ago linux/arm64 66.06MB 63.34MB

quay.io/cilium/operator v1.18.6 7bba55333f30 About a minute ago linux/arm64 258MB 258MB

quay.io/cilium/hubble-ui v0.13.3 637e4d5a05ac About a minute ago linux/arm64 35.56MB 31.92MB

quay.io/cilium/hubble-ui-backend v0.13.3 1b0a4d764eaf About a minute ago linux/arm64 68.89MB 67.14MB

quay.io/cilium/hubble-relay v1.18.6 5c66f4ecc18e About a minute ago linux/arm64 92.61MB 90.86MB

quay.io/cilium/cilium v1.18.6 88b892a5f89b About a minute ago linux/arm64 732.4MB 725.2MB

quay.io/cilium/cilium-envoy v1.34.10-1762597008-ff7ae7d623be00078865cff1b0672cc5d9bfc6d5 f8eeaa2e6cbe About a minute ago linux/arm64 206.8MB 201.9MB

quay.io/cilium/certgen v0.2.4 e3403ce43031 About a minute ago linux/arm64 58.68MB 58.68MB

quay.io/calico/typha v3.30.6 3f421c499d47 About a minute ago linux/arm64 82.42MB 82.4MB

quay.io/calico/node v3.30.6 742471336dda About a minute ago linux/arm64 401.5MB 400MB

quay.io/calico/kube-controllers v3.30.6 7639e6dca06e About a minute ago linux/arm64 117.4MB 117.4MB

quay.io/calico/cni v3.30.6 0aa5f9cab8de About a minute ago linux/arm64 157.3MB 157.3MB

quay.io/calico/apiserver v3.30.6 b15f366465da About a minute ago linux/arm64 113.8MB 113.8MB

ghcr.io/kube-vip/kube-vip v1.0.3 133301efa7cb About a minute ago linux/arm64 58.69MB 58.69MB

ghcr.io/k8snetworkplumbingwg/multus-cni v4.2.2 db44f5c1fd8e About a minute ago linux/arm64 497.5MB 495.3MB

rancher/local-path-provisioner v0.0.32 4a3d51575c84 About a minute ago linux/arm64 61.37MB 61.35MB

mirantis/k8s-netchecker-server v1.2.2 8e0ef348cf54 About a minute ago linux/amd64 125.8MB 123.7MB

mirantis/k8s-netchecker-agent v1.2.2 e07c83f8f083 About a minute ago linux/amd64 5.681MB 5.856MB

haproxy 3.2.4-alpine 71268591d942 About a minute ago linux/arm64 33.57MB 33.37MB

kubernetesui/metrics-scraper v1.0.8 9115322001e6 About a minute ago linux/amd64 42.26MB 42.26MB

kubernetesui/dashboard v2.7.0 c353af0aa3a0 About a minute ago linux/arm64 256.1MB 247.6MB

kubeovn/kube-ovn v1.12.21 c93b1a2ecea3 About a minute ago linux/arm64 513.6MB 501.2MB

flannel/flannel v0.27.3 3b36a8d4db19 About a minute ago linux/arm64 102.6MB 101.5MB

flannel/flannel-cni-plugin v1.7.1-flannel1 332db17b4c4a About a minute ago linux/arm64 11.39MB 11.37MB

cloudnativelabs/kube-router v2.1.1 9b0b03a20f4b About a minute ago linux/arm64 216.7MB 216.1MB

amazon/aws-ebs-csi-driver v0.5.0 ff65db28333e About a minute ago linux/amd64 456.9MB 444.1MB

amazon/aws-alb-ingress-controller v1.1.9 e88f69a74c30 About a minute ago linux/arm64 37.9MB 37.91MB

nginx 1.29.4 4c333d291372 13 minutes ago linux/arm64 190.7MB 184MB

nginx 1.28.0-alpine bcb5257f77e1 13 minutes ago linux/arm64 52.73MB 51.23MB

registry 3.0.0 496d3637ba81 13 minutes ago linux/arm64 57.52MB 57.34MB

registry 2.8.1 b1524398e0af 13 minutes ago linux/arm64 25.85MB 25.68MB
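Judging from the listing above, each image appears twice: once under its upstream name and once retagged for the local registry at localhost:35000, with the upstream registry host removed and Docker Hub official images placed under library/. A minimal sketch of that name mapping follows. `mirror_ref` is a hypothetical helper inferred from the output, not the code in load-push-all-images.sh.

```shell
# Hypothetical helper: map an upstream image reference to the name it
# would carry in the local mirror, following the pattern in the listing.
# Usage: mirror_ref <registry-host:port> <upstream-reference>
mirror_ref() {
  _registry=$1
  _ref=$2
  case "$_ref" in
    */*)
      # A first path component containing "." or ":" (or "localhost")
      # is treated as a registry host and dropped.
      _first=${_ref%%/*}
      case "$_first" in
        *.*|*:*|localhost) _ref=${_ref#*/} ;;
      esac
      ;;
    *)
      # No slash at all: a Docker Hub official image such as nginx.
      _ref="library/$_ref"
      ;;
  esac
  printf '%s/%s\n' "$_registry" "$_ref"
}
```

For example, `mirror_ref localhost:35000 quay.io/calico/node:v3.30.6` prints `localhost:35000/calico/node:v3.30.6`, which matches the corresponding row in the listing.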

Image registry catalog

root@admin:~/kubespray-offline/outputs# curl -s http://localhost:35000/v2/_catalog | jq

{

"repositories": [

"amazon/aws-alb-ingress-controller",

"amazon/aws-ebs-csi-driver",

"calico/apiserver",

"calico/cni",

"calico/kube-controllers",

"calico/node",

"calico/typha",

"cilium/certgen",

"cilium/cilium",

"cilium/cilium-envoy",

"cilium/hubble-relay",

"cilium/hubble-ui",

"cilium/hubble-ui-backend",

"cilium/operator",

"cloudnativelabs/kube-router",

"coredns/coredns",

"coreos/etcd",

"cpa/cluster-proportional-autoscaler",

"dns/k8s-dns-node-cache",

"flannel/flannel",

"flannel/flannel-cni-plugin",

"ingress-nginx/controller",

"jetstack/cert-manager-cainjector",

"jetstack/cert-manager-controller",

"jetstack/cert-manager-webhook",

"k8snetworkplumbingwg/multus-cni",

"kube-apiserver",

"kube-controller-manager",

"kube-proxy",

"kube-scheduler",

"kube-vip/kube-vip",

"kubeovn/kube-ovn",

"kubernetesui/dashboard",

"kubernetesui/metrics-scraper",

"library/haproxy",

"library/nginx",

"library/registry",

"metallb/controller",

"metallb/speaker",

"metrics-server/metrics-server",

"mirantis/k8s-netchecker-agent",

"mirantis/k8s-netchecker-server",

"pause",

"provider-os/cinder-csi-plugin",

"rancher/local-path-provisioner",

"sig-storage/csi-attacher",

"sig-storage/csi-node-driver-registrar",

"sig-storage/csi-provisioner",

"sig-storage/csi-resizer",

"sig-storage/csi-snapshotter",

"sig-storage/livenessprobe",

"sig-storage/local-volume-provisioner",

"sig-storage/snapshot-controller"

]

}

kube-apiserver

root@admin:~/kubespray-offline/outputs# curl -s http://localhost:35000/v2/kube-apiserver/tags/list | jq

{

"name": "kube-apiserver",

"tags": [

"v1.34.3"

]

}

Image manifest

root@admin:~/kubespray-offline/outputs# curl -s http://localhost:35000/v2/kube-apiserver/manifests/v1.34.3 | jq

{

"schemaVersion": 2,

"mediaType": "application/vnd.docker.distribution.manifest.v2+json",

"config": {

"mediaType": "application/vnd.docker.container.image.v1+json",

"digest": "sha256:cf65ae6c8f700cc27f57b7305c6e2b71276a7eed943c559a0091e1e667169896",

"size": 2906

},

"layers": [

{

"mediaType": "application/vnd.docker.image.rootfs.diff.tar",

"digest": "sha256:378b3db0974f7a5a8767b6329ad310983bc712d0e400ff5faa294f95f869cc8c",

"size": 327680

},

{

"mediaType": "application/vnd.docker.image.rootfs.diff.tar",

"digest": "sha256:8fa10c0194df9b7c054c90dbe482585f768a54428fc90a5b78a0066a123b1bba",

"size": 40960

},

{

"mediaType": "application/vnd.docker.image.rootfs.diff.tar",

"digest": "sha256:4840c7c54023c867f19564429c89ddae4e9589c83dce82492183a7e9f7dab1fa",

"size": 2406400

},

{

"mediaType": "application/vnd.docker.image.rootfs.diff.tar",

"digest": "sha256:114dde0fefebbca13165d0da9c500a66190e497a82a53dcaabc3172d630be1e9",

"size": 102400

},

{

"mediaType": "application/vnd.docker.image.rootfs.diff.tar",

"digest": "sha256:4d049f83d9cf21d1f5cc0e11deaf36df02790d0e60c1a3829538fb4b61685368",

"size": 1536

},

{

"mediaType": "application/vnd.docker.image.rootfs.diff.tar",

"digest": "sha256:af5aa97ebe6ce1604747ec1e21af7136ded391bcabe4acef882e718a87c86bcc",

"size": 2560

},

{

"mediaType": "application/vnd.docker.image.rootfs.diff.tar",

"digest": "sha256:6f1cdceb6a3146f0ccb986521156bef8a422cdbb0863396f7f751f575ba308f4",

"size": 2560

},

{

"mediaType": "application/vnd.docker.image.rootfs.diff.tar",

"digest": "sha256:bbb6cacb8c82e4da4e8143e03351e939eab5e21ce0ef333c42e637af86c5217b",

"size": 2560

},

{

"mediaType": "application/vnd.docker.image.rootfs.diff.tar",

"digest": "sha256:2a92d6ac9e4fcc274d5168b217ca4458a9fec6f094ead68d99c77073f08caac1",

"size": 1536

},

{

"mediaType": "application/vnd.docker.image.rootfs.diff.tar",

"digest": "sha256:1a73b54f556b477f0a8b939d13c504a3b4f4db71f7a09c63afbc10acb3de5849",

"size": 10240

},

{

"mediaType": "application/vnd.docker.image.rootfs.diff.tar",

"digest": "sha256:f4aee9e53c42a22ed82451218c3ea03d1eea8d6ca8fbe8eb4e950304ba8a8bb3",

"size": 3072

},

{

"mediaType": "application/vnd.docker.image.rootfs.diff.tar",

"digest": "sha256:bfe9137a1b044e8097cdfcb6899137a8a984ed70931ed1e8ef0cf7e023a139fc",

"size": 241664

},

{

"mediaType": "application/vnd.docker.image.rootfs.diff.tar",

"digest": "sha256:a40e7263c9df7d0a1f68b0f447449f41f88f6982e9f148d8b9d9b0c22ee7fc26",

"size": 1837056

},

{

"mediaType": "application/vnd.docker.image.rootfs.diff.tar",

"digest": "sha256:f6a99f5dc9ec493ccd88f616c8917a4f3bc01f68f75e3e78e232d6071d36b51f",

"size": 79827968

}

]

}

http://192.168.10.10:35000/v2/_catalog

Querying the Docker Registry HTTP API endpoint running on the preparation node shows which images it currently stores.

{

"repositories": [

"amazon/aws-alb-ingress-controller",

"amazon/aws-ebs-csi-driver",

"calico/apiserver",

"calico/cni",

"calico/kube-controllers",

"calico/node",

"calico/typha",

"cilium/certgen",

"cilium/cilium",

"cilium/cilium-envoy",

"cilium/hubble-relay",

"cilium/hubble-ui",

"cilium/hubble-ui-backend",

"cilium/operator",

"cloudnativelabs/kube-router",

"coredns/coredns",

"coreos/etcd",

"cpa/cluster-proportional-autoscaler",

"dns/k8s-dns-node-cache",

"flannel/flannel",

"flannel/flannel-cni-plugin",

"ingress-nginx/controller",

"jetstack/cert-manager-cainjector",

"jetstack/cert-manager-controller",

"jetstack/cert-manager-webhook",

"k8snetworkplumbingwg/multus-cni",

"kube-apiserver",

"kube-controller-manager",

"kube-proxy",

"kube-scheduler",

"kube-vip/kube-vip",

"kubeovn/kube-ovn",

"kubernetesui/dashboard",

"kubernetesui/metrics-scraper",

"library/haproxy",

"library/nginx",

"library/registry",

"metallb/controller",

"metallb/speaker",

"metrics-server/metrics-server",

"mirantis/k8s-netchecker-agent",

"mirantis/k8s-netchecker-server",

"pause",

"provider-os/cinder-csi-plugin",

"rancher/local-path-provisioner",

"sig-storage/csi-attacher",

"sig-storage/csi-node-driver-registrar",

"sig-storage/csi-provisioner",

"sig-storage/csi-resizer",

"sig-storage/csi-snapshotter",

"sig-storage/livenessprobe",

"sig-storage/local-volume-provisioner",

"sig-storage/snapshot-controller"

]

}
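Before starting the deployment, it can help to confirm that the repositories a given configuration needs are actually in the catalog. The sketch below assumes the catalog response has been saved to a file. `missing_repos` is a hypothetical helper, and it matches quoted names with grep instead of parsing the JSON, so it also runs on hosts without jq.

```shell
# Hypothetical helper: report required repositories that are absent from
# a saved /v2/_catalog response.
# Usage: missing_repos <catalog-file> <repo> [<repo> ...]
# Returns non-zero if at least one repository is missing.
missing_repos() {
  _catalog=$1
  shift
  _rc=0
  for _repo in "$@"; do
    # Repository names appear as quoted JSON strings, so match the
    # name together with its surrounding double quotes.
    if ! grep -Fq "\"$_repo\"" "$_catalog"; then
      echo "missing: $_repo"
      _rc=1
    fi
  done
  return "$_rc"
}
```

A possible use is to save the response with `curl -s http://192.168.10.10:35000/v2/_catalog > catalog.json` and then run `missing_repos catalog.json kube-apiserver pause flannel/flannel`.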

extract-kubespray.sh

root@admin:~/kubespray-offline/outputs# ./extract-kubespray.sh

..

/

kubespray-2.30.0/tests/testcases/roles/cluster-dump/

kubespray-2.30.0/tests/testcases/roles/cluster-dump/tasks/

kubespray-2.30.0/tests/testcases/roles/cluster-dump/tasks/main.yml

kubespray-2.30.0/tests/testcases/tests.yml

kubespray-2.30.0/upgrade-cluster.yml

kubespray-2.30.0/upgrade_cluster.yml

root@admin:~/kubespray-offline/outputs# tree kubespray-2.30.0/ -L 1

kubespray-2.30.0/

├── ansible.cfg

├── CHANGELOG.md

├── cluster.yml

├── CNAME

├── code-of-conduct.md

├── _config.yml

├── contrib

├── CONTRIBUTING.md

├── Dockerfile

├── docs

├── extra_playbooks

├── galaxy.yml

├── index.html

├── inventory

├── library

├── LICENSE

├── logo

├── meta

├── OWNERS

├── OWNERS_ALIASES

├── pipeline.Dockerfile

├── playbooks

├── plugins

├── README.md

├── recover-control-plane.yml

├── RELEASE.md

├── remove-node.yml

├── remove_node.yml

├── requirements.txt

├── reset.yml

├── roles

├── scale.yml

├── scripts

├── SECURITY_CONTACTS

├── test-infra

├── tests

├── upgrade-cluster.yml

├── upgrade_cluster.yml

└── Vagrantfile

14 directories, 26 files

Installing Kubespray

root@admin:~/kubespray-offline/outputs# python --version

Python 3.12.12

root@admin:~/kubespray-offline/outputs# python3.12 -m venv ~/.venv/3.12

root@admin:~/kubespray-offline/outputs# source ~/.venv/3.12/bin/activate

((3.12) ) root@admin:~/kubespray-offline/outputs# which ansible

/root/.venv/3.12/bin/ansible

((3.12) ) root@admin:~/kubespray-offline/outputs# cd /root/kubespray-offline/outputs/kubespray-2.30.0

# Copy the inventory files

root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# cp ../../offline.yml .

cp -r inventory/sample inventory/mycluster

((3.12) )

root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# tree inventory/mycluster/

inventory/mycluster/

├── group_vars

│ ├── all

│ │ ├── all.yml

│ │ ├── aws.yml

│ │ ├── azure.yml

│ │ ├── containerd.yml

│ │ ├── coreos.yml

│ │ ├── cri-o.yml

│ │ ├── docker.yml

│ │ ├── etcd.yml

│ │ ├── gcp.yml

│ │ ├── hcloud.yml

│ │ ├── huaweicloud.yml

│ │ ├── oci.yml

│ │ ├── offline.yml

│ │ ├── openstack.yml

│ │ ├── upcloud.yml

│ │ └── vsphere.yml

│ └── k8s_cluster

│ ├── addons.yml

│ ├── k8s-cluster.yml

│ ├── k8s-net-calico.yml

│ ├── k8s-net-cilium.yml

│ ├── k8s-net-custom-cni.yml

│ ├── k8s-net-flannel.yml

│ ├── k8s-net-kube-ovn.yml

│ ├── k8s-net-kube-router.yml

│ ├── k8s-net-macvlan.yml

│ └── kube_control_plane.yml

└── inventory.ini

4 directories, 27 files

((3.12) )

# Update the image registry and web server host

root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# sed -i "s/YOUR_HOST/192.168.10.10/g" offline.yml

((3.12) ) root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# cat offline.yml | grep 192.168.10.10

http_server: "http://192.168.10.10"

registry_host: "192.168.10.10:35000"

((3.12) )root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# \cp -f offline.yml inventory/mycluster/group_vars/all/offline.yml

((3.12) )

cat <<EOF > inventory/mycluster/inventory.ini

[kube_control_plane]

k8s-node1 ansible_host=192.168.10.11 ip=192.168.10.11 etcd_member_name=etcd1

[etcd:children]

kube_control_plane

[kube_node]

k8s-node2 ansible_host=192.168.10.12 ip=192.168.10.12

EOF

root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# ansible -i inventory/mycluster/inventory.ini all -m ping

[WARNING]: Platform linux on host k8s-node2 is using the discovered Python

interpreter at /usr/bin/python3.12, but future installation of another Python

interpreter could change the meaning of that path. See

https://docs.ansible.com/ansible-

core/2.17/reference_appendices/interpreter_discovery.html for more information.

k8s-node2 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/bin/python3.12"

},

"changed": false,

"ping": "pong"

}

[WARNING]: Platform linux on host k8s-node1 is using the discovered Python

interpreter at /usr/bin/python3.12, but future installation of another Python

interpreter could change the meaning of that path. See

https://docs.ansible.com/ansible-

core/2.17/reference_appendices/interpreter_discovery.html for more information.

k8s-node1 | SUCCESS => {

"ansible_facts": {

"discovered_interpreter_python": "/usr/bin/python3.12"

},

"changed": false,

"ping": "pong"

}

((3.12) )root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# mkdir offline-repo

((3.12) ) root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# cp -r ../playbook/ offline-repo/

((3.12) ) root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# tree offline-repo/

offline-repo/

└── playbook

├── offline-repo.yml

└── roles

└── offline-repo

├── defaults

│ └── main.yml

├── files

│ └── 99offline

└── tasks

├── Debian.yml

├── main.yml

└── RedHat.yml

7 directories, 6 files

((3.12) )root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# ansible-playbook -i inventory/mycluster/inventory.ini offline-repo/playbook/offline-repo.yml

PLAY [all] *********************************************************************

Sunday 15 February 2026 04:54:08 +0900 (0:00:00.011) 0:00:00.011 *******

TASK [Gathering Facts] *********************************************************

ok: [k8s-node2]

ok: [k8s-node1]

Sunday 15 February 2026 04:54:09 +0900 (0:00:00.997) 0:00:01.008 *******

TASK [offline-repo : include_tasks] ********************************************

included: /root/kubespray-offline/outputs/kubespray-2.30.0/offline-repo/playbook/roles/offline-repo/tasks/RedHat.yml for k8s-node2, k8s-node1

Sunday 15 February 2026 04:54:09 +0900 (0:00:00.021) 0:00:01.030 *******

TASK [offline-repo : Install offline yum repo] *********************************

changed: [k8s-node2]

changed: [k8s-node1]

PLAY RECAP *********************************************************************

k8s-node1 : ok=3 changed=1 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

k8s-node2 : ok=3 changed=1 unreachable=0 failed=0 skipped=0 rescued=0 ignored=0

Sunday 15 February 2026 04:54:09 +0900 (0:00:00.127) 0:00:01.157 *******

===============================================================================

Gathering Facts --------------------------------------------------------- 1.00s

offline-repo : Install offline yum repo --------------------------------- 0.13s

offline-repo : include_tasks -------------------------------------------- 0.02s

((3.12) )

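Removing the existing external repos from the offline nodes

In an offline environment, if the stock Rocky Linux repositories remain enabled, Kubespray tries to reach external URLs while it runs, and with internet access blocked the installation fails. The existing repo files are therefore disabled on every node.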

root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# for i in rocky-addons rocky-devel rocky-extras rocky; do

ssh k8s-node1 "mv /etc/yum.repos.d/$i.repo /etc/yum.repos.d/$i.repo.original"

ssh k8s-node2 "mv /etc/yum.repos.d/$i.repo /etc/yum.repos.d/$i.repo.original"

done

((3.12) ) root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# ssh k8s-node1 tree /etc/yum.repos.d/

/etc/yum.repos.d/

├── offline.repo

├── rocky-addons.repo.original

├── rocky-devel.repo.original

├── rocky-extras.repo.original

└── rocky.repo.original

1 directory, 5 files

((3.12) ) root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# ssh k8s-node1 dnf repolist

repo id repo name

offline-repo Offline repo for kubespray

((3.12) ) root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# ssh k8s-node2 tree /etc/yum.repos.d/

/etc/yum.repos.d/

├── offline.repo

├── rocky-addons.repo.original

├── rocky-devel.repo.original

├── rocky-extras.repo.original

└── rocky.repo.original

1 directory, 5 files

((3.12) ) root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# ssh k8s-node2 dnf repolist

repo id repo name

offline-repo Offline repo for kubespray

((3.12) )
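The same check can be scripted so that a leftover external repo is caught before the playbook runs. This is a minimal sketch, assuming the two-column `dnf repolist` output shown above; `only_offline_repo` is a hypothetical helper name.

```shell
# Hypothetical helper: read `dnf repolist` output on stdin and succeed
# only if exactly one repo is listed and its id is offline-repo.
only_offline_repo() {
  awk '
    $1 == "repo" && $2 == "id" { next }   # skip the header row
    NF > 0 {
      n++
      if ($1 != "offline-repo") bad = 1
    }
    END { exit !(n == 1 && !bad) }
  '
}
```

For instance, `ssh k8s-node1 dnf repolist | only_offline_repo || echo "k8s-node1 still has external repos"` prints the warning only when something other than offline-repo is enabled, or when no repo is listed at all.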

Adjusting the Kubespray group vars for the offline environment

echo "kubectl_localhost: true" >> inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml # also download the kubectl binary into the bin directory of the local machine that runs the deployment

sed -i 's|kube_owner: kube|kube_owner: root|g' inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

sed -i 's|kube_network_plugin: calico|kube_network_plugin: flannel|g' inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

sed -i 's|kube_proxy_mode: ipvs|kube_proxy_mode: iptables|g' inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

sed -i 's|enable_nodelocaldns: true|enable_nodelocaldns: false|g' inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

grep -iE 'kube_owner|kube_network_plugin:|kube_proxy_mode|enable_nodelocaldns:' inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

echo "enable_dns_autoscaler: false" >> inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml

echo "flannel_interface: enp0s9" >> inventory/mycluster/group_vars/k8s_cluster/k8s-net-flannel.yml

grep "^[^#]" inventory/mycluster/group_vars/k8s_cluster/k8s-net-flannel.yml

sed -i 's|helm_enabled: false|helm_enabled: true|g' inventory/mycluster/group_vars/k8s_cluster/addons.yml

sed -i 's|metrics_server_enabled: false|metrics_server_enabled: true|g' inventory/mycluster/group_vars/k8s_cluster/addons.yml

grep -iE 'metrics_server_enabled:' inventory/mycluster/group_vars/k8s_cluster/addons.yml

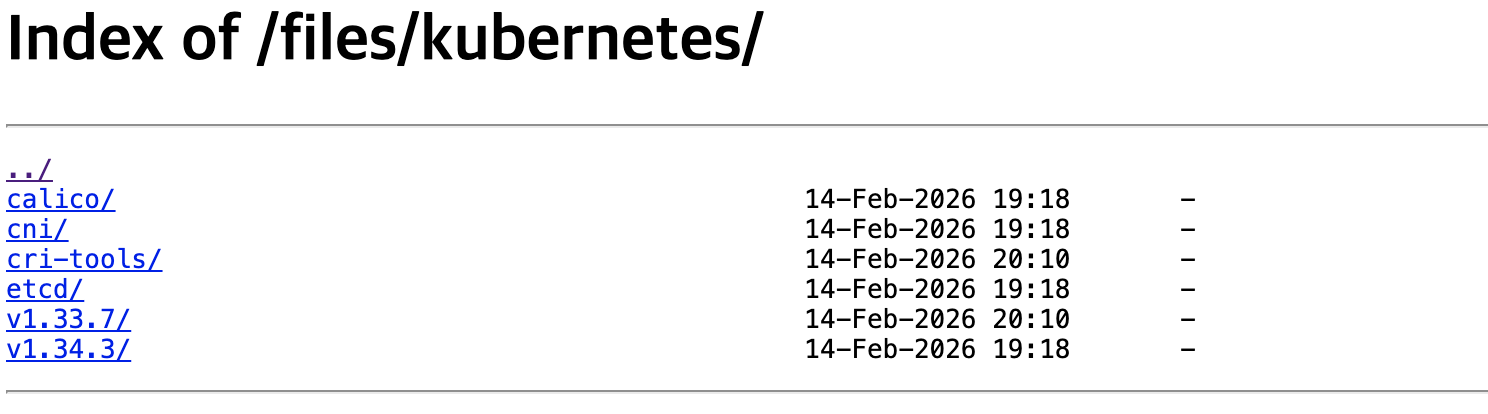
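The `sed -i` substitutions above do nothing, and report nothing, when the default value in the file differs from the one being matched, and repeating an `echo ... >>` appends a duplicate key. A minimal sketch of an idempotent alternative follows. `set_yaml_var` is a hypothetical helper, not something Kubespray ships. It assumes GNU `sed`, simple top-level `key: value` lines, and values that contain neither `|` nor `&`.

```shell
#!/usr/bin/env bash
# set_yaml_var FILE KEY VALUE  (hypothetical helper)
# Replaces an existing uncommented top-level "KEY: ..." line,
# or appends "KEY: VALUE" when no such line exists.
set_yaml_var() {
  local file="$1" key="$2" value="$3"
  if grep -qE "^${key}:" "$file"; then
    sed -i "s|^${key}:.*|${key}: ${value}|" "$file"
  else
    printf '%s: %s\n' "$key" "$value" >> "$file"
  fi
}

# Usage, mirroring the edits above:
#   f=inventory/mycluster/group_vars/k8s_cluster/k8s-cluster.yml
#   set_yaml_var "$f" kube_network_plugin flannel
#   set_yaml_var "$f" enable_dns_autoscaler false
```

Running the same call twice leaves a single line for the key, so the `grep` checks stay meaningful after a re-run.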

echo "metrics_server_requests_cpu: 25m" >> inventory/mycluster/group_vars/k8s_cluster/addons.yml

echo "metrics_server_requests_memory: 16Mi" >> inventory/mycluster/group_vars/k8s_cluster/addons.yml

sed -i 's/amd64/arm64/g' inventory/mycluster/group_vars/all/offline.yml

ansible-playbook -i inventory/mycluster/inventory.ini -v cluster.yml -e kube_version="1.34.3"

...

===============================================================================

kubernetes/kubeadm : Join to cluster if needed ------------------------- 20.97s

etcd : Restart etcd ----------------------------------------------------- 8.19s

kubernetes/control-plane : Kubeadm | Initialize first control plane node (1st try) --- 5.86s

etcd : Configure | Check if etcd cluster is healthy --------------------- 5.15s

network_plugin/flannel : Flannel | Wait for flannel subnet.env file presence --- 5.15s

etcd : Configure | Ensure etcd is running ------------------------------- 2.95s

download : Download_file | Download item -------------------------------- 2.19s

container-engine/containerd : Containerd | Unpack containerd archive ---- 2.06s

network_plugin/cni : CNI | Copy cni plugins ----------------------------- 2.03s

container-engine/validate-container-engine : Populate service facts ----- 1.96s

container-engine/containerd : Download_file | Download item ------------- 1.89s

kubernetes-apps/metrics_server : Metrics Server | Create manifests ------ 1.84s

container-engine/crictl : Download_file | Download item ----------------- 1.74s

container-engine/crictl : Extract_file | Unpacking archive -------------- 1.71s

download : Download_container | Download image if required -------------- 1.67s

container-engine/nerdctl : Download_file | Download item ---------------- 1.64s

kubernetes/control-plane : Control plane | wait for kube-scheduler ------ 1.63s

container-engine/nerdctl : Extract_file | Unpacking archive ------------- 1.62s

etcdctl_etcdutl : Extract_file | Unpacking archive ---------------------- 1.61s

container-engine/runc : Download_file | Download item ------------------- 1.56s

((3.12) ) root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# ssh k8s-node2 cat /etc/NetworkManager/conf.d/dns.conf

[global-dns-domain-*]

servers = 10.233.0.3,168.126.63.1,168.126.63.2

[global-dns]

searches = default.svc.cluster.local,svc.cluster.local

options = ndots:2,timeout:2,attempts:2

((3.12) ) root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# ssh k8s-node2 cat /etc/resolv.conf

# Generated by NetworkManager

search default.svc.cluster.local svc.cluster.local

nameserver 10.233.0.3

nameserver 168.126.63.1

nameserver 168.126.63.2

options ndots:2 timeout:2 attempts:2

((3.12) ) root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# ssh k8s-node2 cat /etc/NetworkManager/conf.d/k8s.conf

[keyfile]

unmanaged-devices+=interface-name:kube-ipvs0;interface-name:nodelocaldns


Setting up kubectl access

root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# cp inventory/mycluster/artifacts/kubectl /usr/local/bin/

kubectl version --client=true

Client Version: v1.34.3

Kustomize Version: v5.7.1

((3.12) ) root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# mkdir /root/.kube

scp k8s-node1:/root/.kube/config /root/.kube/

sed -i 's/127.0.0.1/192.168.10.11/g' /root/.kube/config

con 100% 5649 7.7MB/s 00:00
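The `sed` call above replaces every occurrence of `127.0.0.1` in the kubeconfig, and its unescaped dots match any character. A narrower variant is sketched below. `point_kubeconfig_at` is a hypothetical helper. It assumes GNU `sed` and that the copied kubeconfig holds a line of the form `server: https://127.0.0.1:<port>`.

```shell
#!/usr/bin/env bash
# point_kubeconfig_at FILE HOST  (hypothetical helper)
# Rewrites only "server: https://127.0.0.1[:PORT]" lines and keeps the port.
point_kubeconfig_at() {
  local file="$1" host="$2"
  sed -i -E \
    "s|^([[:space:]]*server:[[:space:]]*https://)127\.0\.0\.1(:[0-9]+)?[[:space:]]*\$|\1${host}\2|" \
    "$file"
}

# Usage, with the address used in this post:
#   point_kubeconfig_at /root/.kube/config 192.168.10.11
```

Lines that only happen to contain the loopback address elsewhere are left untouched.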

((3.12) ) root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# source <(kubectl completion bash)

alias k=kubectl

complete -F __start_kubectl k

echo 'source <(kubectl completion bash)' >> /etc/profile

echo 'alias k=kubectl' >> /etc/profile

echo 'complete -F __start_kubectl k' >> /etc/profile

((3.12) ) root@admin:~/kubespray-offline/outputs/kubespray-2.30.0# kubectl get deploy,sts,ds -n kube-system -owide

NAME READY UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

deployment.apps/coredns 2/2 2 2 3m26s coredns 192.168.10.10:35000/coredns/coredns:v1.12.1 k8s-app=kube-dns

deployment.apps/metrics-server 1/1 1 1 3m18s metrics-server 192.168.10.10:35000/metrics-server/metrics-server:v0.8.0 app.kubernetes.io/name=metrics-server,version=0.8.0

NAME DESIRED CURRENT READY UP-TO-DATE AVAILABLE NODE SELECTOR AGE CONTAINERS IMAGES SELECTOR

daemonset.apps/kube-flannel 0 0 0 0 0 <none> 3m35s kube-flannel 192.168.10.10:35000/flannel/flannel:v0.27.3 app=flannel

daemonset.apps/kube-flannel-ds-arm 0 0 0 0 0 <none> 3m35s kube-flannel 192.168.10.10:35000/flannel/flannel:v0.27.3 app=flannel

daemonset.apps/kube-flannel-ds-arm64 2 2 2 2 2 <none> 3m35s kube-flannel 192.168.10.10:35000/flannel/flannel:v0.27.3 app=flannel

daemonset.apps/kube-flannel-ds-ppc64le 0 0 0 0 0 <none> 3m35s kube-flannel 192.168.10.10:35000/flannel/flannel:v0.27.3 app=flannel

daemonset.apps/kube-flannel-ds-s390x 0 0 0 0 0 <none> 3m35s kube-flannel 192.168.10.10:35000/flannel/flannel:v0.27.3 app=flannel

daemonset.apps/kube-proxy 2 2 2 2 2 kubernetes.io/os=linux 4m11s kube-proxy 192.168.10.10:35000/kube-proxy:v1.34.3 k8s-app=kube-proxy
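Every image in the listing above is pulled from the internal registry at `192.168.10.10:35000`. Rather than reading the column by eye, the check can be sketched as a small filter. `images_outside_registry` is a hypothetical helper that expects one image reference per line on standard input.

```shell
#!/usr/bin/env bash
# images_outside_registry PREFIX  (hypothetical helper)
# Prints every image reference from stdin that does not start with PREFIX.
# Exit status is 0 when nothing was printed, 1 otherwise.
images_outside_registry() {
  local prefix="$1"
  awk -v p="$prefix" '
    NF == 0 { next }                         # ignore blank lines
    index($0, p) != 1 { print; bad = 1 }     # prefix must sit at column 1
    END { exit bad + 0 }
  '
}

# Usage against a running cluster (one image per line via jsonpath):
#   kubectl get pods -A \
#     -o jsonpath='{range .items[*]}{range .spec.containers[*]}{.image}{"\n"}{end}{end}' \
#     | sort -u | images_outside_registry 192.168.10.10:35000/
```

Any line it prints is an image that would need external network access in the air-gapped environment.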


Checking the k8s node

root@k8s-node1:~# crictl images

IMAGE TAG IMAGE ID SIZE

192.168.10.10:35000/coredns/coredns v1.12.1 138784d87c9c5 73.2MB

192.168.10.10:35000/flannel/flannel-cni-plugin v1.7.1-flannel1 e5bf9679ea8c3 11.4MB

192.168.10.10:35000/flannel/flannel v0.27.3 cadcae92e6360 102MB

192.168.10.10:35000/kube-apiserver v1.34.3 cf65ae6c8f700 84.8MB

192.168.10.10:35000/kube-controller-manager v1.34.3 7ada8ff13e54b 72.6MB

192.168.10.10:35000/kube-proxy v1.34.3 4461daf6b6af8 75.9MB

192.168.10.10:35000/kube-scheduler v1.34.3 2f2aa21d34d2d 51.6MB

192.168.10.10:35000/metrics-server/metrics-server v0.8.0 bc6c1e09a843d 80.8MB

192.168.10.10:35000/pause 3.10.1 d7b100cd9a77b 517kB

root@k8s-node1:~# tree /etc/containerd/

/etc/containerd/

├── certs.d

│ └── 192.168.10.10:35000

│ └── hosts.toml

├── config.toml

└── cri-base.json

3 directories, 3 files

root@k8s-node1:~# cat /etc/containerd/config.toml

version = 3

root = "/var/lib/containerd"

state = "/run/containerd"

oom_score = 0

[grpc]

max_recv_message_size = 16777216

max_send_message_size = 16777216

[debug]

address = ""

level = "info"

format = ""

uid = 0

gid = 0

[metrics]

address = ""

grpc_histogram = false

[plugins]

[plugins."io.containerd.cri.v1.runtime"]

max_container_log_line_size = 16384

enable_unprivileged_ports = false

enable_unprivileged_icmp = false

enable_selinux = false

disable_apparmor = false

tolerate_missing_hugetlb_controller = true

disable_hugetlb_controller = true

[plugins."io.containerd.cri.v1.runtime".containerd]

default_runtime_name = "runc"

[plugins."io.containerd.cri.v1.runtime".containerd.runtimes]

[plugins."io.containerd.cri.v1.runtime".containerd.runtimes.runc]

runtime_type = "io.containerd.runc.v2"

base_runtime_spec = "/etc/containerd/cri-base.json"

[plugins."io.containerd.cri.v1.runtime".containerd.runtimes.runc.options]

Root = ""

SystemdCgroup = true

BinaryName = "/usr/local/bin/runc"

[plugins."io.containerd.cri.v1.images"]

snapshotter = "overlayfs"

image_pull_progress_timeout = "5m"

[plugins."io.containerd.cri.v1.images".pinned_images]

sandbox = "192.168.10.10:35000/pause:3.10.1"

[plugins."io.containerd.cri.v1.images".registry]

config_path = "/etc/containerd/certs.d"

[plugins."io.containerd.nri.v1.nri"]

disable = false

root@k8s-node1:~# cat /etc/containerd/certs.d/192.168.10.10\:35000/hosts.toml

server = "https://192.168.10.10:35000"

[host."http://192.168.10.10:35000"]

capabilities = ["pull","resolve"]

skip_verify = true

override_path = false
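In this setup Kubespray generates the `hosts.toml` shown above. For a host that Kubespray does not manage, the same file could be written by hand. This is only a sketch: `write_hosts_toml` is a hypothetical helper, and it simply reproduces the values from the output above, including the plain-HTTP host entry with `skip_verify = true`.

```shell
#!/usr/bin/env bash
# write_hosts_toml CERTS_DIR REGISTRY  (hypothetical helper)
# Creates CERTS_DIR/REGISTRY/hosts.toml with the same fields as the
# Kubespray-generated file shown above.
write_hosts_toml() {
  local certs_dir="$1" registry="$2"
  local dir="${certs_dir}/${registry}"
  mkdir -p "$dir"
  cat > "${dir}/hosts.toml" <<EOF
server = "https://${registry}"
[host."http://${registry}"]
  capabilities = ["pull","resolve"]
  skip_verify = true
  override_path = false
EOF
}

# Usage (the directory must match config_path in config.toml):
#   write_hosts_toml /etc/containerd/certs.d 192.168.10.10:35000
```

The directory name has to equal the registry host and port exactly, because containerd looks the file up under `config_path` by that name.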

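Support for Kubespray offline installation

The contrib/offline directory is a collection of tools for collecting, processing, and distributing, ahead of time, every artifact needed to install Kubespray in an air-gapped environment.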

[Preparation node]

├─ containerd

├─ local registry (image server)

├─ nginx (file server)

│ ├─ /rpms

│ ├─ /files

│ └─ /pypi

└─ offline yum/pip configuration

Once the offline directory has been used, the preparation node ends up with the structure shown above.
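Before pointing the cluster nodes at the preparation node, it can help to confirm that the three file-server paths exist. The sketch below uses a hypothetical helper, `missing_fileserver_dirs`. The document root is passed as an argument because the actual nginx root depends on how the file server was set up.

```shell
#!/usr/bin/env bash
# missing_fileserver_dirs ROOT  (hypothetical helper)
# Prints each of ROOT/rpms, ROOT/files, ROOT/pypi that is not a directory.
# Returns 0 when all three exist, 1 otherwise.
missing_fileserver_dirs() {
  local root="$1" d missing=0
  for d in rpms files pypi; do
    if [ ! -d "${root}/${d}" ]; then
      echo "${root}/${d}"
      missing=1
    fi
  done
  return "$missing"
}

# Usage (replace the path with the real nginx document root):
#   missing_fileserver_dirs /path/to/nginx/root || echo "file server incomplete"
```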